Matching Method for Airborne and Simulated Synthetic Aperture Radar Images Based on Local Fitting Consistency


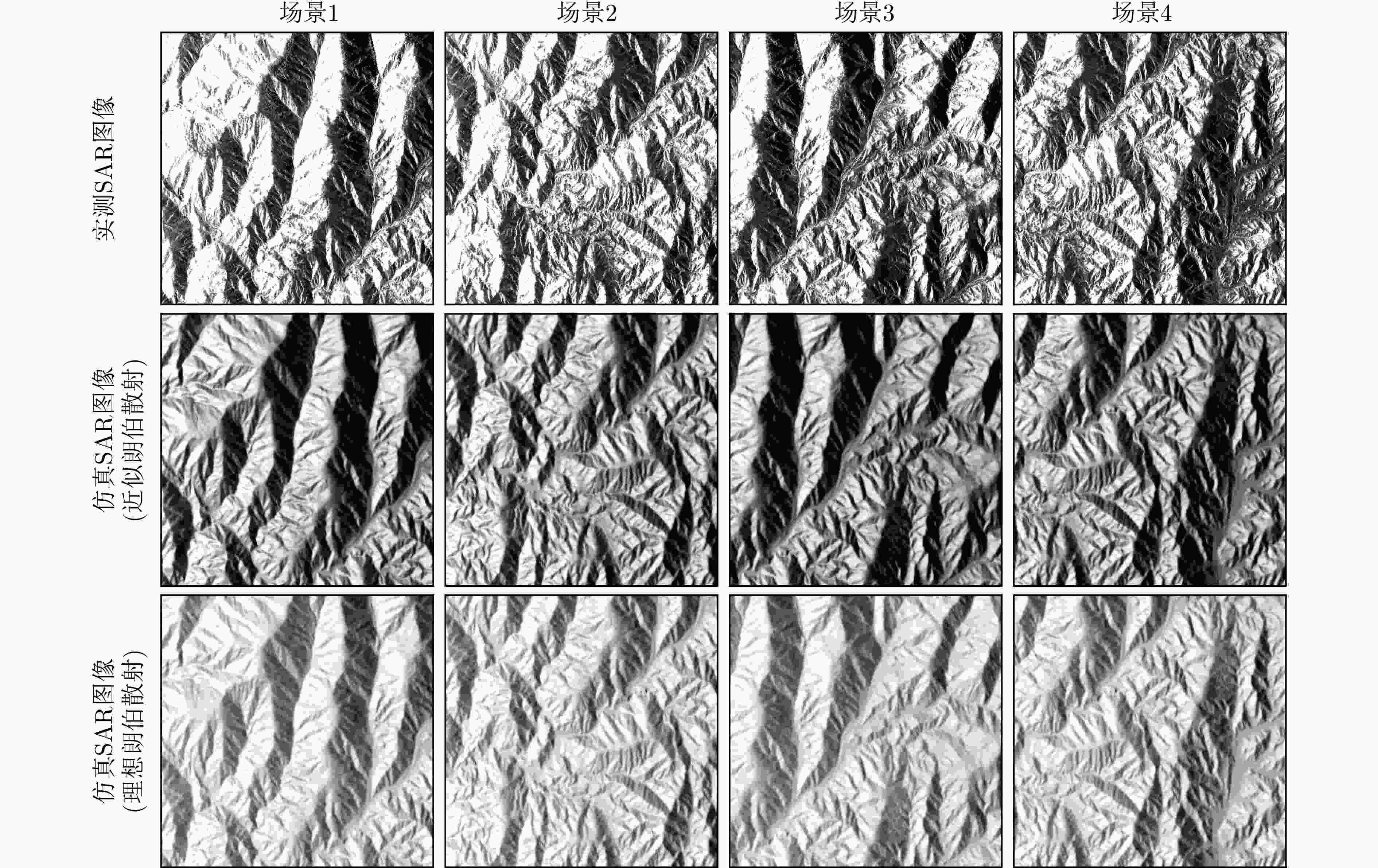

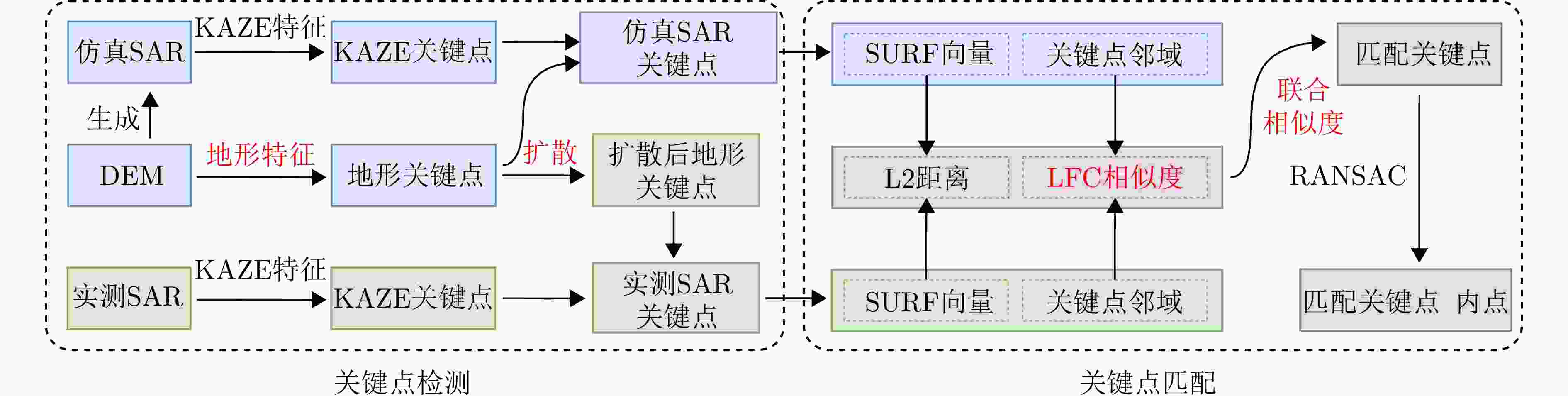

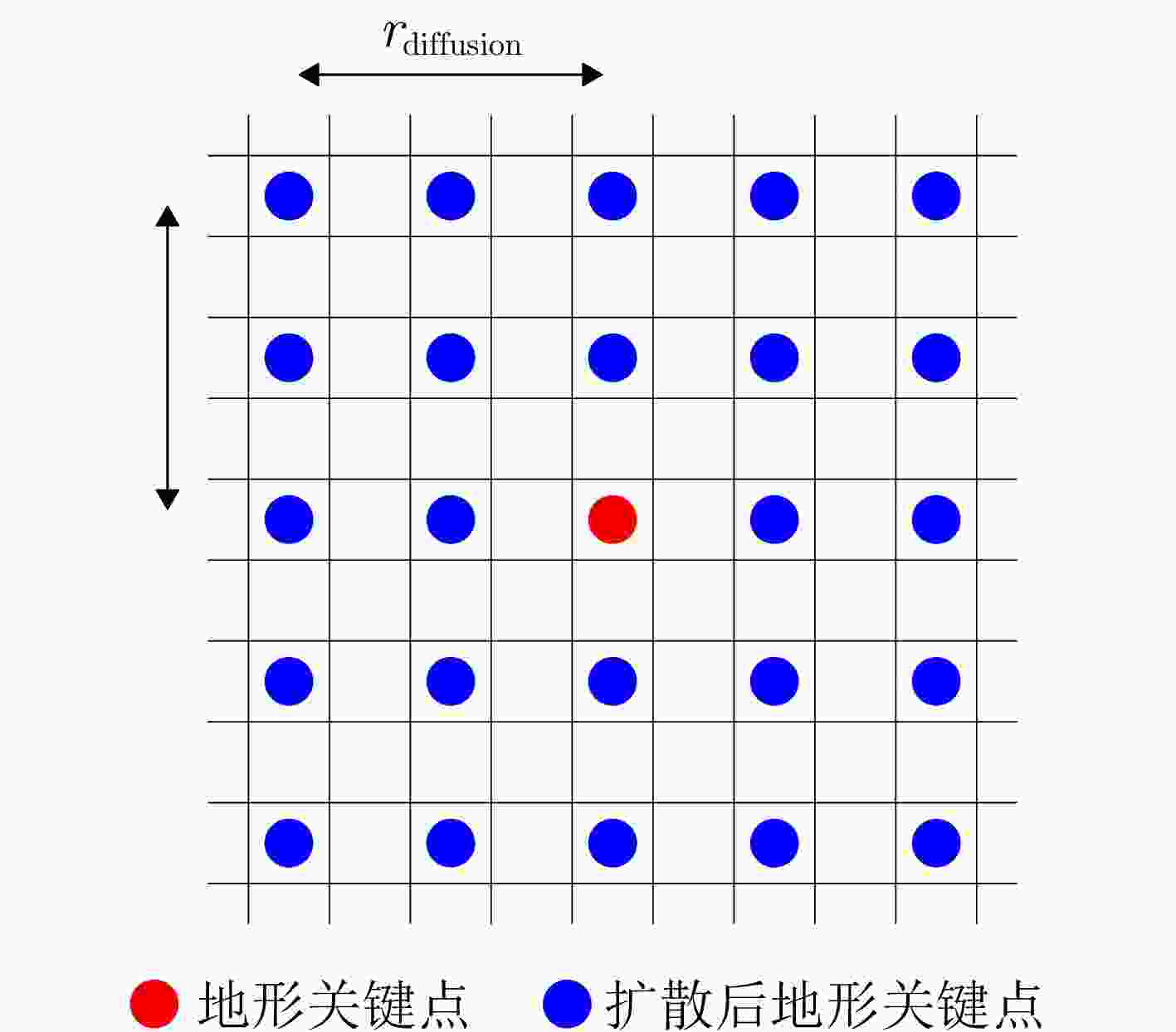

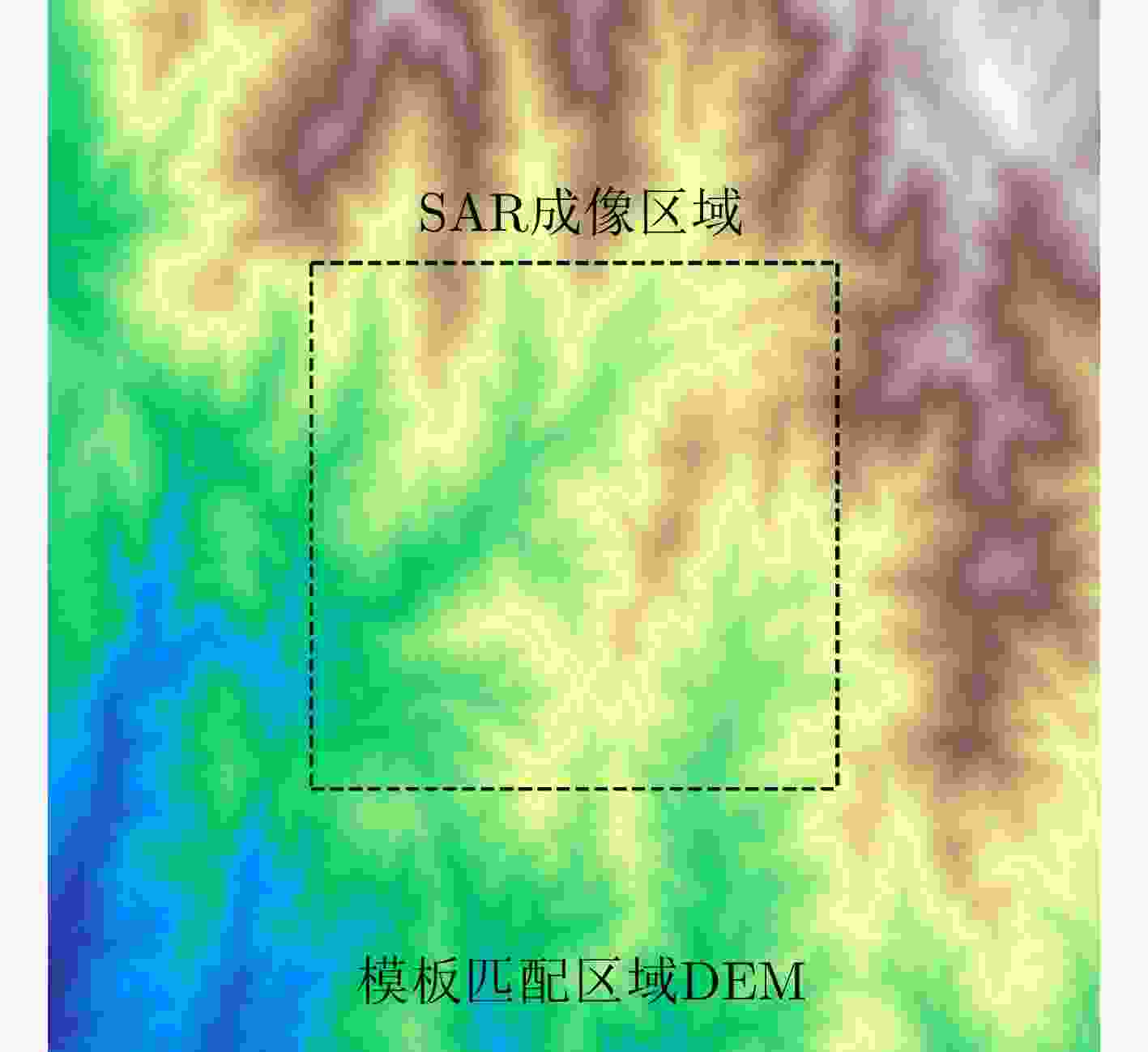

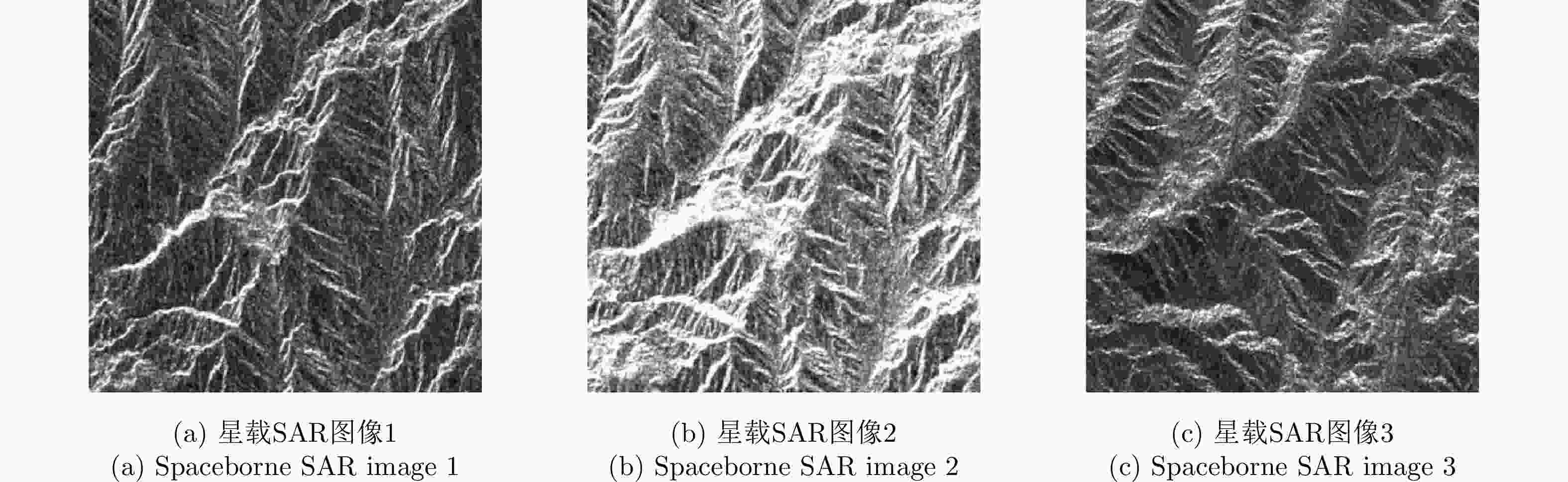

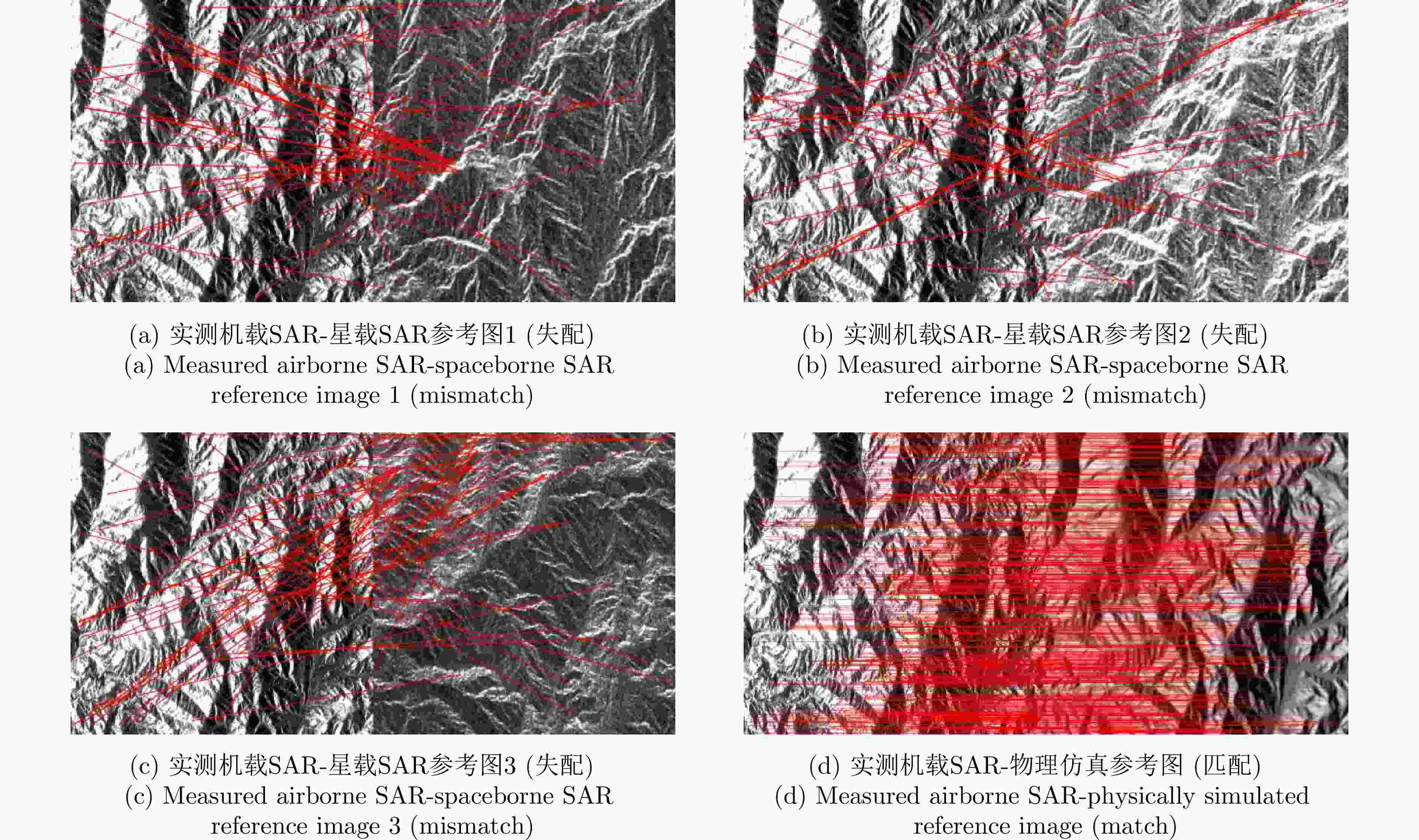

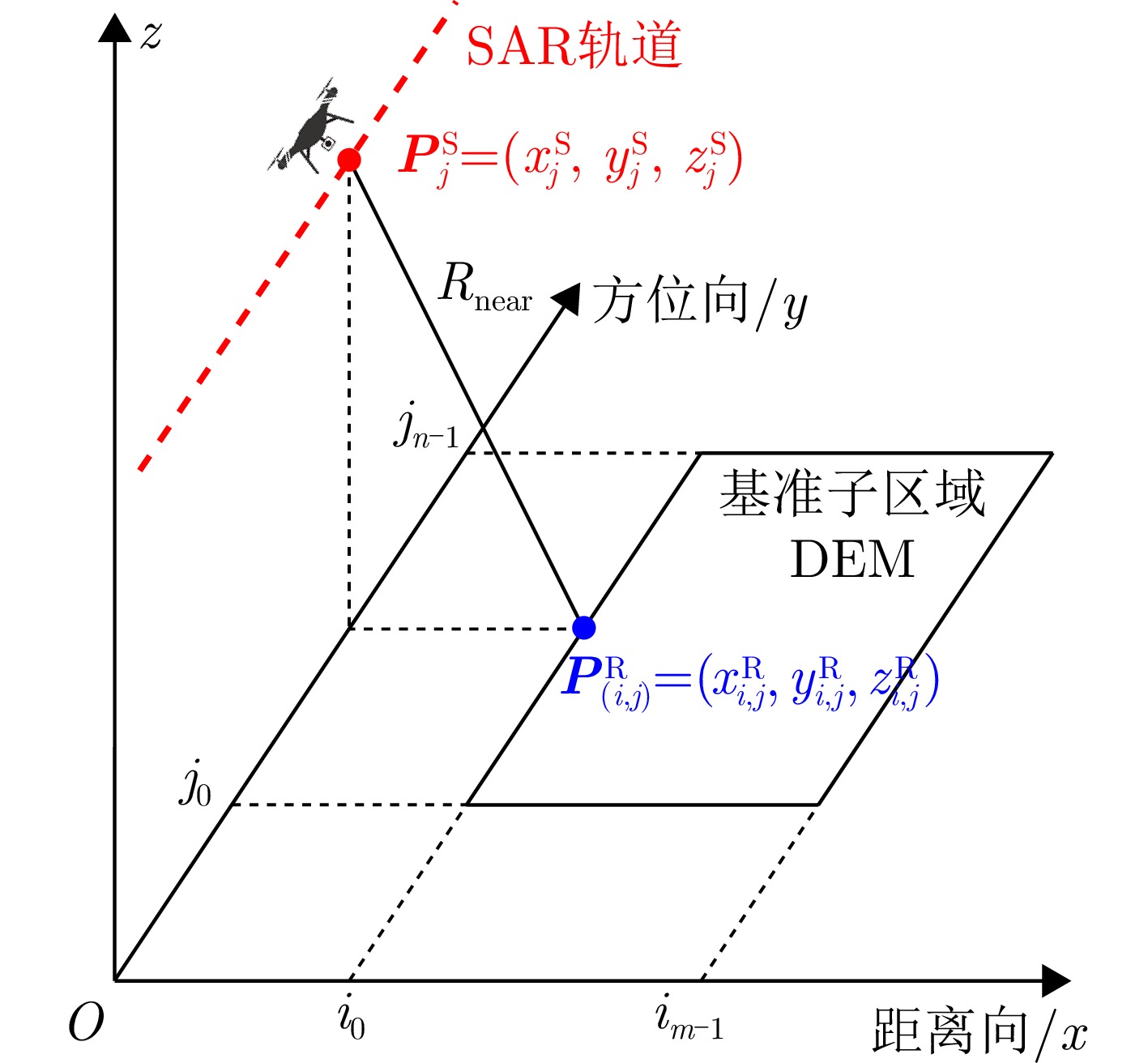

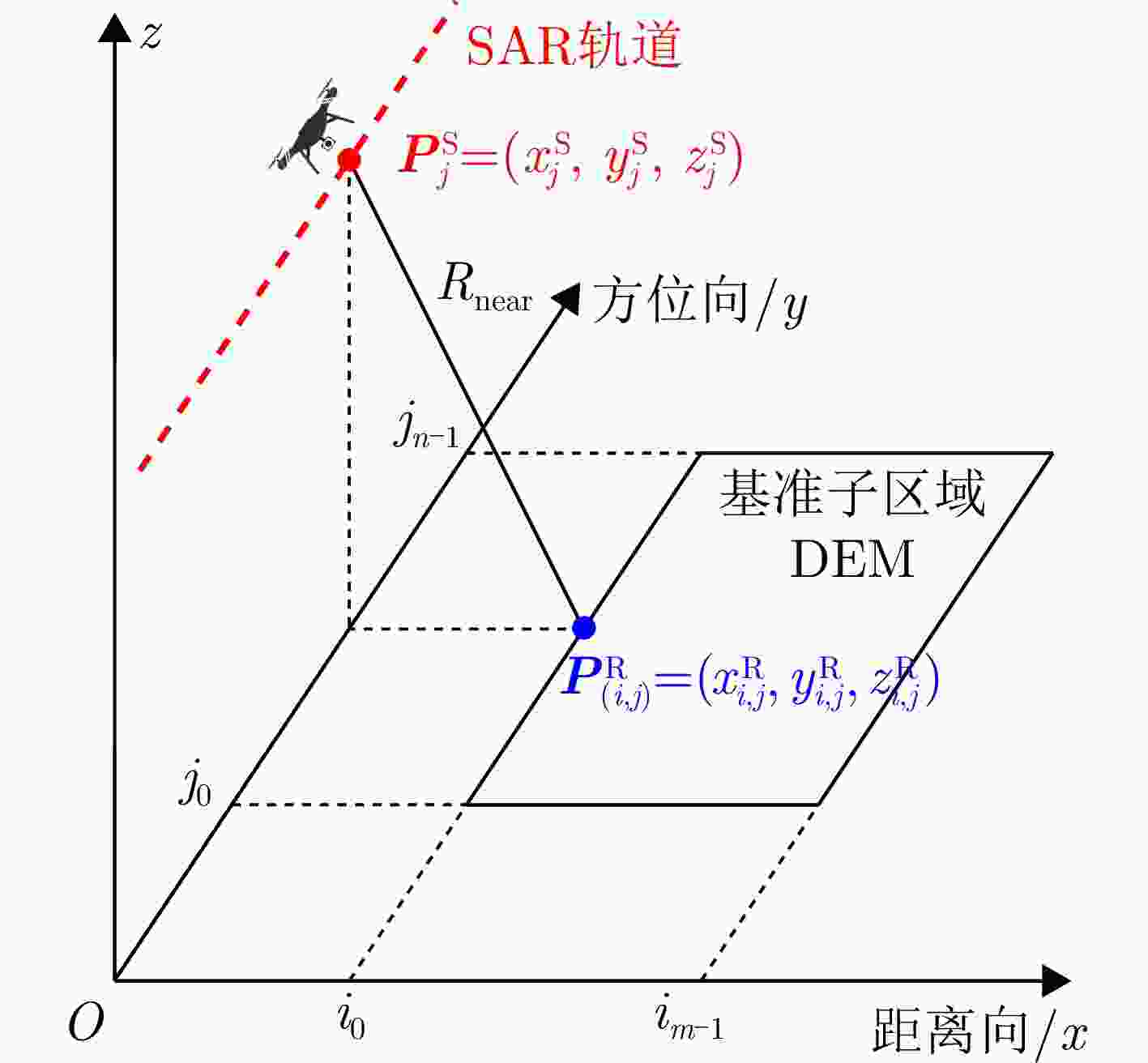

Abstract: Differences in imaging geometry are the main cause of relative feature distortion between Synthetic Aperture Radar (SAR) images and sharply increase the difficulty of SAR image matching. Using a simulated SAR image as the reference fundamentally removes the relative distortion caused by these geometric differences. However, measured and simulated SAR images still differ in scattering and noise, and existing matching algorithms mostly rely on symmetric keypoint detection and descriptor matching, so the number and precision of matched points leave room for improvement. To address these problems, this paper proposes an asymmetric Local Fitting Consistency (LFC) similarity metric based on the local statistical features of measured and simulated SAR images, and designs coarse and fine matching methods for airborne and simulated SAR images on top of it. Terrain features are then introduced to increase the diversity of keypoint detection, yielding robust matching between measured airborne SAR images and simulated SAR images. Experimental results show that, under different noise levels, the LFC-based matching method is more robust and accurate, and significantly outperforms current mainstream algorithms in matching precision and other metrics.


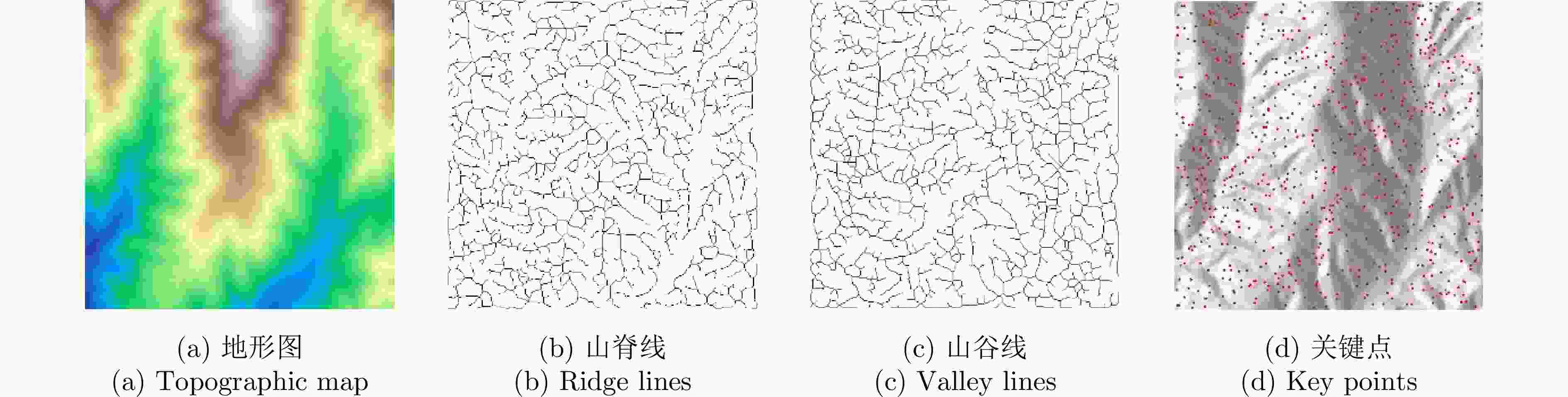

1. Terrain feature point detection method

Input: digital elevation model matrix $ \boldsymbol{D} $

Output: binary feature point mask matrix $ \boldsymbol{K} $

(1) Ridge/valley line skeleton extraction

$ ({\boldsymbol{R}}_{\text{skel}},{\boldsymbol{V}}_{\text{skel}},{\boldsymbol{R}}_{\text{raw}},{\boldsymbol{V}}_{\text{raw}},{\boldsymbol{R}}_{\text{dil}},{\boldsymbol{V}}_{\text{dil}}) \leftarrow \text{DetectRidgesValleys}(\boldsymbol{D}) $

where $ {\boldsymbol{R}}_{\text{skel}} $ and $ {\boldsymbol{V}}_{\text{skel}} $ are the skeletonized ridge-line and valley-line binary matrices, respectively.

(2) Skeleton branch pruning

Set the minimum branch length threshold $ {L}_{\min } = 15 $ and process the ridge and valley skeletons separately:

$ {\boldsymbol{R}}_{\mathrm{skel}}^{\mathrm{proc}}\leftarrow \text{ProcessSkeletons}({\boldsymbol{R}}_{\mathrm{skel}},{L}_{\min }) $, $ {\boldsymbol{V}}_{\mathrm{skel}}^{\mathrm{proc}}\leftarrow \text{ProcessSkeletons}({\boldsymbol{V}}_{\mathrm{skel}},{L}_{\min }) $

(3) Branch point extraction

Extract the branch points from each processed skeleton:

$ {\boldsymbol{B}}_{R}\leftarrow \text{ExtractBranchPoints}(\boldsymbol{R}_{\mathrm{skel}}^{\mathrm{proc}}) $, $ {\boldsymbol{B}}_{V}\leftarrow \text{ExtractBranchPoints}(\boldsymbol{V}_{\mathrm{skel}}^{\mathrm{proc}}) $

(4) Feature point fusion

Initialize a binary matrix $ \boldsymbol{K} $ of the same size as $ \boldsymbol{D} $ and set the branch point positions to true:

$ {\boldsymbol{K}}[{\boldsymbol{B}}_{R}]\leftarrow \text{True}, {\boldsymbol{K}}[{\boldsymbol{B}}_{V}]\leftarrow \text{True} $

(5) Return $ \boldsymbol{K} $
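Steps (3) to (5) of the terrain feature point detection can be sketched in plain Python as follows. This is a minimal illustration, not the authors' implementation: `DetectRidgesValleys` and `ProcessSkeletons` are not specified in this excerpt and are left out, the toy skeletons are hypothetical, and the crossing-number test used to decide what counts as a branch point is an assumed criterion.

```python
from typing import List

Mask = List[List[bool]]

# Clockwise ring of 8-neighbour offsets (row, col), starting from north.
RING = [(-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1), (-1, -1)]


def crossing_number(skel: Mask, i: int, j: int) -> int:
    """Count background-to-skeleton transitions around pixel (i, j)."""
    rows, cols = len(skel), len(skel[0])

    def at(a: int, b: int) -> bool:
        # Pixels outside the grid are treated as background.
        return 0 <= a < rows and 0 <= b < cols and bool(skel[a][b])

    ring = [at(i + di, j + dj) for di, dj in RING]
    return sum(1 for k in range(8) if not ring[k] and ring[(k + 1) % 8])


def extract_branch_points(skel: Mask) -> Mask:
    """Assumed criterion: a skeleton pixel where three or more arms meet."""
    return [
        [bool(skel[i][j]) and crossing_number(skel, i, j) >= 3
         for j in range(len(skel[0]))]
        for i in range(len(skel))
    ]


def fuse_keypoints(b_r: Mask, b_v: Mask) -> Mask:
    """Step (4): K is true wherever a ridge or valley branch point lies."""
    return [[r or v for r, v in zip(row_r, row_v)]
            for row_r, row_v in zip(b_r, b_v)]


def from_rows(rows: List[str]) -> Mask:
    """Helper for the toy example: '#' marks a skeleton pixel."""
    return [[c == "#" for c in row] for row in rows]


# Hypothetical pruned skeletons on a 5x5 grid.
R_proc = from_rows([".....", ".....", "#####", "..#..", "..#.."])  # T junction
V_proc = from_rows([".#...", "###..", ".#...", ".....", "....."])  # plus junction

K = fuse_keypoints(extract_branch_points(R_proc), extract_branch_points(V_proc))
keypoints = [(i, j) for i, row in enumerate(K) for j, v in enumerate(row) if v]
print(keypoints)
```

The crossing number is used here instead of a plain neighbour count because, with 8-connectivity, the pixels next to a junction also have three or more neighbours and would otherwise be flagged as spurious branch points.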

Table 1. Parameter settings of main methods in SAR image coarse matching experiment

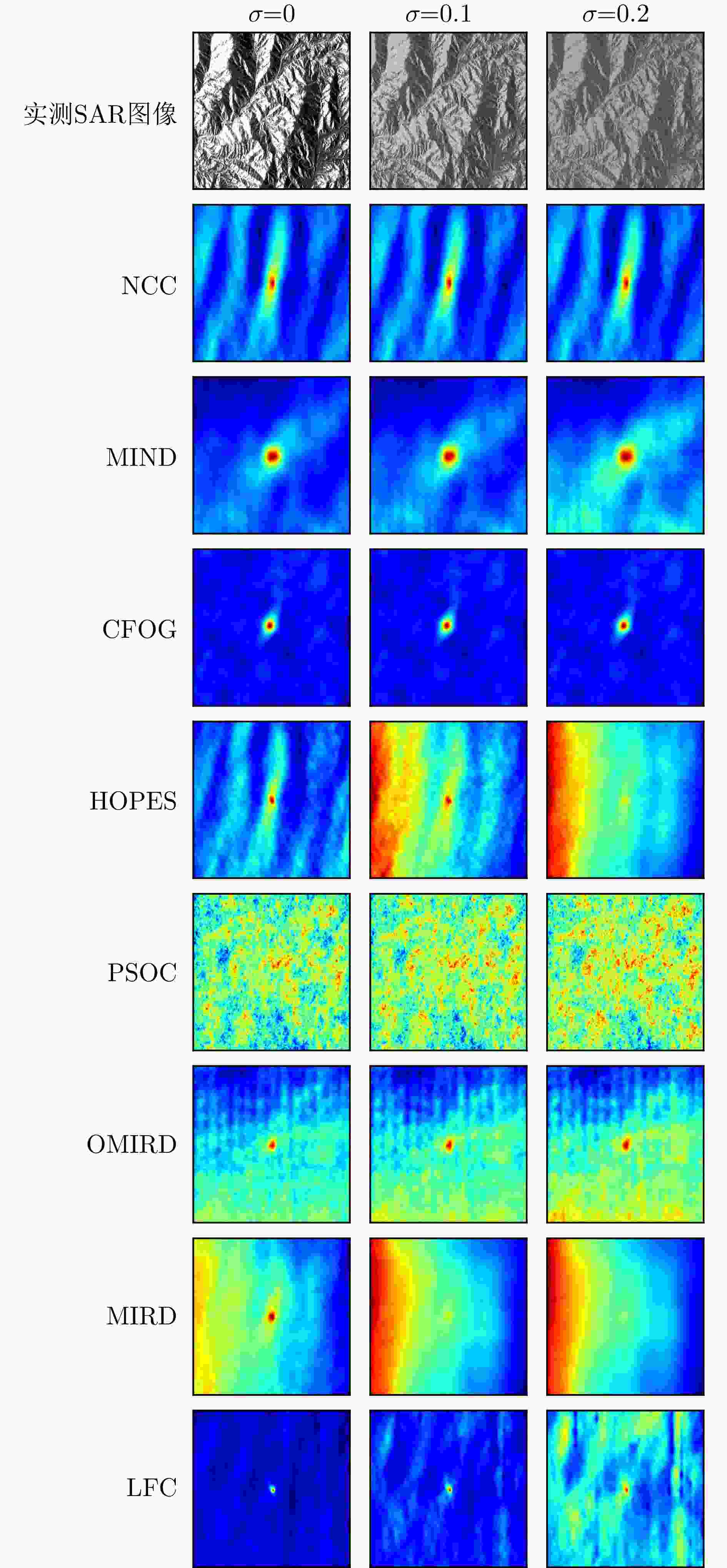

| Method | Main parameters and preset values | Source |
| --- | --- | --- |
| MIND | Patch size = 3×3×3; Search region: 6-neighbourhood | Ref. [24] |
| CFOG | Gaussian STD = 0.8; Channel number m = 9 | Ref. [12] |
| HOPES | kMSG = 1.4, NScale = 4, Orientation M = 8 | Ref. [25] |
| PSOC | Fast-MSG = 3, $ {\sigma}_{1}=2 $, $ {\sigma}_{i}/{\sigma}_{i-1}=1.6 $ | Ref. [26] |
| MIRD | Gaussian kernel size: 9×9; k = 0.9 | Ref. [11] |
| OMIRD | Gaussian kernel size: 3×3 | Ref. [27] |
| LFC | r = 32 | - |

Table 2. Deviation results of heatmap peaks in x and y directions for different template matching methods under various noise conditions (pixel)

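| Template matching method | Noise variance 0 | Noise variance 0.1 | Noise variance 0.2 |
| --- | --- | --- | --- |
| NCC | (+3,+3) | (+3,+3) | (+3,+3) |
| MIND | (+3,+3) | (+3,+3) | (+3,+4) |
| CFOG | (-2,-2) | (-2,-3) | (-2,-3) |
| HOPES | (+2,+2) | (+2,+3) | (-126,+3) |
| PSOC | (+12,-13) | (+12,-13) | (+107,-18) |
| OMIRD | (+3,+3) | (+3,+3) | (+3,+3) |
| MIRD | (+3,+3) | (-127,-21) | (-127,-21) |
| LFC | **(+1,+2)** | **(+2,+2)** | **(+2,+2)** |

Note: Bold values in the table are the best results.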

Table 3. Matching errors of different template matching methods under various noise conditions (pixel)

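| Template matching method | Noise variance 0 | Noise variance 0.1 | Noise variance 0.2 |
| --- | --- | --- | --- |
| NCC | 16.97 | 16.97 | 16.97 |
| MIND | 16.97 | 16.97 | 20.00 |
| CFOG | 11.31 | 14.42 | 14.42 |
| HOPES | 11.31 | 14.42 | 504.14 |
| PSOC | 70.77 | 70.77 | 434.01 |
| OMIRD | 16.97 | 16.97 | 16.97 |
| MIRD | 16.97 | 514.90 | 514.90 |
| LFC | **8.94** | **11.31** | **11.31** |

Note: Bold values in the table are the best results.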
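The matching errors in Table 3 are numerically consistent with the peak offsets in Table 2: each error equals 4 times the Euclidean norm of the corresponding (x, y) offset, to within rounding. The short check below illustrates this. The factor 4 is inferred purely from the tabulated numbers (it may reflect a downsampling factor in the coarse stage) and is not stated in this excerpt, so treat `SCALE` as an assumption.

```python
import math

# Peak offsets (dx, dy) from Table 2 for noise variance 0, 0.1 and 0.2.
offsets = {
    "NCC":   [(3, 3), (3, 3), (3, 3)],
    "MIND":  [(3, 3), (3, 3), (3, 4)],
    "CFOG":  [(-2, -2), (-2, -3), (-2, -3)],
    "HOPES": [(2, 2), (2, 3), (-126, 3)],
    "PSOC":  [(12, -13), (12, -13), (107, -18)],
    "OMIRD": [(3, 3), (3, 3), (3, 3)],
    "MIRD":  [(3, 3), (-127, -21), (-127, -21)],
    "LFC":   [(1, 2), (2, 2), (2, 2)],
}

# Matching errors (pixel) from Table 3, same column order.
errors = {
    "NCC":   [16.97, 16.97, 16.97],
    "MIND":  [16.97, 16.97, 20.00],
    "CFOG":  [11.31, 14.42, 14.42],
    "HOPES": [11.31, 14.42, 504.14],
    "PSOC":  [70.77, 70.77, 434.01],
    "OMIRD": [16.97, 16.97, 16.97],
    "MIRD":  [16.97, 514.90, 514.90],
    "LFC":   [8.94, 11.31, 11.31],
}

SCALE = 4.0  # assumption: inferred from the two tables, not given in the text


def matching_error(dx: float, dy: float, scale: float = SCALE) -> float:
    """Scaled Euclidean distance of the heatmap peak from the target."""
    return scale * math.hypot(dx, dy)


# Largest disagreement between the recomputed and the tabulated errors.
max_gap = max(
    abs(matching_error(dx, dy) - err)
    for method in offsets
    for (dx, dy), err in zip(offsets[method], errors[method])
)
print(f"largest gap to Table 3: {max_gap:.4f} pixel")
```

Every recomputed value agrees with Table 3 to within two-decimal rounding, which makes it easy to see that the large errors of HOPES, PSOC, and MIRD come from peaks that jump by more than 100 pixels along x.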

Table 4. Comparison of heatmap peak gradients for different template matching methods under various noise conditions

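| Template matching method | Noise variance 0 | Noise variance 0.1 | Noise variance 0.2 |
| --- | --- | --- | --- |
| NCC | 106.24 | 106.17 | 106.59 |
| MIND | 130.12 | 120.26 | 100.96 |
| CFOG | 58.29 | 58.73 | 58.45 |
| HOPES | 67.68 | 56.84 | 10.33(×) |
| PSOC | 174.20(×) | 186.40(×) | 246.57(×) |
| OMIRD | 114.53 | 85.53 | 80.53 |
| MIRD | 143.44 | 143.03(×) | 141.47(×) |
| LFC | **629.36** | **321.22** | **226.07** |

Note: Some matching errors of HOPES, PSOC, and MIRD are so large that the peak deviates severely from the target point. We therefore consider the corresponding heatmap peaks no longer meaningful as references and mark them with "×" in the table. Bold values are the best results.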

Table 5. Parameter settings of main methods in SAR image fine matching experiment

| Method | Main parameters and preset values | Source |
| --- | --- | --- |
| KAZE-SAR | Default | Ref. [6] |
| SAR-SIFT | DistRatio = 0.9; LayerNum = 8 | Ref. [5] |
| ASS | Orientations = 8; Radial bins num = 3; Region radius = 42 | Ref. [28] |
| FED-HOPC | Template size = 100; searchRad = 10; Gamma1 = 0.15 | Ref. [29] |
| M2FF | Filter orientations = 4; Filter scales = 4 | Ref. [30] |
| LFC / Sjoint | r = 32, $ {w}_{1}=30 $, $ {w}_{2}=64 $ | - |

Table 6. Evaluation of matching accuracy for different methods under various noise conditions

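| Method | RMSE (pixel), σ = 0 | RMSE (pixel), σ = 0.1 | RMSE (pixel), σ = 0.2 | TNMs (count), σ = 0 | TNMs (count), σ = 0.1 | TNMs (count), σ = 0.2 | NCMs (count), σ = 0 | NCMs (count), σ = 0.1 | NCMs (count), σ = 0.2 | CMR (ratio), σ = 0 | CMR (ratio), σ = 0.1 | CMR (ratio), σ = 0.2 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| KAZE-SAR | 1.94 | 1.96 | 1.93 | 806 | 629 | 278 | 397 | 294 | 141 | 0.493 | 0.467 | 0.507 |
| SAR-SIFT | 1.57 | 3.21 | 3.94 | 122 | 34 | 14 | 86 | 8 | 2 | 0.705 | 0.235 | 0.143 |
| ASS | 1.63 | 2.12 | 1.98 | 590 | 333 | 291 | 416 | 180 | 141 | 0.705 | 0.541 | 0.485 |
| FED-HOPC | 2.93 | 5.35 | 10.15 | 100 | 100 | 100 | 36 | 23 | 6 | 0.360 | 0.230 | 0.060 |
| M2FF | 1.41 | 1.60 | 9.27 | 317 | 213 | 27 | 239 | 156 | 11 | 0.754 | 0.732 | 0.407 |
| Proposed | **1.39** | **1.39** | **1.48** | **1130** | **1334** | **925** | **1017** | **1213** | **809** | **0.900** | **0.909** | **0.875** |

Note: Bold values in the table are the best results.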
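The CMR column of Table 6 is consistent with the ratio NCMs / TNMs for every method and noise level. The sketch below recomputes it from the tabulated counts. The excerpt does not define CMR explicitly, so reading it as this ratio is an assumption supported only by the numbers, and the variable names are illustrative.

```python
# Total matches (TNMs) and correct matches (NCMs) from Table 6, for σ = 0, 0.1, 0.2.
tnms = {
    "KAZE-SAR": (806, 629, 278),
    "SAR-SIFT": (122, 34, 14),
    "ASS":      (590, 333, 291),
    "FED-HOPC": (100, 100, 100),
    "M2FF":     (317, 213, 27),
    "Proposed": (1130, 1334, 925),
}
ncms = {
    "KAZE-SAR": (397, 294, 141),
    "SAR-SIFT": (86, 8, 2),
    "ASS":      (416, 180, 141),
    "FED-HOPC": (36, 23, 6),
    "M2FF":     (239, 156, 11),
    "Proposed": (1017, 1213, 809),
}
# CMR values as printed in Table 6.
cmr_table = {
    "KAZE-SAR": (0.493, 0.467, 0.507),
    "SAR-SIFT": (0.705, 0.235, 0.143),
    "ASS":      (0.705, 0.541, 0.485),
    "FED-HOPC": (0.360, 0.230, 0.060),
    "M2FF":     (0.754, 0.732, 0.407),
    "Proposed": (0.900, 0.909, 0.875),
}


def cmr(ncm: int, tnm: int) -> float:
    """Assumed definition: fraction of all matches that are correct."""
    return ncm / tnm


# Largest disagreement between the recomputed and the tabulated CMR.
max_gap = max(
    abs(cmr(n, t) - c)
    for method in tnms
    for n, t, c in zip(ncms[method], tnms[method], cmr_table[method])
)

for method in tnms:
    values = ", ".join(f"{cmr(n, t):.3f}" for n, t in zip(ncms[method], tnms[method]))
    print(f"{method:9s} {values}")
```

Read this way, the tabulated CMR values are fractions between 0 and 1 rather than percentages.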

[1] JIN Guowang, ZHANG Hongmin, and XU Qing. Radargrammetry[M]. Beijing: Surveying and Mapping Press, 2015.

[2] SUN Xiaokun, YUN Zekai, HU Canbin, et al. End-to-end registration algorithm for high-resolution multi-view SAR images[J]. Journal of Radars, 2025, 14(2): 389–404. doi: 10.12000/JR24211.

[3] LOWE D G. Distinctive image features from scale-invariant keypoints[J]. International Journal of Computer Vision, 2004, 60(2): 91–110. doi: 10.1023/B:VISI.0000029664.99615.94.

[4] ALCANTARILLA P F, BARTOLI A, and DAVISON A J. KAZE features[C]. The 12th European Conference on Computer Vision, Florence, Italy, 2012: 214–227. doi: 10.1007/978-3-642-33783-3_16.

[5] DELLINGER F, DELON J, GOUSSEAU Y, et al. SAR-SIFT: A SIFT-like algorithm for applications on SAR images[C]. 2012 IEEE International Geoscience and Remote Sensing Symposium, Munich, Germany, 2012: 3478–3481. doi: 10.1109/IGARSS.2012.6350671.

[6] POURFARD M, HOSSEINIAN T, SAEIDI R, et al. KAZE-SAR: SAR image registration using KAZE detector and modified SURF descriptor for tackling speckle noise[J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 5207612. doi: 10.1109/TGRS.2021.3084411.

[7] WANG Linhui, XIANG Yuming, YOU Hongjian, et al. A robust multiscale edge detection method for accurate SAR image registration[J]. IEEE Geoscience and Remote Sensing Letters, 2023, 20: 4006305. doi: 10.1109/LGRS.2023.3279141.

[8] SUN Jianjun, ZHAO Yan, LI Xinbo, et al. Fractional order spectrum in SAR image registration[C]. 2024 IEEE International Conference on Multimedia and Expo (ICME), Niagara Falls, Canada, 2024: 1–6. doi: 10.1109/ICME57554.2024.10688291.

[9] XIANG Deliang, PAN Xiaoyu, DING Huaiyue, et al. Two-stage registration of SAR images with large distortion based on superpixel segmentation[J]. IEEE Transactions on Geoscience and Remote Sensing, 2024, 62: 5211115. doi: 10.1109/TGRS.2024.3392971.

[10] YE Yuanxin, SHAN Jie, BRUZZONE L, et al. Robust registration of multimodal remote sensing images based on structural similarity[J]. IEEE Transactions on Geoscience and Remote Sensing, 2017, 55(5): 2941–2958. doi: 10.1109/TGRS.2017.2656380.

[11] LIU Xuecong, TENG Xichao, LUO Jing, et al. Robust multi-sensor image matching based on normalized self-similarity region descriptor[J]. Chinese Journal of Aeronautics, 2024, 37(1): 271–286. doi: 10.1016/j.cja.2023.10.003.

[12] YE Yuanxin, BRUZZONE L, SHAN Jie, et al. Fast and robust matching for multimodal remote sensing image registration[J]. IEEE Transactions on Geoscience and Remote Sensing, 2019, 57(11): 9059–9070. doi: 10.1109/TGRS.2019.2924684.

[13] XIANG Deliang, XU Yihao, CHENG Jianda, et al. An algorithm based on a feature interaction-based keypoint detector and Sim-CSPNet for SAR image registration[J]. Journal of Radars, 2022, 11(6): 1081–1097. doi: 10.12000/JR22110.

[14] ZHAO Xuanran, WU Yan, HU Xin, et al. A novel dual-branch global and local feature extraction network for SAR and optical image registration[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2024, 17: 17637–17650. doi: 10.1109/JSTARS.2024.3435684.

[15] WU Wei, XIAN Yong, SU Juan, et al. A Siamese template matching method for SAR and optical image[J]. IEEE Geoscience and Remote Sensing Letters, 2022, 19: 4017905. doi: 10.1109/LGRS.2021.3108579.

[16] HU Xin, WU Yan, LIU Xingyu, et al. Intra- and inter-modal graph attention network and contrastive learning for SAR and optical image registration[J]. IEEE Transactions on Geoscience and Remote Sensing, 2023, 61: 5220216. doi: 10.1109/TGRS.2023.3328368.

[17] TOSS T, DÄMMERT P, SJANIC Z, et al. Navigation with SAR and 3D-map aiding[C]. 2015 18th International Conference on Information Fusion (Fusion), Washington, USA, 2015: 1505–1510.

[18] LI He, QIN Zhiyuan, and JIN Guowang. Simulation of SAR images based on DEM[J]. Journal of Geomatics Science and Technology, 2008, 25(4): 296–299.

[19] ZHANG Hongmin, JIN Guowang, XU Qing, et al. Matching strategy of airborne SAR image and simulated SAR image[J]. Journal of Geomatics Science and Technology, 2013, 30(2): 144–148. doi: 10.3969/j.issn.1673-6338.2013.02.009.

[20] HE Kaiming, SUN Jian, and TANG Xiaoou. Guided image filtering[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2013, 35(6): 1397–1409. doi: 10.1109/TPAMI.2012.213.

[21] Jet Propulsion Laboratory. UAVSAR L-band Synthetic Aperture Radar (SAR) repeat pass interferometry products[R]. NASA Alaska Satellite Facility DAAC, 2024.

[22] European Space Agency. Copernicus global digital elevation models[R]. OT.032021.4326.1, 2021.

[23] European Space Agency. Sentinel-1[R]. NASA Alaska Satellite Facility DAAC, 2025.

[24] HEINRICH M P, JENKINSON M, BHUSHAN M, et al. MIND: Modality independent neighbourhood descriptor for multi-modal deformable registration[J]. Medical Image Analysis, 2012, 16(7): 1423–1435. doi: 10.1016/j.media.2012.05.008.

[25] LI Shuo, LV Xiaolei, REN Jian, et al. A robust 3D density descriptor based on histogram of oriented primary edge structure for SAR and optical image co-registration[J]. Remote Sensing, 2022, 14(3): 630. doi: 10.3390/rs14030630.

[26] LI Shuo, LV Xiaolei, WANG Hao, et al. A novel fast and robust multimodal images matching method based on primary structure-weighted orientation consistency[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2023, 16: 9916–9927. doi: 10.1109/JSTARS.2023.3325577.

[27] TENG Xichao, LIU Xuecong, LI Zhang, et al. OMIRD: Orientated modality independent region descriptor for optical-to-SAR image matching[J]. IEEE Geoscience and Remote Sensing Letters, 2023, 20: 4003405. doi: 10.1109/LGRS.2023.3256186.

[28] XIONG Xin, JIN Guowang, XU Qing, et al. Robust registration algorithm for optical and SAR images based on adjacent self-similarity feature[J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 5233117. doi: 10.1109/TGRS.2022.3197357.

[29] YE Yibin, WANG Qinwei, ZHAO Hong, et al. Fast and robust optical-to-SAR remote sensing image registration using region-aware phase descriptor[J]. IEEE Transactions on Geoscience and Remote Sensing, 2024, 62: 5208512. doi: 10.1109/TGRS.2024.3379370.

[30] ZHANG Xiaoting, WANG Yinghua, LIU Jun, et al. Robust coarse-to-fine registration algorithm for optical and SAR images based on two novel multiscale and multidirectional features[J]. IEEE Transactions on Geoscience and Remote Sensing, 2024, 62: 5215126. doi: 10.1109/TGRS.2024.3417217.
