Low-altitude Target Dataset and Multifeature Recognition Method Based on Holographic Staring Radar


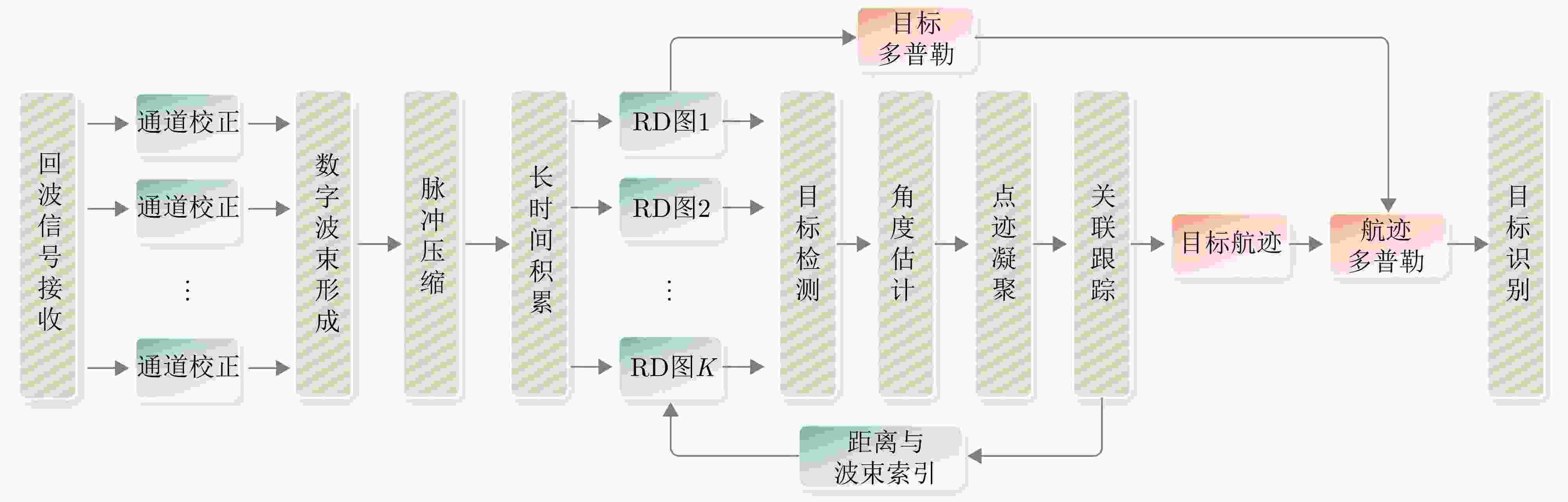

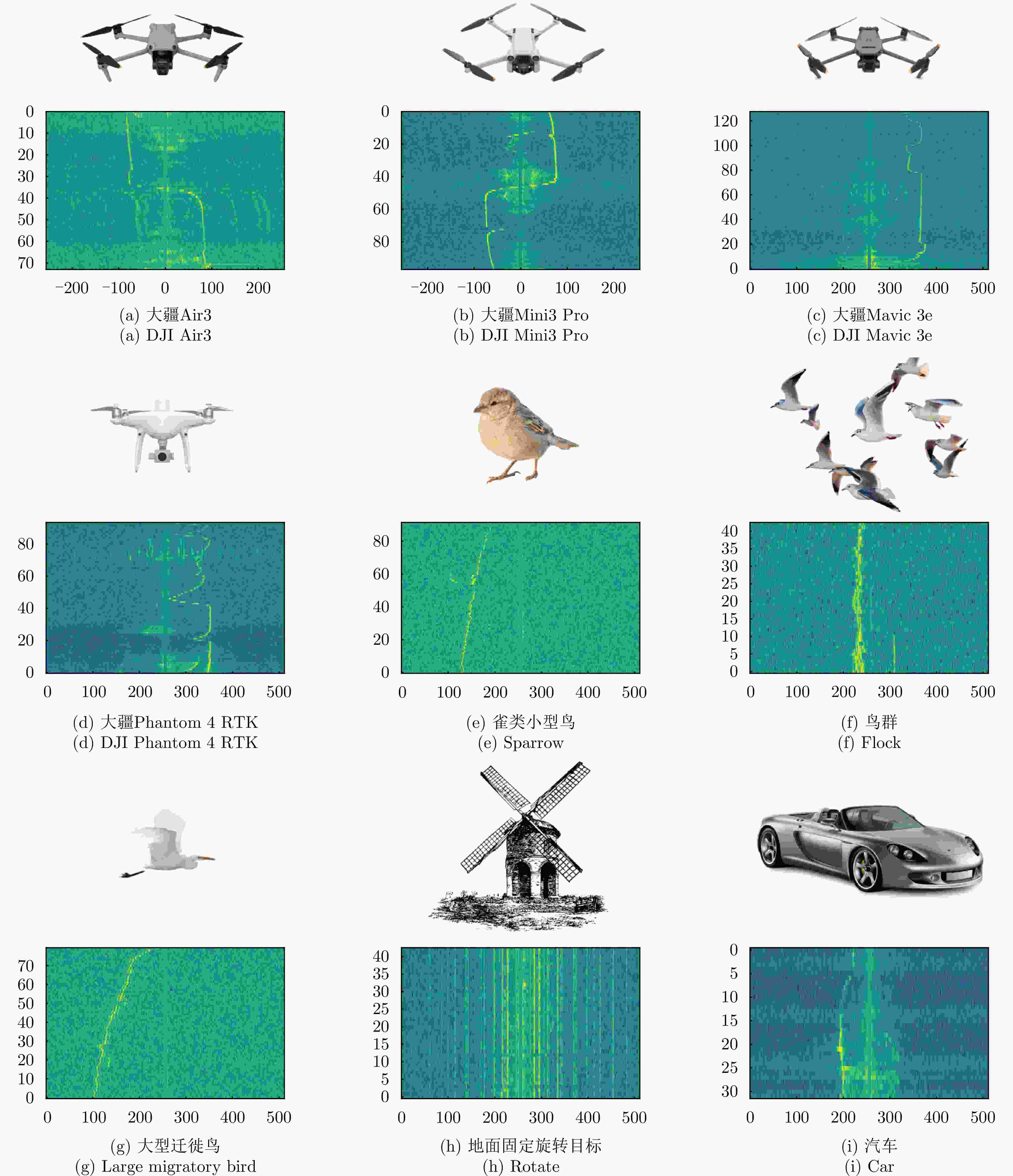

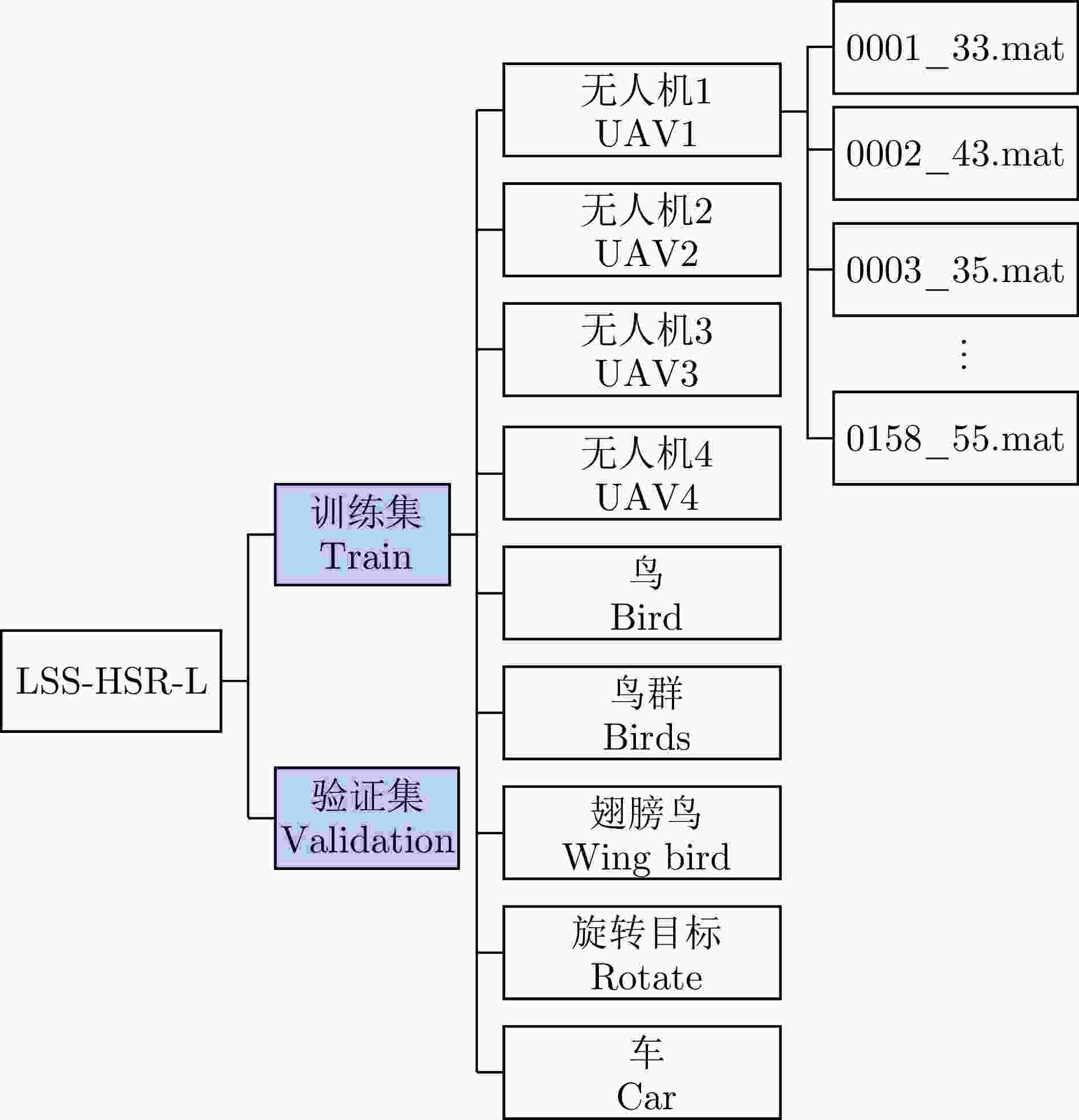

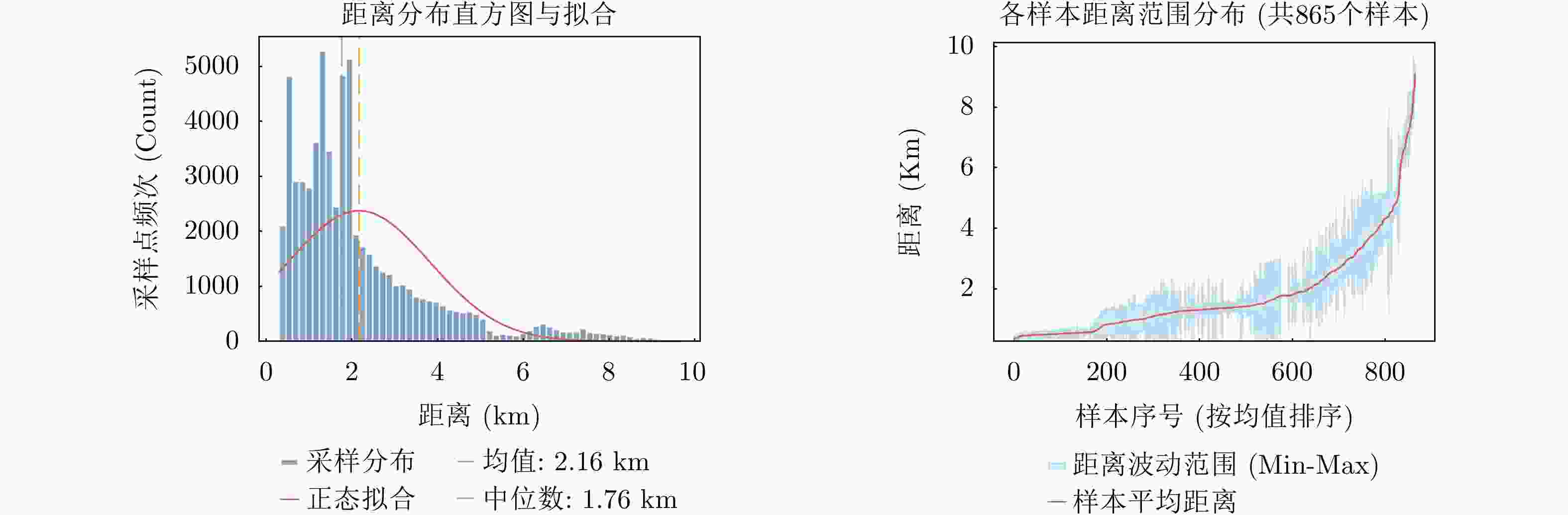

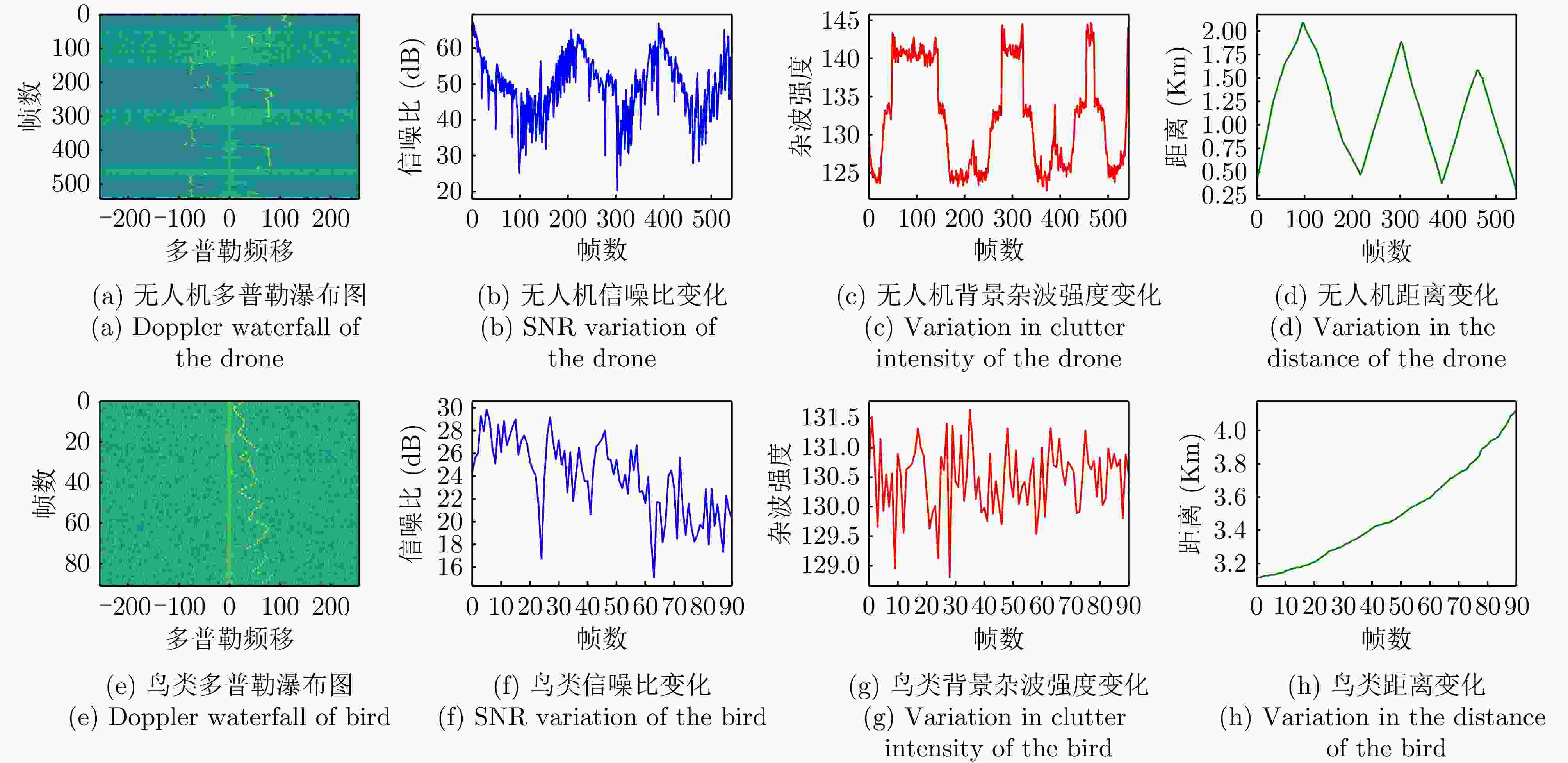

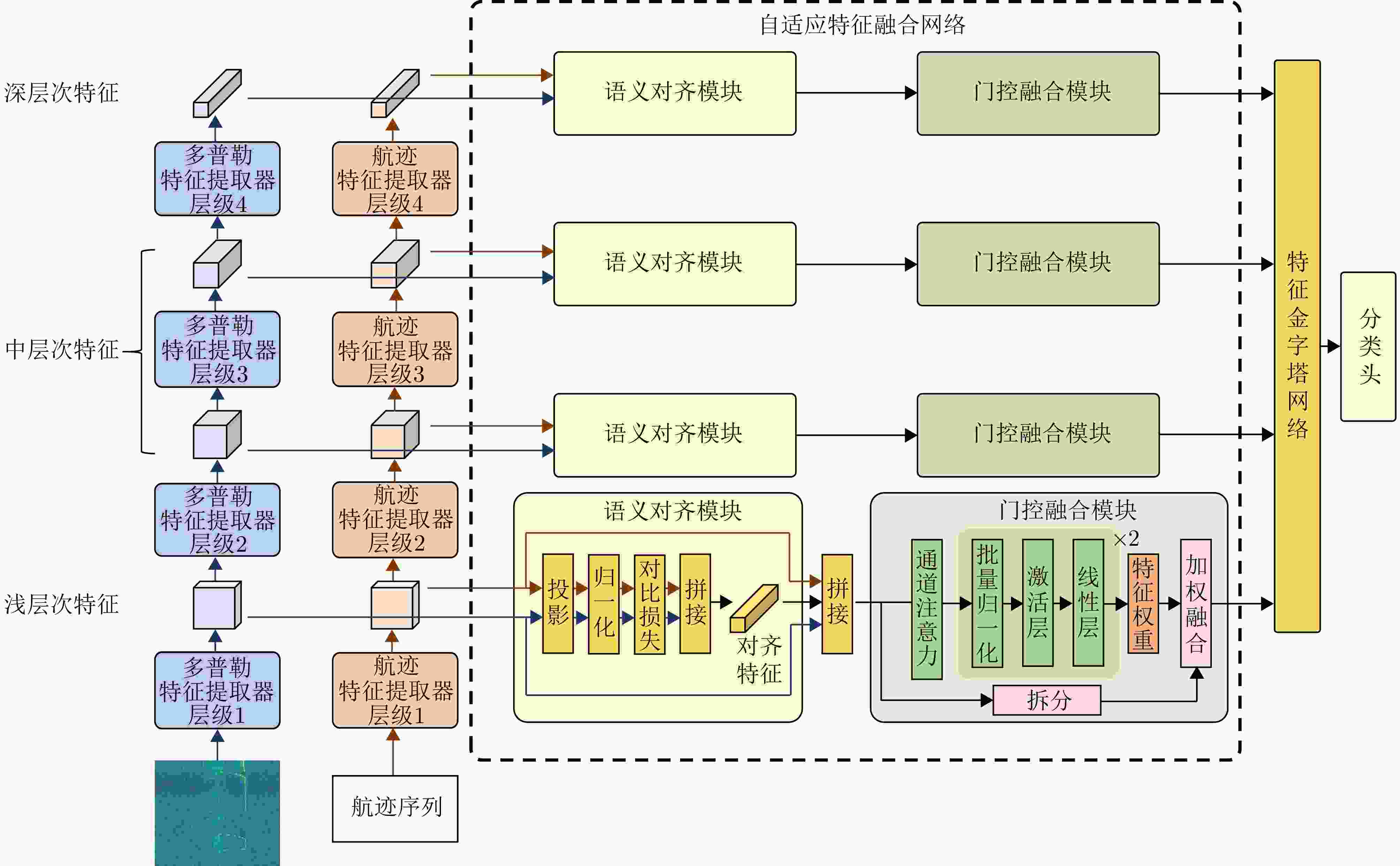

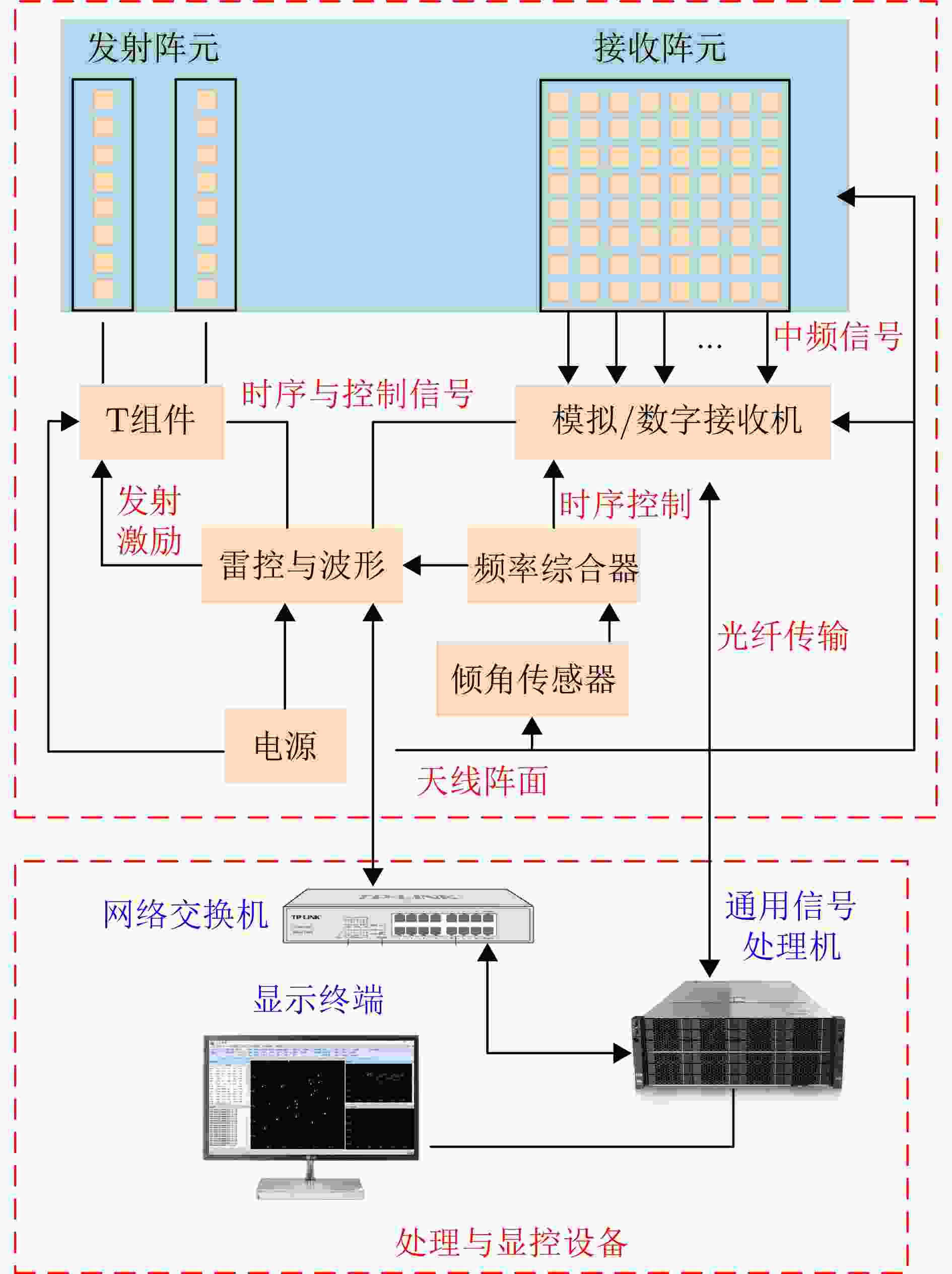

Abstract: Low-altitude targets pose a growing threat to the security of airspace around airports and similar sites, making accurate detection and recognition a pressing problem for radar systems. High-quality measured radar datasets are the foundation for advancing low-altitude target recognition. However, most existing public radar datasets for these targets consist of simulated or short-range data, which cannot faithfully reflect or verify radar target recognition performance in long-range scenarios. To address this limitation, this study builds a low-altitude target detection and recognition dataset based on Holographic Staring Radar (HSR), covering measured data collection and recognition validation for typical low-altitude targets in field environments. The dataset includes typical targets such as multirotor unmanned aerial vehicles, sparrows, and large migratory birds, along with typical motion scenarios such as hovering, circling, and radial flight. It also provides synchronized target Doppler waterfall plots and radar-measured track information (azimuth angle, elevation angle, radial velocity, and normalized signal-to-noise ratio), offering data support for exploring the intrinsic link between fine-grained target features and motion states. On this basis, a multimodal adaptive feature fusion network is used to extract and fuse the Doppler and kinematic features of different targets, demonstrating that different types of low-altitude targets can be distinguished effectively.
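To make the data layout described in the abstract more concrete, the following is a minimal sketch of how one labeled track could be held in memory: a sequence of per-frame kinematic measurements paired with one Doppler waterfall row per frame. The class names, field names, and units (`TrackFrame`, `TrackSample`, degrees, m/s) are hypothetical and chosen for illustration only; they are not the dataset's actual file format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TrackFrame:
    """Kinematic measurements for one radar frame (names and units are assumed)."""
    azimuth_deg: float
    elevation_deg: float
    radial_velocity_mps: float
    normalized_snr: float


@dataclass
class TrackSample:
    """One labeled track: per-frame kinematics plus a Doppler waterfall."""
    label: str
    frames: List[TrackFrame] = field(default_factory=list)
    # waterfall[i] holds the Doppler spectrum magnitudes for frame i.
    waterfall: List[List[float]] = field(default_factory=list)

    def is_frame_aligned(self) -> bool:
        """True when every track frame has exactly one waterfall row."""
        return len(self.frames) == len(self.waterfall)


# Toy values, invented purely to exercise the structure.
sample = TrackSample(
    label="UAV1",
    frames=[TrackFrame(45.0, 3.2, -4.1, 0.8), TrackFrame(45.1, 3.3, -4.0, 0.7)],
    waterfall=[[0.1, 0.9, 0.2], [0.2, 0.8, 0.1]],
)
print(sample.label, len(sample.frames), sample.is_frame_aligned())
```

Keeping both modalities in one object makes it easy to check alignment before a sample is passed to a fusion model.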


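Table 1. Basic parameters of the L-HSR developed by SYSU

| Parameter | Value |
| --- | --- |
| Frequency band | L band |
| Waveform | Linear frequency modulation (LFM) |
| Pulse width | 2–20 μs (switched according to target range) |
| Signal bandwidth | 2–16 MHz |
| PRF | ~5 kHz |
| Update rate | Better than 1 s |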
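The parameters in Table 1 imply a few range-domain quantities through standard pulsed-radar relations. The sketch below evaluates them; it assumes an idealized monostatic pulsed radar whose receiver is blanked during transmission, and it treats the approximate PRF of 5 kHz as exact. The function names are illustrative, not part of any radar software described in the source.

```python
C = 299_792_458.0  # speed of light in m/s


def range_resolution_m(bandwidth_hz: float) -> float:
    """Range resolution of a pulse-compressed waveform: c / (2B)."""
    return C / (2.0 * bandwidth_hz)


def blind_range_m(pulse_width_s: float) -> float:
    """Minimum range if the receiver is off while transmitting: c * tau / 2."""
    return C * pulse_width_s / 2.0


def unambiguous_range_m(prf_hz: float) -> float:
    """Maximum unambiguous range for a constant PRF: c / (2 * PRF)."""
    return C / (2.0 * prf_hz)


for bandwidth in (2e6, 16e6):
    print(f"B = {bandwidth / 1e6:.0f} MHz: resolution ~ {range_resolution_m(bandwidth):.1f} m")
for pulse_width in (2e-6, 20e-6):
    print(f"tau = {pulse_width * 1e6:.0f} us: blind range ~ {blind_range_m(pulse_width):.0f} m")
print(f"PRF = 5 kHz: unambiguous range ~ {unambiguous_range_m(5e3) / 1e3:.1f} km")
```

Under these assumptions the 2 μs pulse gives a blind range of about 300 m, which is consistent with the 0.3 km lower bound of the range coverage listed for LSS-HSR-L in Table 3.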

Table 2. Class distribution of LSS-HSR-L dataset

| Class | Training tracks | Validation tracks |
| --- | --- | --- |
| UAV1 | 116 | 25 |
| UAV2 | 120 | 25 |
| UAV3 | 117 | 24 |
| UAV4 | 121 | 25 |
| Bird | 158 | 30 |
| Birds (flock) | 114 | 24 |
| Wing bird | 119 | 24 |
| Rotate (rotating target) | 122 | 28 |
| Car | 218 | 45 |
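The class counts in Table 2 can be summarized with a few lines of arithmetic. The sketch below totals the tracks, computes the validation fraction, and derives inverse-frequency class weights. The weighting scheme is an illustrative assumption for handling the larger Car class; the source does not state that class weighting was used.

```python
# Track counts copied from Table 2.
train_tracks = {
    "UAV1": 116, "UAV2": 120, "UAV3": 117, "UAV4": 121, "Bird": 158,
    "Birds": 114, "WingBird": 119, "Rotate": 122, "Car": 218,
}
val_tracks = {
    "UAV1": 25, "UAV2": 25, "UAV3": 24, "UAV4": 25, "Bird": 30,
    "Birds": 24, "WingBird": 24, "Rotate": 28, "Car": 45,
}

total_train = sum(train_tracks.values())
total_val = sum(val_tracks.values())
val_fraction = total_val / (total_train + total_val)

# Inverse-frequency weights (assumed scheme): total / (n_classes * class_count).
n_classes = len(train_tracks)
class_weights = {
    name: total_train / (n_classes * count) for name, count in train_tracks.items()
}

print(f"train = {total_train}, val = {total_val}, val fraction = {val_fraction:.3f}")
for name, weight in class_weights.items():
    print(f"{name}: {weight:.3f}")
```

With this scheme the most frequent class (Car) receives the smallest weight and the least frequent class (Birds) the largest.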

Table 3. Comparison between LSS-HSR-L and typical datasets

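| Item | LSS-FMCWR-1.0 | LSS-PR-1.0 | LSS-HSR-L |
| --- | --- | --- | --- |
| Radar type | Multiband FMCW radar | Non-cooperative passive radar (external illuminator: DTMB) | Holographic staring radar |
| Frequency band | Ku: 23.7 GHz; L: 1.4–1.5 GHz | 470–806 MHz (DTMB) | L band |
| Bandwidth | Ku: 100/200/300/500 MHz; L: 100 MHz | 7.56 MHz | 4 MHz |
| Update period / frame rate | - | 1.0 s | 0.8 s |
| Range coverage | 5–30 m | ~ km scale | 0.3–10.0 km |
| Target classes | 6 (various UAVs) | 4 (UAV / helicopter / speedboat / passenger ship) | 9 (UAVs / birds / vehicles, etc.) |
| Data modality | Time-frequency maps | Range-Doppler spectra + tracks | Doppler waterfall plots + tracks |
| Modality alignment | - | Yes | Yes (strict frame-level alignment) |
| Sampling frequency | 500 kHz | - | 5 MHz |
| Annotation method | Controlled experimental collection | ADS-B combined with manual verification | Flight-control logs, double-blind manual cross-validation, spot checks against optical images |
| Key advantage | Multiband fused features | Passive detection, silent sensing | Long range, staring observation |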

Table 4. Hyperparameters for dataset validity verification experiments

| Parameter | Value |
| --- | --- |
| Optimizer | AdamW |
| Loss function | Cross-entropy loss |
| Learning rate scheduler | StepLR |
| Initial learning rate | 1×10⁻⁴ |
| Batch size | 64 |
| Training epochs | 100 or 30 |
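A step learning-rate schedule multiplies the learning rate by a fixed factor every fixed number of epochs. The sketch below reproduces that closed form in plain Python for the initial learning rate of 1×10⁻⁴ and a 100-epoch run. The step size of 30 epochs and decay factor of 0.1 are assumptions chosen for illustration; the source lists StepLR but does not give these two values.

```python
def step_lr(initial_lr: float, epoch: int, step_size: int, gamma: float) -> float:
    """Closed form of a step schedule: lr = initial_lr * gamma ** floor(epoch / step_size)."""
    return initial_lr * gamma ** (epoch // step_size)


INITIAL_LR = 1e-4  # from Table 4
NUM_EPOCHS = 100   # from Table 4 (the longer of the two settings)
STEP_SIZE = 30     # assumption: not given in the source
GAMMA = 0.1        # assumption: not given in the source

schedule = [step_lr(INITIAL_LR, epoch, STEP_SIZE, GAMMA) for epoch in range(NUM_EPOCHS)]

for epoch in (0, 29, 30, 60, 99):
    print(f"epoch {epoch:3d}: lr = {schedule[epoch]:.1e}")
```

In a PyTorch training loop the same behavior would typically come from `torch.optim.lr_scheduler.StepLR` attached to an `AdamW` optimizer, with `scheduler.step()` called once per epoch.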

Table 5. Recall and ACC of each visual model and temporal model on the LSS-HSR-L dataset (%)

| Algorithm | RC-UAV1 | RC-UAV2 | RC-UAV3 | RC-UAV4 | RC-Bird | RC-Birds | RC-WingBird | RC-Rotate | RC-Car | ACC |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| **Visual models (timm)** | | | | | | | | | | |
| ConvNet[25] | 69.6 | 76.2 | 64.1 | 65.4 | 13.8 | 57.1 | 30.5 | 96.0 | 59.5 | 59.1 |
| CSPNet[26] | 86.2 | 84.6 | 86.1 | 85.4 | 97.4 | 88.4 | 83.4 | 99.1 | 91.9 | 89.2 |
| DenseNet[27] | 86.6 | 87.3 | 88.3 | 78.7 | 96.1 | 93.3 | 79.8 | 98.3 | 96.2 | 89.4 |
| EfficientNetV1[28] | 63.2 | 74.8 | 68.8 | 75.7 | 86.1 | 67.7 | 49.3 | 90.0 | 70.6 | 71.8 |
| EfficientNetV2[29] | 75.7 | 86.7 | 83.4 | 82.5 | 96.8 | 76.8 | 64.8 | 99.3 | 74.5 | 82.3 |
| GhostNet[30] | 79.2 | 86.3 | 80.5 | 85.5 | 96.6 | 81.5 | 62.5 | 99.0 | 83.3 | 83.8 |
| HRNet[31] | 84.0 | 89.4 | 85.4 | 84.4 | 94.9 | 77.5 | 73.3 | 99.6 | 80.4 | 85.4 |
| PP-LCNet[32] | 55.3 | 66.4 | 67.6 | 69.6 | 88.2 | 41.3 | 45.8 | 88.8 | 56.7 | 64.4 |
| MobileNetV3[33] | 78.6 | 80.7 | 81.2 | 74.6 | 94.1 | 80.5 | 56.3 | 98.2 | 74.6 | 79.9 |
| ResNet[34] | 79.2 | 82.9 | 80.8 | 81.3 | 94.7 | 83.9 | 84.5 | 99.4 | 70.9 | 84.2 |
| Res2Net[35] | 85.0 | 87.3 | 85.7 | 85.1 | 96.8 | 93.4 | 88.7 | 99.0 | 86.8 | 89.8 |
| ResNet18[36] | 79.1 | 79.2 | 80.2 | 84.2 | 97.7 | 80.4 | 90.4 | 98.4 | 88.4 | 86.4 |
| ResNet34[36] | 81.5 | 83.3 | 79.6 | 85.8 | 96.6 | 80.3 | 88.6 | 99.0 | 90.8 | 87.3 |
| ResNet50[36] | 79.3 | 86.0 | 82.9 | 86.1 | 95.2 | 86.8 | 80.4 | 99.2 | 89.7 | 87.3 |
| TinyNet[37] | 73.1 | 80.5 | 79.6 | 80.4 | 95.8 | 70.8 | 46.9 | 96.2 | 71.2 | 77.2 |
| VGG[38] | 88.3 | 87.6 | 80.0 | 87.3 | 84.1 | 48.1 | 37.8 | 97.9 | 84.6 | 77.3 |
| **Time-series models (tsai)** | | | | | | | | | | |
| FCN[39] | 76.3 | 85.4 | 79.3 | 63.0 | 79.5 | 37.9 | 31.8 | 98.6 | 94.5 | 71.8 |
| gMLP[40] | 77.0 | 86.2 | 80.9 | 60.5 | 31.3 | 26.8 | 95.4 | 99.1 | 86.4 | 71.5 |
| GRU_FCN[41] | 82.8 | 87.9 | 84.5 | 65.7 | 85.4 | 32.5 | 37.8 | 98.9 | 93.0 | 74.3 |
| InceptionTime[42] | 80.5 | 87.4 | 84.7 | 79.9 | 76.4 | 32.5 | 42.7 | 99.3 | 95.2 | 75.4 |
| LSTM-FCN[43] | 81.4 | 87.1 | 83.9 | 69.7 | 72.8 | 38.6 | 42.7 | 98.9 | 94.3 | 74.4 |
| MiniRocket[44] | 81.5 | 80.1 | 77.1 | 80.7 | 84.1 | 46.9 | 31.1 | 97.1 | 88.9 | 74.2 |
| OmniScale[45] | 79.7 | 88.3 | 85.8 | 76.9 | 89.3 | 34.6 | 34.9 | 99.8 | 92.9 | 75.8 |
| PatchTST[46] | 16.9 | 47.1 | 50.8 | 29.0 | 47.8 | 27.0 | 60.6 | 83.9 | 96.9 | 51.1 |
| ResCNN[47] | 85.6 | 87.7 | 80.4 | 72.8 | 89.8 | 34.1 | 41.4 | 99.5 | 92.3 | 76.0 |
| ResNet[39] | 79.8 | 87.8 | 86.2 | 73.1 | 85.3 | 41.6 | 37.5 | 98.9 | 93.0 | 76.0 |
| Rocket[48] | 69.2 | 29.0 | 48.5 | 58.9 | 38.5 | 19.9 | 63.6 | 93.3 | 84.4 | 56.1 |
| TSPerceiver[49] | 62.7 | 73.4 | 63.7 | 66.1 | 70.2 | 20.6 | 36.0 | 98.5 | 94.6 | 65.1 |
| TSSequencer[50] | 63.1 | 74.3 | 69.6 | 64.0 | 70.1 | 22.9 | 40.8 | 99.6 | 92.2 | 66.3 |
| XceptionTime[51] | 86.1 | 90.6 | 84.1 | 79.8 | 86.8 | 46.1 | 44.8 | 99.0 | 95.0 | 79.1 |
| XCM[52] | 82.2 | 84.6 | 64.7 | 39.4 | 75.5 | 23.6 | 46.6 | 99.8 | 91.0 | 67.5 |

Note: bold values in the original table indicate the best result in each column.
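The RC-* columns report per-class recall and the ACC column reports overall accuracy. As a minimal sketch of how such figures are conventionally derived from a confusion matrix, the code below uses a three-class toy matrix with made-up counts. The standard definitions are assumed here; the source does not spell out whether its metrics are computed per track or per sample.

```python
from typing import Dict, List


def per_class_recall(confusion: List[List[int]], labels: List[str]) -> Dict[str, float]:
    """Recall (%) per class: correct predictions on the diagonal / row total.

    confusion[i][j] counts samples of true class i predicted as class j.
    """
    recalls = {}
    for i, name in enumerate(labels):
        row_total = sum(confusion[i])
        recalls[name] = 100.0 * confusion[i][i] / row_total if row_total else 0.0
    return recalls


def overall_accuracy(confusion: List[List[int]]) -> float:
    """Overall accuracy (%): sum of the diagonal / total number of samples."""
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    total = sum(sum(row) for row in confusion)
    return 100.0 * correct / total


# Toy confusion matrix with invented counts (rows: true class, columns: predicted).
labels = ["UAV", "Bird", "Car"]
confusion = [
    [8, 2, 0],
    [1, 9, 0],
    [0, 0, 10],
]

recalls = per_class_recall(confusion, labels)
accuracy = overall_accuracy(confusion)
print(recalls, accuracy)
```

Because overall accuracy pools all samples, a model can reach a high ACC while one class still has low recall, which is why the table reports both.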

Table 6. Comparison of Recall and ACC for each target under different fusion strategies (%)

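| Algorithm | RC-UAV1 | RC-UAV2 | RC-UAV3 | RC-UAV4 | RC-Bird | RC-Birds | RC-WingBird | RC-Rotate | RC-Car | ACC |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Shallow-level fusion | 77.7 | 86.6 | 72.9 | 73.1 | 93.6 | 68.5 | 89.5 | 97.7 | 96.4 | 84.0 |
| Mid-level fusion | 91.7 | 86.5 | 80.4 | 76.8 | 92.8 | 71.5 | 88.4 | 99.7 | 96.1 | 87.1 |
| Deep-level fusion | 93.9 | 86.3 | 84.7 | 86.8 | 91.0 | 75.2 | 93.1 | 99.7 | 96.8 | 89.7 |
| Multimodal adaptive feature fusion network | 93.0 | 83.6 | 92.1 | 88.8 | 92.4 | 86.4 | 85.6 | 99.6 | 95.2 | 90.7 |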

Table 7. Ablation experiments on the impact of different feature-level combinations on recognition performance

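| Experiment | Level 1 | Level 2 | Level 3 | Level 4 | Overall accuracy (%) | Performance drop (%) |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | √ | √ | √ | √ | 90.74 | - |
| 2 | √ | √ | √ | - | 89.86 | 0.88 |
| 3 | √ | √ | - | √ | 90.45 | 0.29 |
| 4 | √ | - | √ | √ | 90.03 | 0.71 |
| 5 | - | √ | √ | √ | 89.79 | 0.95 |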
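The performance-drop column in Table 7 is the difference between the full-model accuracy and the accuracy with one feature level removed. The short sketch below recomputes it from the reported accuracies and ranks the levels by how much their removal costs; the dictionary keys are descriptive labels introduced here, not names from the source.

```python
# Overall accuracy (%) with all four feature levels (experiment 1 in Table 7).
BASELINE_ACC = 90.74

# Overall accuracy (%) when a single level is removed (experiments 2 to 5).
ablated_acc = {
    "without level 4": 89.86,
    "without level 3": 90.45,
    "without level 2": 90.03,
    "without level 1": 89.79,
}

# Drop relative to the full model, rounded to the table's two decimals.
drops = {name: round(BASELINE_ACC - acc, 2) for name, acc in ablated_acc.items()}

# Sort from the most costly removal to the least costly one.
ranking = sorted(drops, key=drops.get, reverse=True)

for name in ranking:
    print(f"{name}: drop = {drops[name]:.2f} points")
```

The recomputed drops match the table, and removing level 1 costs the most accuracy while removing level 3 costs the least.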

Table 8. Recognition performance of HSR in real deployment (%)

| Category | Correct recognition rate | False alarm rate |
| --- | --- | --- |
| Birds | 92.6 | 9.1 |
| UAVs | 100 | 13.3 |
| Others | 75 | 0 |

[1] WANG Dong, ZHAO Jie, LIU Yang, et al. Multimodal anti-UAV detection system and technology[J]. Bulletin of National Natural Science Foundation of China, 2026, 40(1): 73–84. doi: 10.3724/BNSFC-2025-0024.

[2] TANG Jie, ZHANG Mingming, ZHANG Dongfang, et al. Research and application of low altitude target photoelectric detection system in civil aviation airport[J]. Laser & Infrared, 2025, 55(11): 1776–1781. doi: 10.3969/j.issn.1001-5078.2025.11.018.

[3] NILSSON C, LA SORTE F A, DOKTER A, et al. Bird strikes at commercial airports explained by citizen science and weather radar data[J]. Journal of Applied Ecology, 2021, 58(10): 2029–2039. doi: 10.1111/1365-2664.13971.

[4] CHEN Xiaolong, CHEN Weishi, CHEN Siwei, et al. Radar low altitude target detection and prevention: Progress and prospects[J]. Bulletin of National Natural Science Foundation of China, 2026, 40(1): 48–64. doi: 10.3724/BNSFC-2025.07.25.0005.

[5] VARNOUSFADERANI E S and SHIHAB S A M. Bird strikes in aviation: A systematic review for informing future directions[J]. Aerospace Science and Technology, 2025, 163: 110303. doi: 10.1016/j.ast.2025.110303.

[6] XU Daoming and ZHANG Hongwei. Overview of radar LSS target detection technology[J]. Modern Defence Technology, 2018, 46(1): 148–155. doi: 10.3969/j.issn.1009-086x.2018.01.024.

[7] QU Xutao, ZHUANG Dongye, and XIE Haibin. Detection methods for low-slow-small (LSS) UAV[J]. Command Control & Simulation, 2020, 42(2): 128–135. doi: 10.3969/j.issn.1673-3819.2020.02.024.

[8] CHEN Xiaolong, CHEN Weishi, RAO Yunhua, et al. Progress and prospects of radar target detection and recognition technology for flying birds and unmanned aerial vehicles[J]. Journal of Radars, 2020, 9(5): 803–827. doi: 10.12000/JR20068.

[9] GUO Rui, ZHANG Yue, TIAN Biao, et al. Review of the technology, development and applications of holographic staring radar[J]. Journal of Radars, 2023, 12(2): 389–411. doi: 10.12000/JR22153.

[10] GRIFFITHS D, JAHANGIR M, KANNANTHARA J, et al. Fully digital, urban networked staring radar: Simulation and experimentation[J]. IET Radar, Sonar & Navigation, 2024, 18(5): 657–673. doi: 10.1049/rsn2.12499.

[11] ZHOU Hongping, LI Rui, LI Liulin, et al. Micromotions parameter extraction of birds and rotary-wing unmanned aerial vehicles based on vortex radar[J]. Journal of Radars, in press. doi: 10.12000/JR25164.

[12] BENNETT C, JAHANGIR M, FIORANELLI F, et al. Use of symmetrical peak extraction in drone micro-Doppler classification for staring radar[C]. 2020 IEEE Radar Conference (RadarConf20), Florence, Italy, 2020: 1–6. doi: 10.1109/RadarConf2043947.2020.9266702.

[13] ZHANG Jun and TIAN Xilan. UAV swarm identification based on radar micro-Doppler features[J]. Radar Science and Technology, 2025, 23(6): 692–699. doi: 10.3969/j.issn.1672-2337.2025.06.011.

[14] KIM K, ÜNEY M, and MULGREW B. Coherent track-before-detect with micro-Doppler signature estimation in array radars[J]. IET Radar, Sonar & Navigation, 2020, 14(4): 572–585. doi: 10.1049/iet-rsn.2019.0319.

[15] LIU Jia, XU Qunyu, and CHEN Weishi. Classification of bird and drone targets based on motion characteristics and random forest model using surveillance radar data[J]. IEEE Access, 2021, 9: 160135–160144. doi: 10.1109/ACCESS.2021.3130231.

[16] DAI Ting, XU Shiyou, TIAN Biao, et al. Extraction of micro-Doppler feature using LMD algorithm combined supplement feature for UAVs and birds classification[J]. Remote Sensing, 2022, 14(9): 2196. doi: 10.3390/rs14092196.

[17] TANG Shiyao. Low-altitude target recognition based on fusion of point tracks and MTD-range images[J]. Scientific and Technological Innovation, 2026(4): 70–74. doi: 10.3969/j.issn.2096-4390.2026.04.019.

[18] JIANG Wen, LIU Zhen, WANG Yanping, et al. Realizing small UAV targets recognition via multi-dimensional feature fusion of high-resolution radar[J]. Remote Sensing, 2024, 16(15): 2710. doi: 10.3390/rs16152710.

[19] CHEN Xiaolong, YUAN Wang, DU Xiaolin, et al. Multiband FMCW radar LSS-target detection dataset (LSS-FMCWR-1.0) and high-resolution micromotion feature extraction method[J]. Journal of Radars, 2024, 13(3): 539–553. doi: 10.12000/JR23142.

[20] CHEN Xiaolong, RAO Guilin, GUAN Jian, et al. Passive radar low slow small detection dataset (LSS-PR-1.0) and multi-domain feature extraction and analysis methods[J]. Journal of Radars, 2025, 14(2): 249–268. doi: 10.12000/JR24145.

[21] CHEN Xiaolong, YUAN Wang, DU Xiaolin, et al. Multi-band multi-angle FMCW radar low-slow-small target detection dataset (LSS-FMCWR-2.0) and feature fusion classification methods[J]. Journal of Radars, 2025, 14(5): 1276–1293. doi: 10.12000/JR25004.

[22] LIU Jia, XU Qunyu, and CHEN Weishi. Motion feature extraction and ensembled classification method based on radar tracks for drones[J]. Systems Engineering and Electronics, 2023, 45(10): 3122–3131. doi: 10.12305/j.issn.1001-506X.2023.10.16.

[23] CHEN Weishi, LIU Jia, CHEN Xiaolong, et al. Non-cooperative UAV target recognition in low-altitude airspace based on motion model[J]. Journal of Beijing University of Aeronautics and Astronautics, 2019, 45(4): 687–694. doi: 10.13700/j.bh.1001-5965.2018.0447.

[24] LIU Qi, GUO Rui, WANG Jiajia, et al. A high-accuracy beamspace DOA estimation method for low-elevation angle targets[J]. Journal of Radars, in press. doi: 10.12000/JR25173.

[25] LIU Zhuang, MAO Hanzi, WU Chaoyuan, et al. A ConvNet for the 2020s[C]. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, USA, 2022: 11966–11976. doi: 10.1109/CVPR52688.2022.01167.

[26] WANG C Y, LIAO H Y M, WU Y H, et al. CSPNet: A new backbone that can enhance learning capability of CNN[C]. 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, USA, 2020: 1571–1580. doi: 10.1109/CVPRW50498.2020.00203.

[27] HUANG Gao, LIU Zhuang, VAN DER MAATEN L, et al. Densely connected convolutional networks[C]. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, USA, 2017: 2261–2269. doi: 10.1109/CVPR.2017.243.

[28] TAN Mingxing and LE Q V. EfficientNet: Rethinking model scaling for convolutional neural networks[C]. The 36th International Conference on Machine Learning, Long Beach, USA, 2019: 6105–6114.

[29] TAN Mingxing and LE Q V. EfficientNetV2: Smaller models and faster training[C]. The 38th International Conference on Machine Learning, Online, 2021: 10096–10106.

[30] HAN Kai, WANG Yunhe, TIAN Qi, et al. GhostNet: More features from cheap operations[C]. 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, USA, 2020: 1577–1586. doi: 10.1109/CVPR42600.2020.00165.

[31] WANG Jingdong, SUN Ke, CHENG Tianheng, et al. Deep high-resolution representation learning for visual recognition[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2021, 43(10): 3349–3364. doi: 10.1109/TPAMI.2020.2983686.

[32] CUI Cheng, GAO Tingquan, WEI Shengyu, et al. PP-LCNet: A lightweight CPU convolutional neural network[J]. arXiv preprint arXiv: 2109.15099, 2021. doi: 10.48550/arXiv.2109.15099.

[33] HOWARD A, SANDLER M, CHEN Bo, et al. Searching for MobileNetV3[C]. 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea (South), 2019: 1314–1324. doi: 10.1109/ICCV.2019.00140.

[34] RADOSAVOVIC I, KOSARAJU R P, GIRSHICK R, et al. Designing network design spaces[C]. 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, USA, 2020: 10425–10433. doi: 10.1109/CVPR42600.2020.01044.

[35] GAO Shanghua, CHENG Mingming, ZHAO Kai, et al. Res2Net: A new multi-scale backbone architecture[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2021, 43(2): 652–662. doi: 10.1109/TPAMI.2019.2938758.

[36] HE Kaiming, ZHANG Xiangyu, REN Shaoqing, et al. Deep residual learning for image recognition[C]. 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, USA, 2016: 770–778. doi: 10.1109/CVPR.2016.90.

[37] HAN Kai, WANG Yunhe, ZHANG Qiulin, et al. Model Rubik’s cube: Twisting resolution, depth and width for TinyNets[C]. The 34th International Conference on Neural Information Processing Systems, Vancouver, Canada, 2020: 1623.

[38] SIMONYAN K and ZISSERMAN A. Very deep convolutional networks for large-scale image recognition[C]. The 3rd International Conference on Learning Representations, San Diego, USA, 2015: 1–14.

[39] WANG Zhiguang, YAN Weizhong, and OATES T. Time series classification from scratch with deep neural networks: A strong baseline[C]. 2017 International Joint Conference on Neural Networks (IJCNN), Anchorage, USA, 2017: 1578–1585. doi: 10.1109/IJCNN.2017.7966039.

[40] LIU Hanxiao, DAI Zihang, SO D R, et al. Pay attention to MLPs[C]. The 35th International Conference on Neural Information Processing Systems, Online, 2021: 9204–9215.

[41] ELSAYED N, MAIDA A S, and BAYOUMI M. Deep gated recurrent and convolutional network hybrid model for univariate time series classification[J]. International Journal of Advanced Computer Science and Applications, 2019, 10(5): 654–664. doi: 10.14569/IJACSA.2019.0100582.

[42] ISMAIL FAWAZ H, LUCAS B, FORESTIER G, et al. InceptionTime: Finding AlexNet for time series classification[J]. Data Mining and Knowledge Discovery, 2020, 34(6): 1936–1962. doi: 10.1007/s10618-020-00710-y.

[43] KARIM F, MAJUMDAR S, DARABI H, et al. LSTM fully convolutional networks for time series classification[J]. IEEE Access, 2018, 6: 1662–1669. doi: 10.1109/ACCESS.2017.2779939.

[44] TAN Changwei, DEMPSTER A, BERGMEIR C, et al. MultiRocket: Multiple pooling operators and transformations for fast and effective time series classification[J]. Data Mining and Knowledge Discovery, 2022, 36(5): 1623–1646. doi: 10.1007/s10618-022-00844-1.

[45] TANG Wensi, LONG Guodong, LIU Lu, et al. Omni-scale CNNs: A simple and effective kernel size configuration for time series classification[C]. The Tenth International Conference on Learning Representations, Online, 2022: 1–17.

[46] NIE Yuqi, NGUYEN N H, SINTHONG P, et al. A time series is worth 64 words: Long-term forecasting with transformers[C]. The Eleventh International Conference on Learning Representations, Kigali, Rwanda, 2023: 1–24.

[47] ZOU Xiaowu, WANG Zidong, LI Qi, et al. Integration of residual network and convolutional neural network along with various activation functions and global pooling for time series classification[J]. Neurocomputing, 2019, 367: 39–45. doi: 10.1016/j.neucom.2019.08.023.

[48] DEMPSTER A, PETITJEAN F, and WEBB G I. ROCKET: Exceptionally fast and accurate time series classification using random convolutional kernels[J]. Data Mining and Knowledge Discovery, 2020, 34(5): 1454–1495. doi: 10.1007/s10618-020-00701-z.

[49] JAEGLE A, BORGEAUD S, ALAYRAC J B, et al. Perceiver IO: A general architecture for structured inputs & outputs[C]. The Tenth International Conference on Learning Representations, Online, 2022: 1–29.

[50] TATSUNAMI Y and TAKI M. Sequencer: Deep LSTM for image classification[C]. The 36th International Conference on Neural Information Processing Systems, New Orleans, USA, 2022: 2768.

[51] RAHIMIAN E, ZABIHI S, ATASHZAR S F, et al. XceptionTime: A novel deep architecture based on depthwise separable convolutions for hand gesture classification[J]. arXiv preprint arXiv: 1911.03803, 2019. doi: 10.48550/arXiv.1911.03803.

[52] FAUVEL K, LIN Tao, MASSON V, et al. XCM: An explainable convolutional neural network for multivariate time series classification[J]. Mathematics, 2021, 9(23): 3137. doi: 10.3390/math9233137.

[53] NARAYANAN R M, TSANG B, and BHARADWAJ R. Classification and discrimination of birds and small drones using radar micro-Doppler spectrogram images[J]. Signals, 2023, 4(2): 337–358. doi: 10.3390/signals4020018.
