[1] DING Jinshan, WEN Liwu, ZHONG Chao, et al. Video SAR moving target indication using deep neural network[J]. IEEE Transactions on Geoscience and Remote Sensing, 2020, 58(10): 7194–7204. doi: 10.1109/TGRS.2020.2980419.

[2] DING Jinshan, ZHONG Chao, WEN Liwu, et al. Joint detection of moving target in video synthetic aperture radar[J]. Journal of Radars, 2022, 11(3): 313–323. doi: 10.12000/JR22036. (in Chinese)

| [3] |

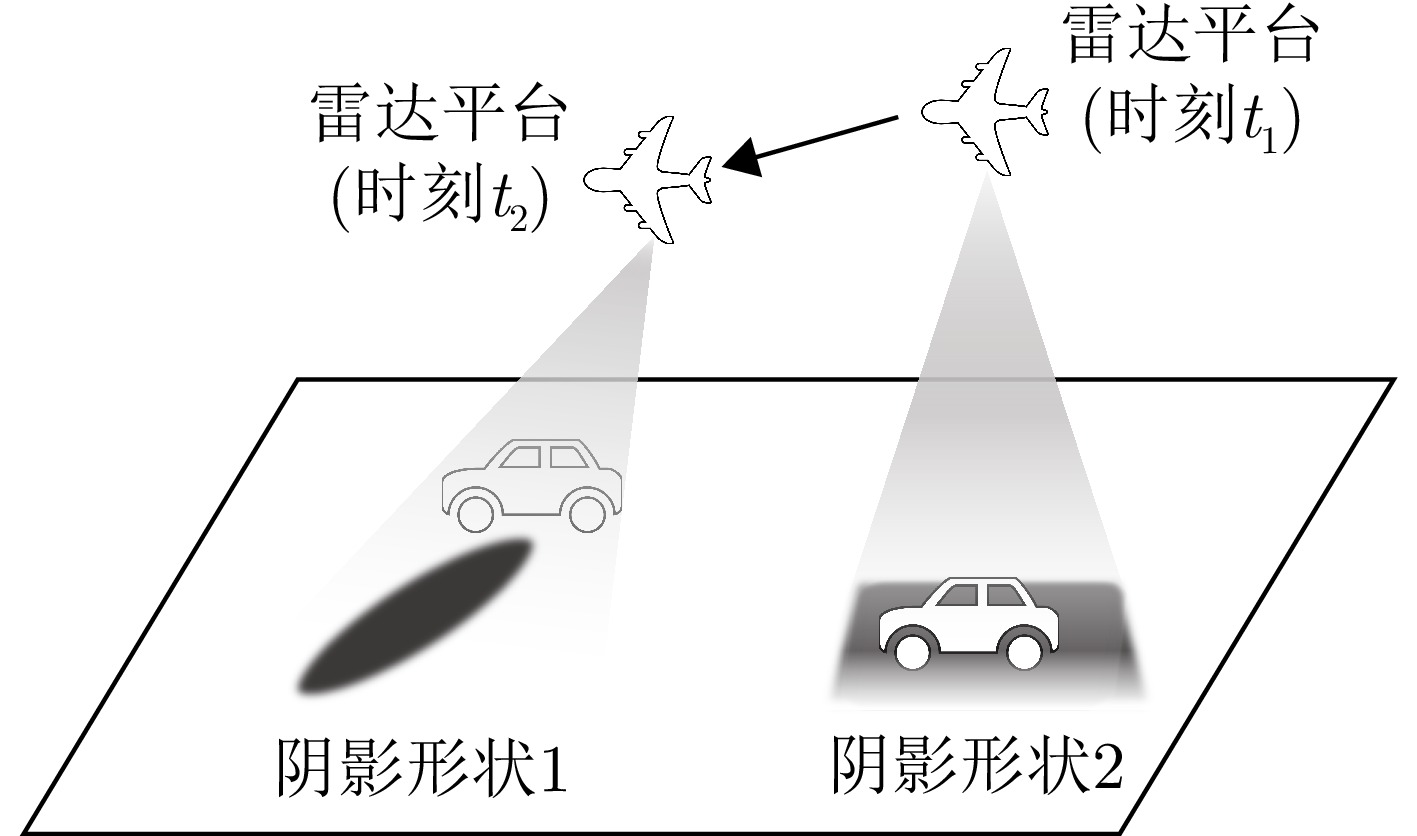

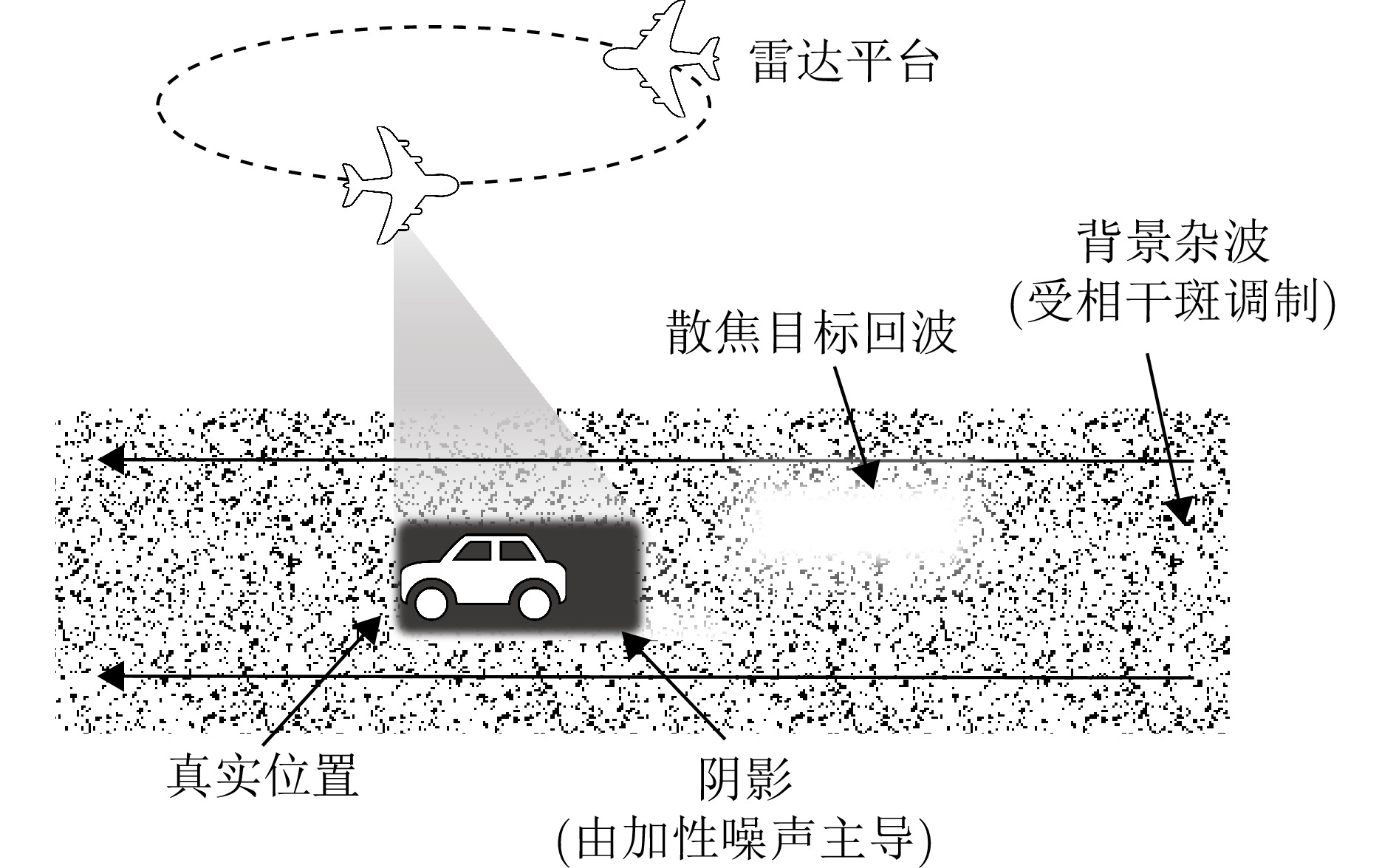

RAYNAL A M, BICKEL D L, and DOERRY A W. Stationary and moving target shadow characteristics in synthetic aperture radar[C]. Radar Sensor Technology XVIII, Baltimore, USA, 2014: 90771B. doi: 10.1117/12.2049729. |

[4] ENDER J H G, GIERULL C H, and CERUTTI-MAORI D. Improved space-based moving target indication via alternate transmission and receiver switching[J]. IEEE Transactions on Geoscience and Remote Sensing, 2008, 46(12): 3960–3974. doi: 10.1109/TGRS.2008.2002266.

[5] TIAN Xiaoqing, LIU Jing, MALLICK M, et al. Simultaneous detection and tracking of moving-target shadows in ViSAR imagery[J]. IEEE Transactions on Geoscience and Remote Sensing, 2021, 59(2): 1182–1199. doi: 10.1109/TGRS.2020.2998782.

[6] ARGENTI F, LAPINI A, BIANCHI T, et al. A tutorial on speckle reduction in synthetic aperture radar images[J]. IEEE Geoscience and Remote Sensing Magazine, 2013, 1(3): 6–35. doi: 10.1109/MGRS.2013.2277512.

[7] SOHN K, ZHANG Zizhao, LI Chunliang, et al. A simple semi-supervised learning framework for object detection[OL]. arXiv preprint arXiv: 2005.04757, 2020. doi: 10.48550/arXiv.2005.04757.

[8] LIU Yencheng, MA C Y, HE Zijian, et al. Unbiased teacher for semi-supervised object detection[C]. 9th International Conference on Learning Representations (ICLR), Virtual, Austria, May 2021. doi: 10.48550/arXiv.2102.09480.

[9] WOO S, PARK J, LEE J Y, et al. CBAM: Convolutional block attention module[C]. 15th European Conference on Computer Vision – ECCV 2018, Munich, Germany, 2018: 3–19. doi: 10.1007/978-3-030-01234-2_1.

[10] QIN Zequn, ZHANG Pengyi, WU Fei, et al. FcaNet: Frequency channel attention networks[C]. 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, Canada, 2021: 763–772. doi: 10.1109/ICCV48922.2021.00082.

[11] ZHANG Peng, CHEN Lifu, LI Zhenhong, et al. Automatic extraction of water and shadow from SAR images based on a multi-resolution dense encoder and decoder network[J]. Sensors, 2019, 19(16): 3576. doi: 10.3390/s19163576.

[12] LI Qiupeng and KONG Yingying. An improved SAR image semantic segmentation Deeplabv3+ network based on the feature post-processing module[J]. Remote Sensing, 2023, 15(8): 2153. doi: 10.3390/rs15082153.

[13] WANG Wei, ZHOU Yuanyuan, XIE Zhikun, et al. Moving target shadow detection using transformer in video SAR[C]. IGARSS 2022 - 2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 2022: 2614–2617. doi: 10.1109/IGARSS46834.2022.9884510.

[14] BAO Jinyu, ZHANG Xiaoling, ZHANG Tianwen, et al. ShadowDeNet: A moving target shadow detection network for video SAR[J]. Remote Sensing, 2022, 14(2): 320. doi: 10.3390/rs14020320.

| [15] |

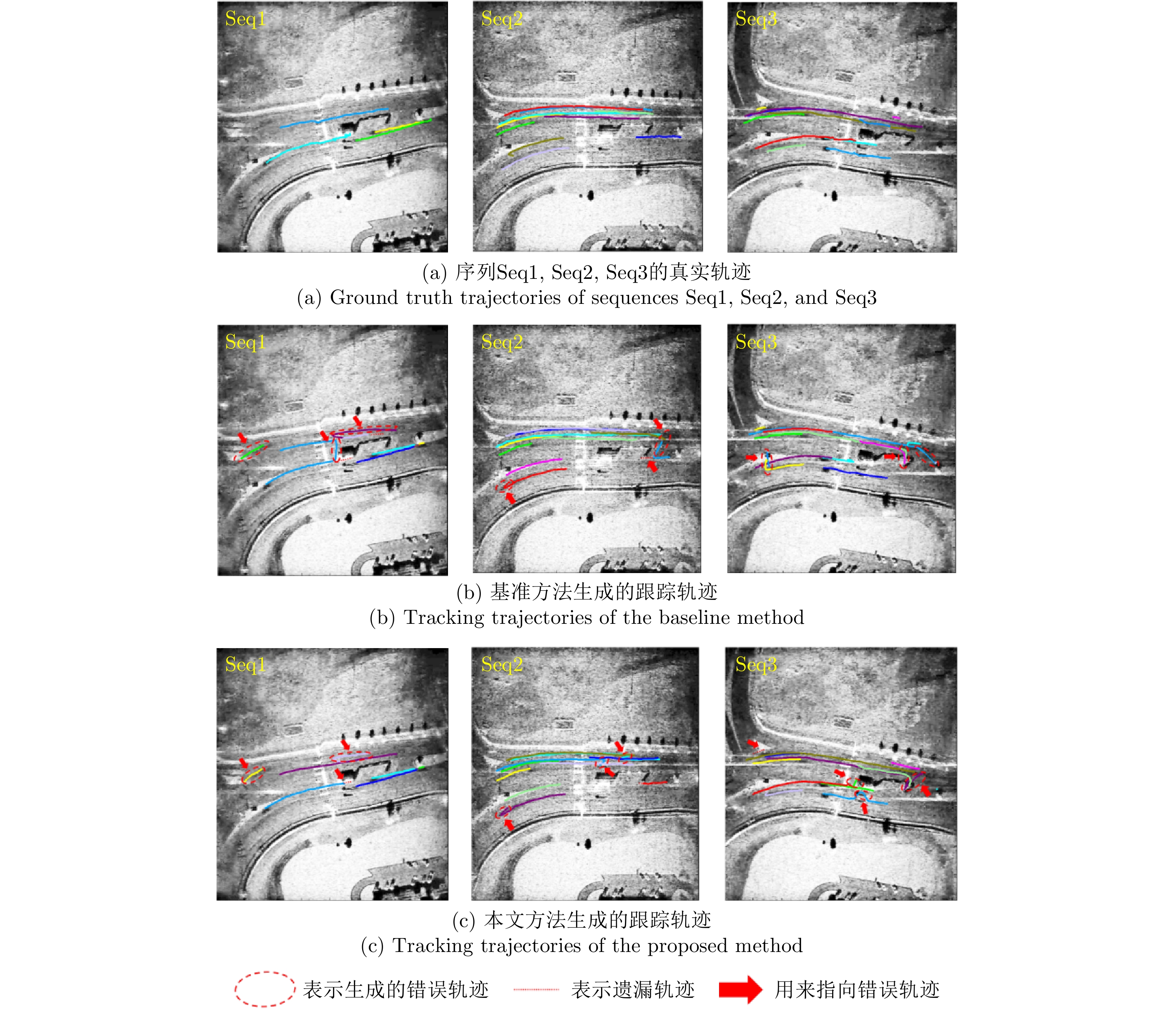

FANG Hui, LIAO Guisheng, LIU Yongjun, et al. Siam-Sort: Multi-target tracking in video SAR based on tracking by detection and Siamese network[J]. Remote Sensing, 2023, 15(1): 146. doi: 10.3390/rs15010146. |

[16] ZHONG Chao, DING Jinshan, and ZHANG Yuhong. Video SAR moving target tracking using joint kernelized correlation filter[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2022, 15: 1481–1493. doi: 10.1109/JSTARS.2022.3146035.

[17] KUHN H W. The Hungarian method for the assignment problem[J]. Naval Research Logistics Quarterly, 1955, 2(1/2): 83–97. doi: 10.1002/nav.3800020109.

[18] KALMAN R E. A new approach to linear filtering and prediction problems[J]. Journal of Basic Engineering, 1960, 82(1): 35–45. doi: 10.1115/1.3662552.

[19] BEWLEY A, GE Zongyuan, OTT L, et al. Simple online and realtime tracking[C]. 2016 IEEE International Conference on Image Processing (ICIP), Phoenix, USA, 2016: 3464–3468. doi: 10.1109/ICIP.2016.7533003.

[20] ZHANG Yifu, SUN Peize, JIANG Yi, et al. ByteTrack: Multi-object tracking by associating every detection box[C]. 17th European Conference on Computer Vision – ECCV 2022, Tel Aviv, Israel, 2022: 1–21. doi: 10.1007/978-3-031-20047-2_1.

[21] YANG Lihe, ZHAO Zhen, and ZHAO Hengshuang. UniMatch V2: Pushing the limit of semi-supervised semantic segmentation[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2025, 47(4): 3031–3048. doi: 10.1109/TPAMI.2025.3528453.

[22] GOODMAN J W. Some fundamental properties of speckle[J]. Journal of the Optical Society of America, 1976, 66(11): 1145–1150. doi: 10.1364/JOSA.66.001145.

[23] GOODMAN J W. Speckle Phenomena in Optics: Theory and Applications[M]. Englewood: Roberts & Company Publishers, 2007: 73–82.

[24] GOODFELLOW I J, SHLENS J, and SZEGEDY C. Explaining and harnessing adversarial examples[C]. International Conference on Learning Representations (ICLR), San Diego, USA, 2015. doi: 10.48550/arXiv.1412.6572.

[25] OQUAB M, DARCET T, MOUTAKANNI T, et al. DINOv2: Learning robust visual features without supervision[OL]. arXiv preprint arXiv: 2304.07193, 2023. doi: 10.48550/arXiv.2304.07193.

[26] BERNARDIN K and STIEFELHAGEN R. Evaluating multiple object tracking performance: The CLEAR MOT metrics[J]. EURASIP Journal on Image and Video Processing, 2008, 2008(1): 246309. doi: 10.1155/2008/246309.

[27] RISTANI E, SOLERA F, ZOU R, et al. Performance measures and a data set for multi-target, multi-camera tracking[C]. Computer Vision – ECCV 2016 Workshops, Amsterdam, The Netherlands, 2016: 17–35. doi: 10.1007/978-3-319-48881-3_2.

| [28] |

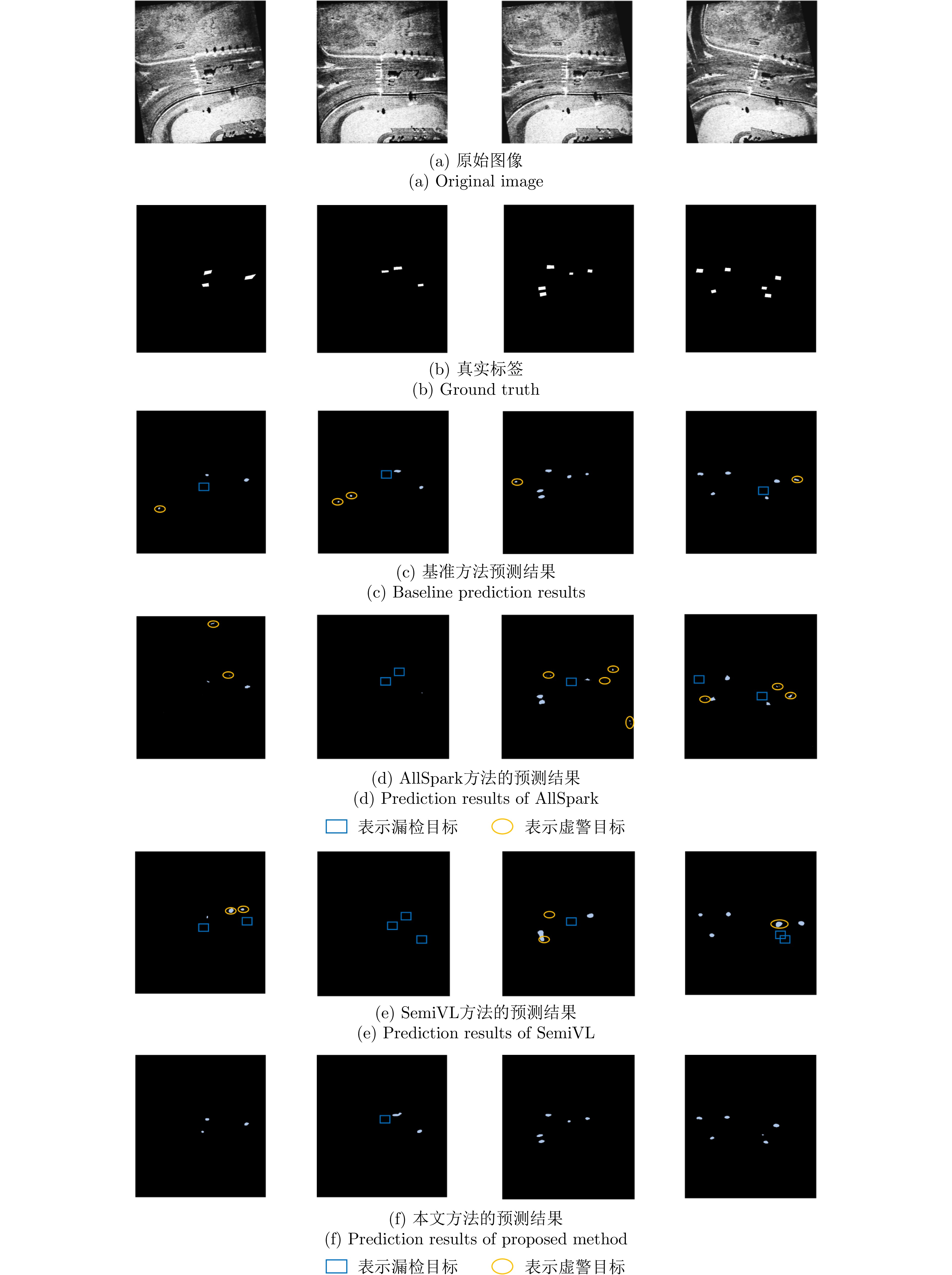

WANG Haonan, ZHANG Qixiang, LI Yi, et al. AllSpark: Reborn labeled features from unlabeled in transformer for semi-supervised semantic segmentation[C]. 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, USA, 2024: 3627–3636. doi: 10.1109/CVPR52733.2024.00348. |

[29] HOYER L, TAN D J, NAEEM M F, et al. SemiVL: Semi-supervised semantic segmentation with vision-language guidance[C]. 18th European Conference on Computer Vision – ECCV 2024, Milan, Italy, 2025: 257–275. doi: 10.1007/978-3-031-72933-1_15.

[30] ZHANG Yifu, WANG Chunyu, WANG Xinggang, et al. FairMOT: On the fairness of detection and re-identification in multiple object tracking[J]. International Journal of Computer Vision, 2021, 129(11): 3069–3087. doi: 10.1007/s11263-021-01513-4.

[31] CAO Jinkun, PANG Jiangmiao, WENG Xinshuo, et al. Observation-centric SORT: Rethinking SORT for robust multi-object tracking[C]. 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, Canada, 2023: 9686–9696. doi: 10.1109/CVPR52729.2023.00934.

[32] ZHANG Wensi, ZHANG Xiaoling, XU Xiaowo, et al. GNN-JFL: Graph neural network for video SAR shadow tracking with joint motion-appearance feature learning[J]. IEEE Transactions on Geoscience and Remote Sensing, 2024, 62: 5209117. doi: 10.1109/TGRS.2024.3383870.

[33] SU Mingjie, NI Peishuang, PEI Hao, et al. Graph feature representation for shadow-assisted moving target tracking in video SAR[J]. IEEE Geoscience and Remote Sensing Letters, 2025, 22: 4004905. doi: 10.1109/LGRS.2025.3539748.