
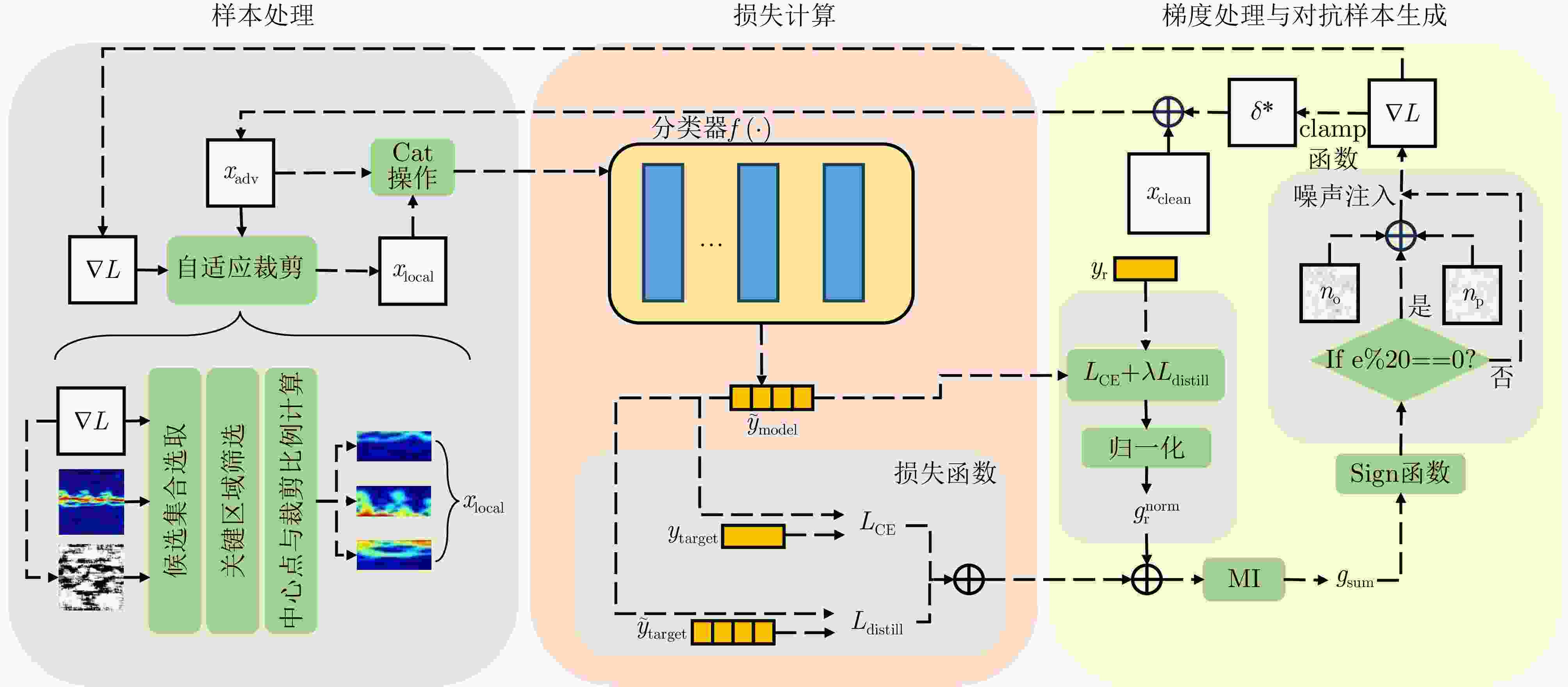

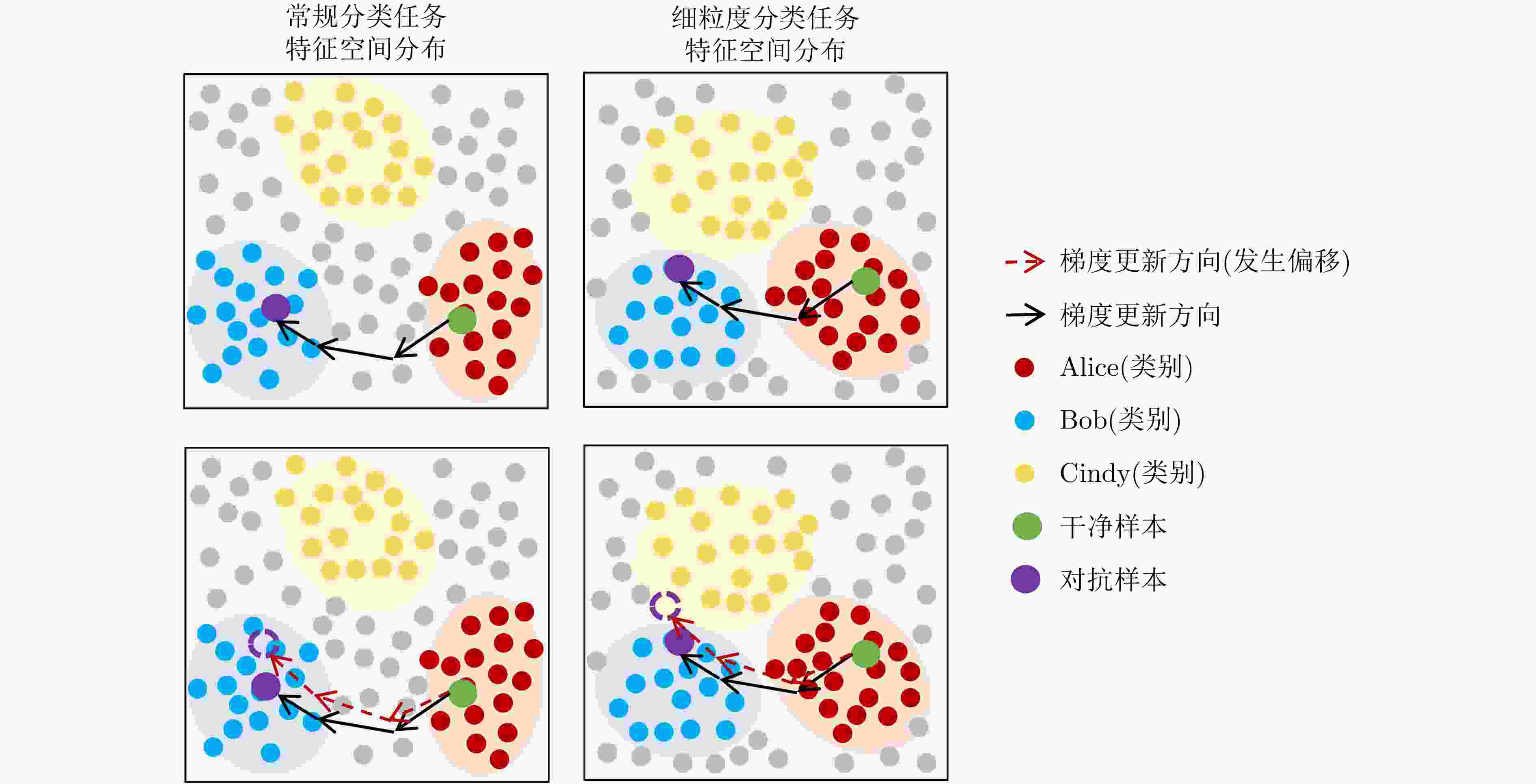

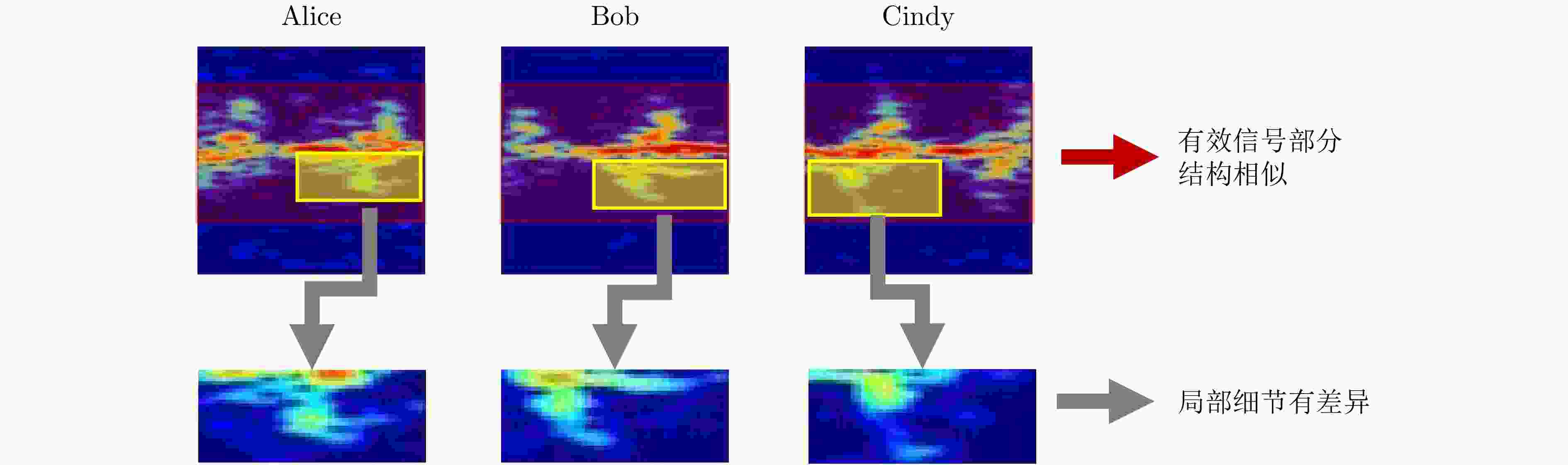

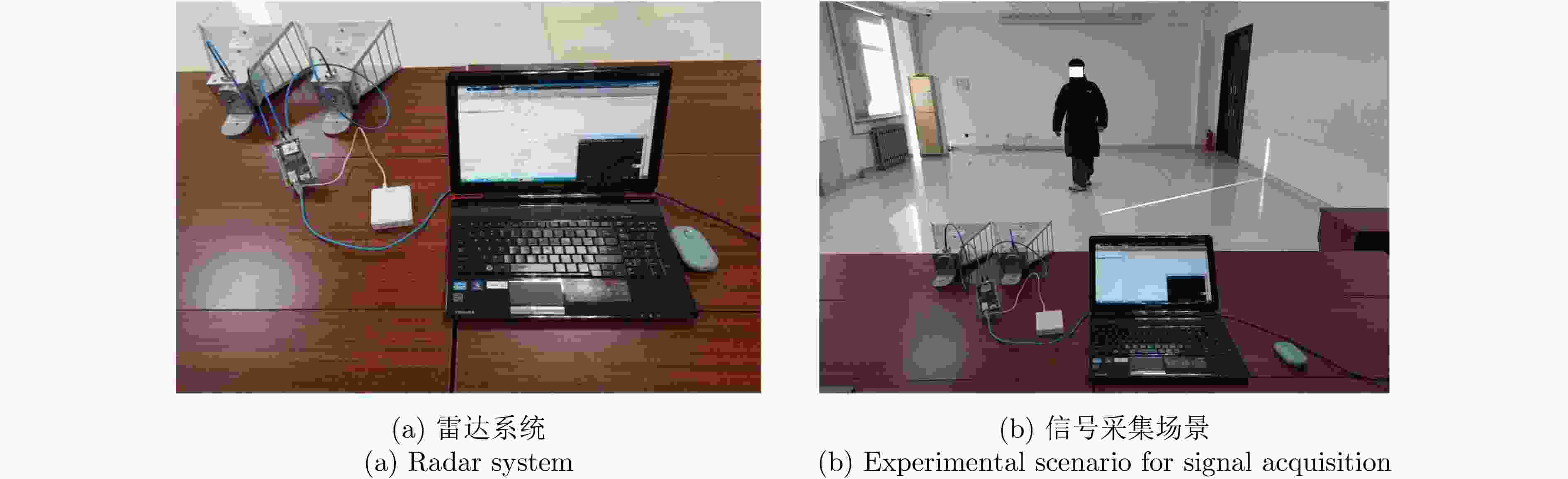

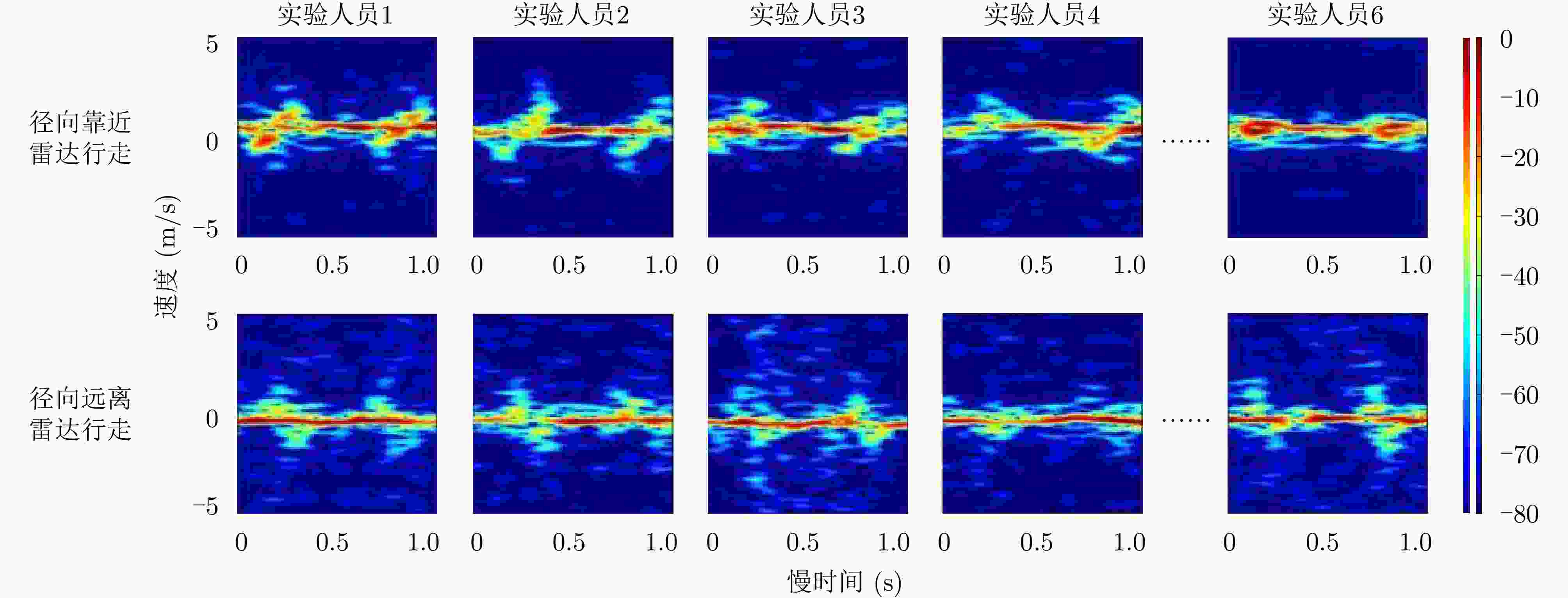

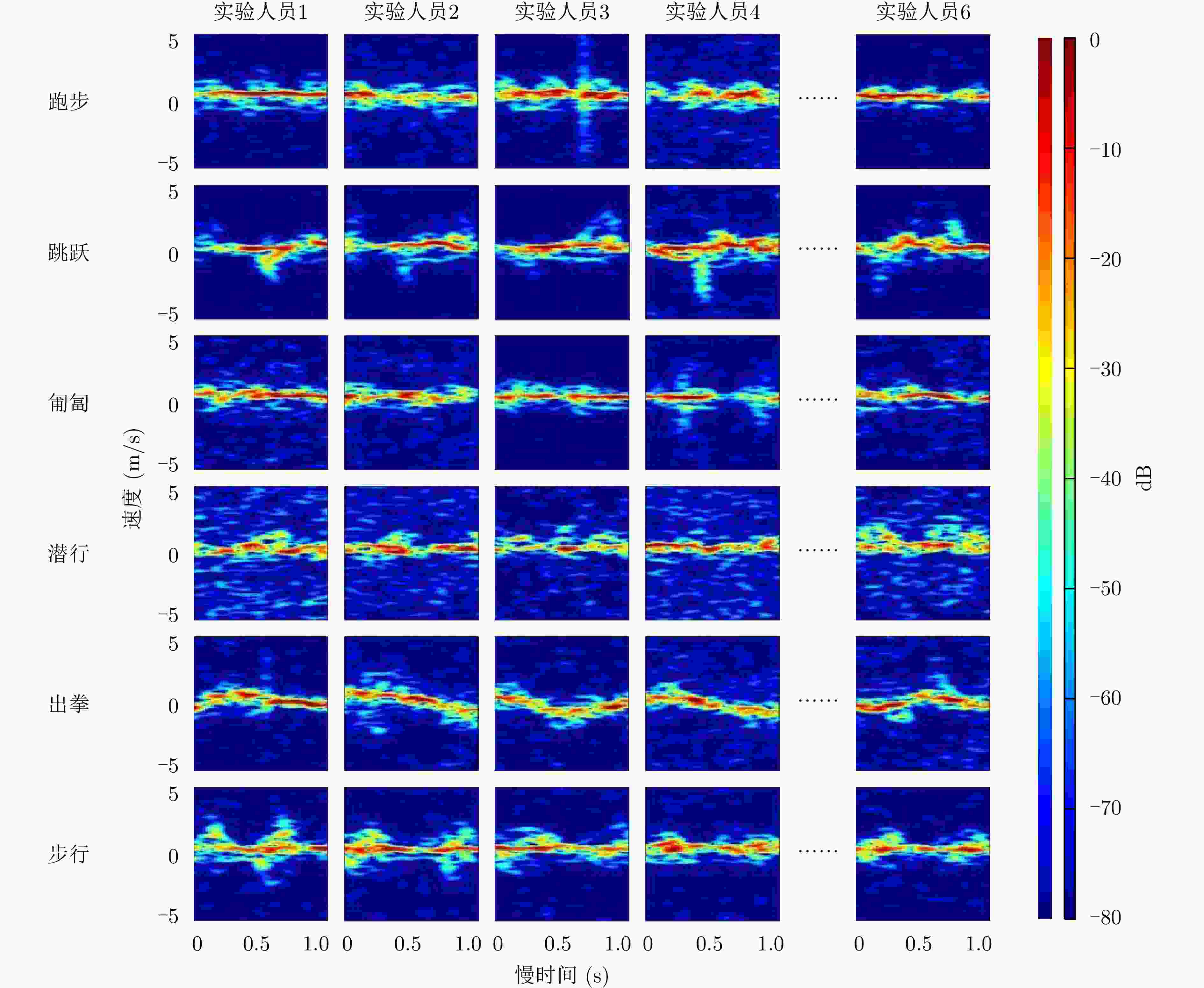

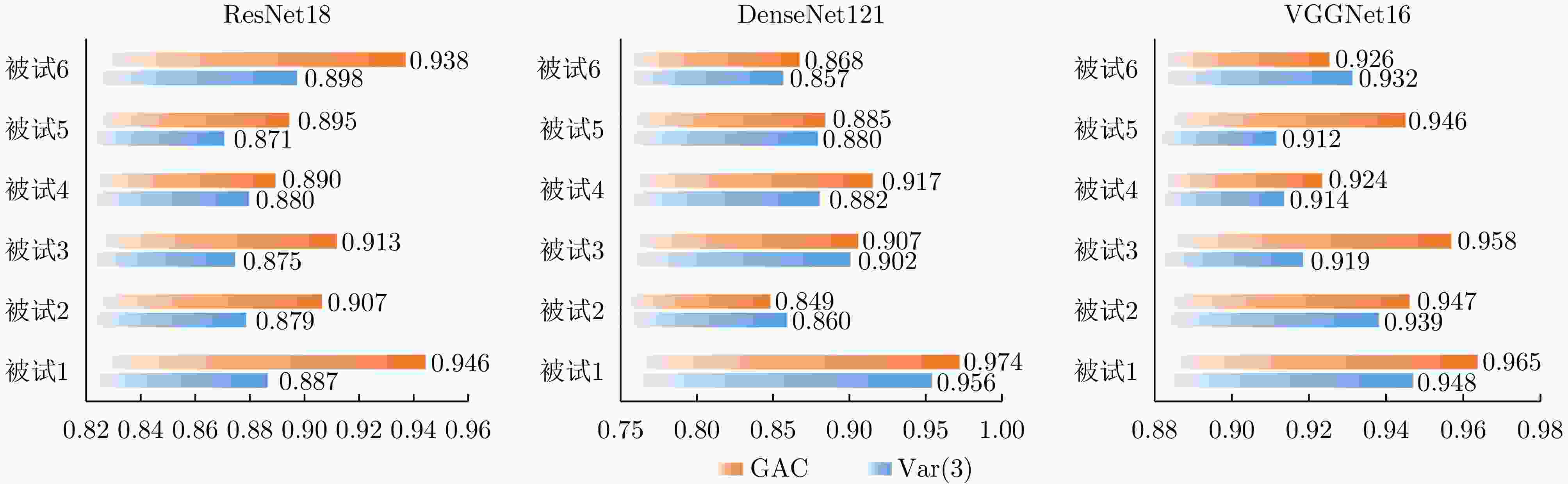

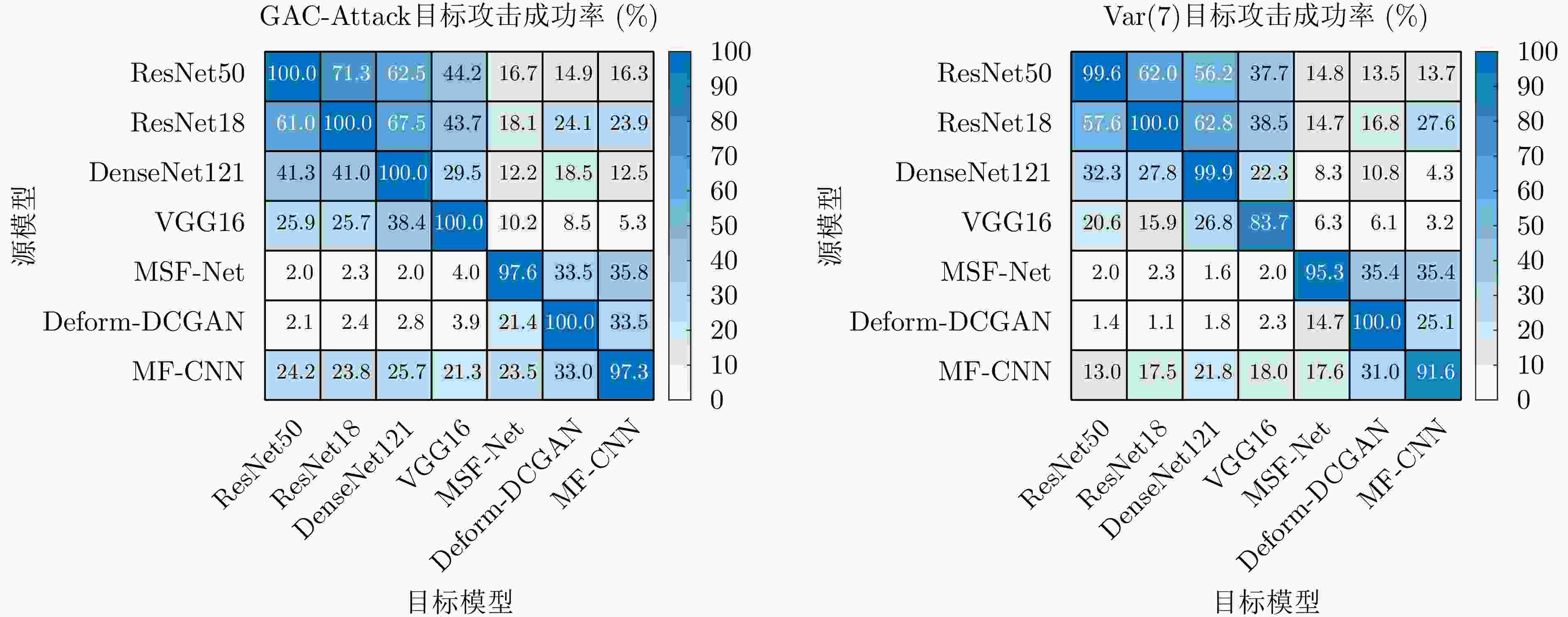

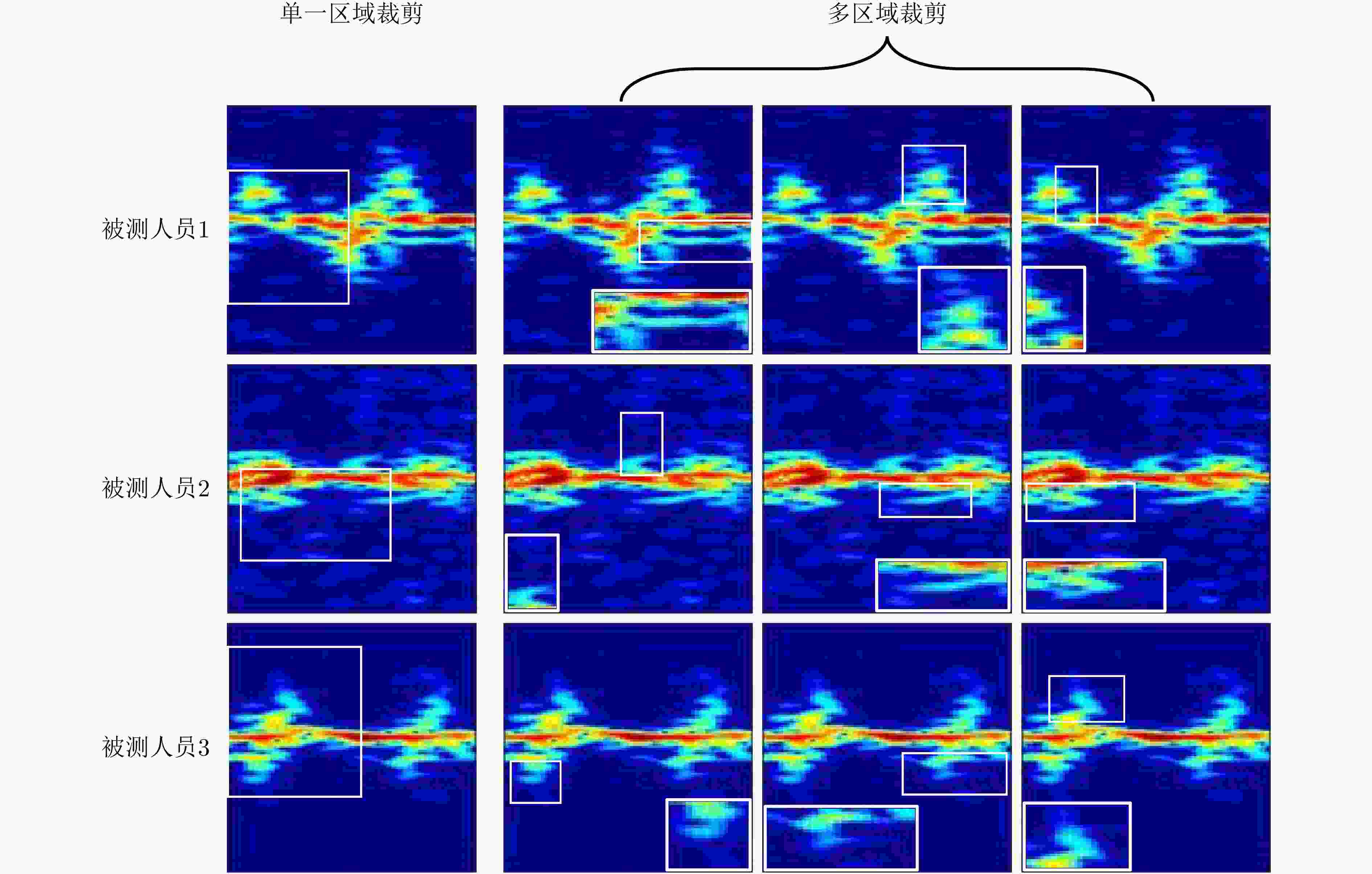

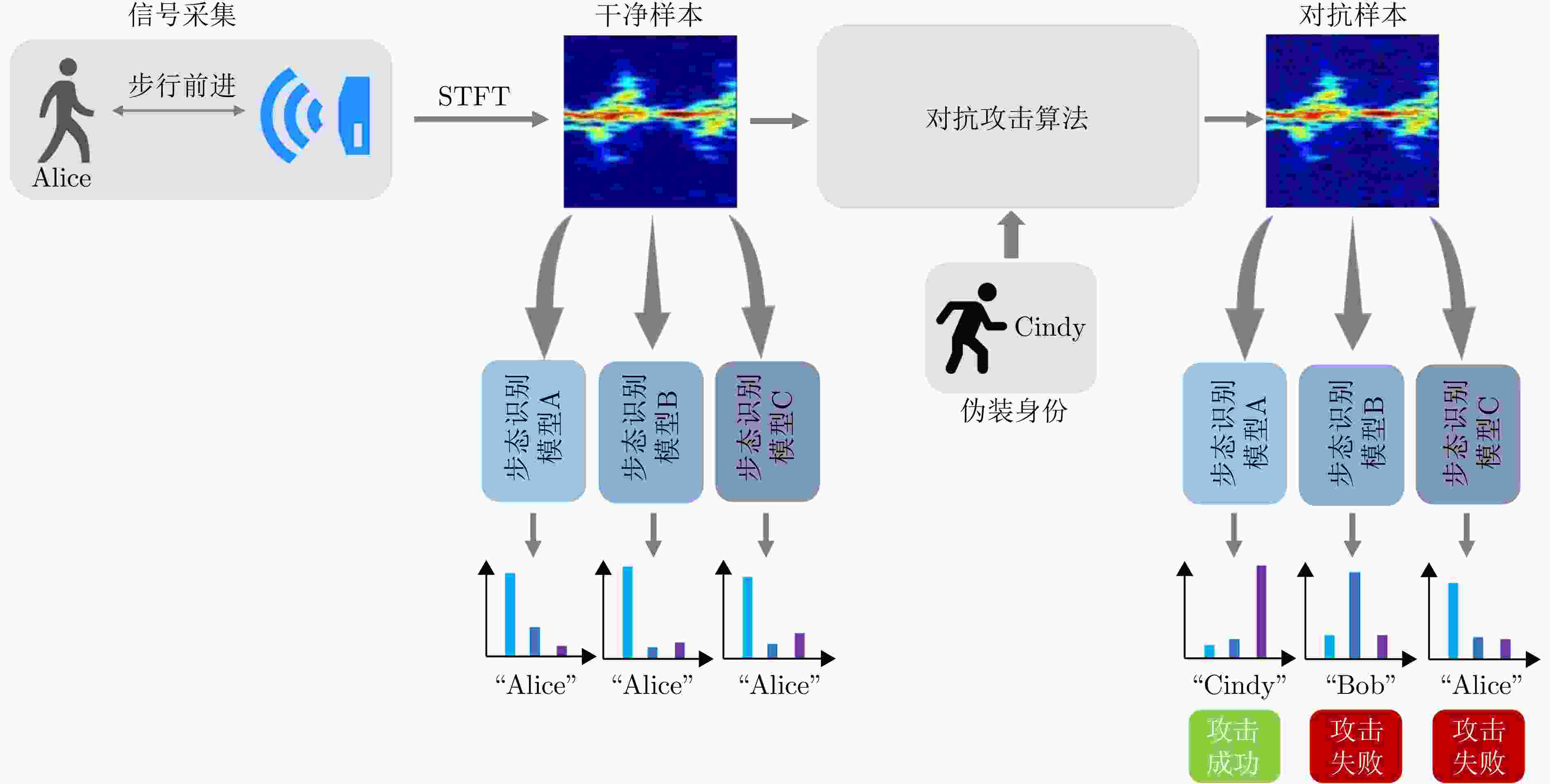

Abstract: Evaluating the security boundary of radar micro-Doppler gait recognition systems under adversarial attack is of practical importance. Existing attack methods are mostly transferred directly from the optical image domain and ignore the fine-grained feature distribution and time-frequency structure of micro-Doppler spectrograms, which limits their transferability in cross-model black-box targeted attack scenarios. To address this, we propose Gradient Guidance and Adaptive Cropping Radar Gait Targeted Attack (GAC-Attack), a black-box targeted attack framework for human gait micro-Doppler signatures. Because the features of different classes are closely distributed and the targeted attack direction is therefore prone to semantic shift, we build an inter-class relationship-guided robust gradient optimization mechanism. Because discriminative information is concentrated in local time-frequency regions, we design an adaptive local cropping mechanism that strengthens the perturbation's interference with discriminative features shared across models. We construct two datasets, one for single-action gait recognition and one for multi-action identity recognition, and run systematic comparative experiments across seven network architectures and seven black-box targeted attack methods. The results show that GAC-Attack raises the average targeted attack success rate over the second-best baseline by approximately 7 and 4 percentage points on the gait and identity datasets, respectively, and leads in most model combinations. These results support the effectiveness of the method in fine-grained, complex scenarios and its stability in cross-model transfer.


Algorithm 1. Adversarial example generation procedure of GAC-Attack

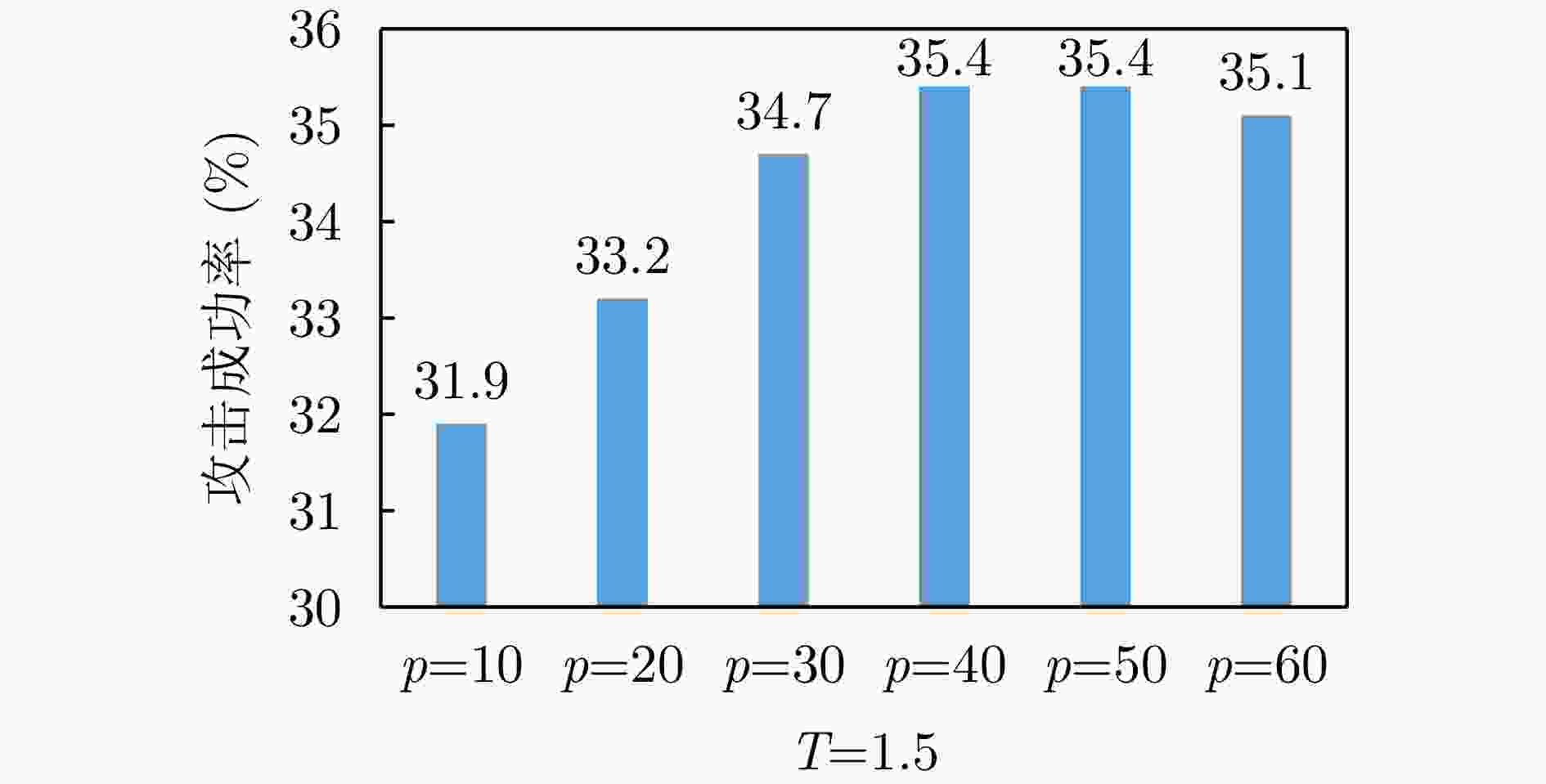

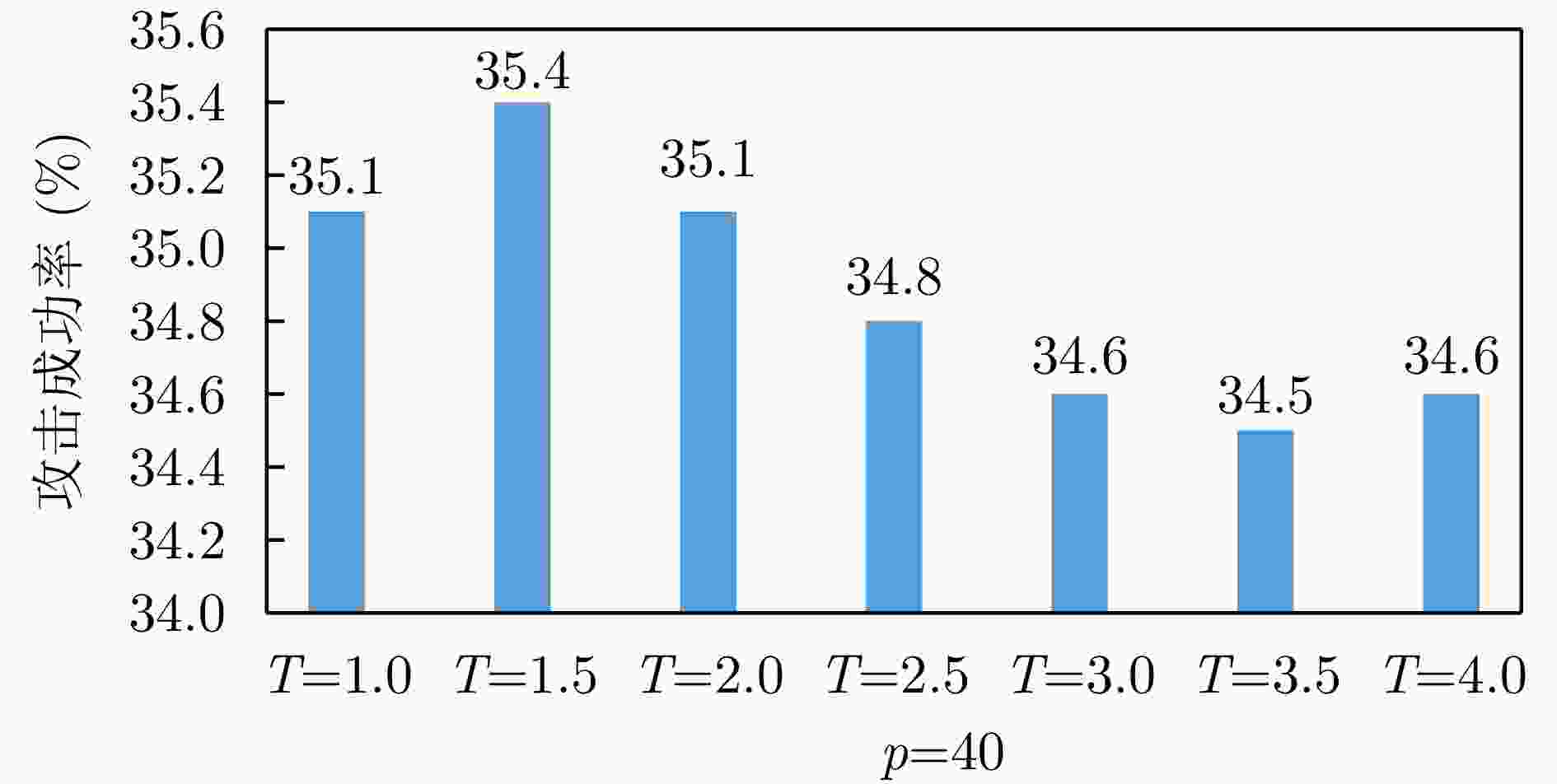

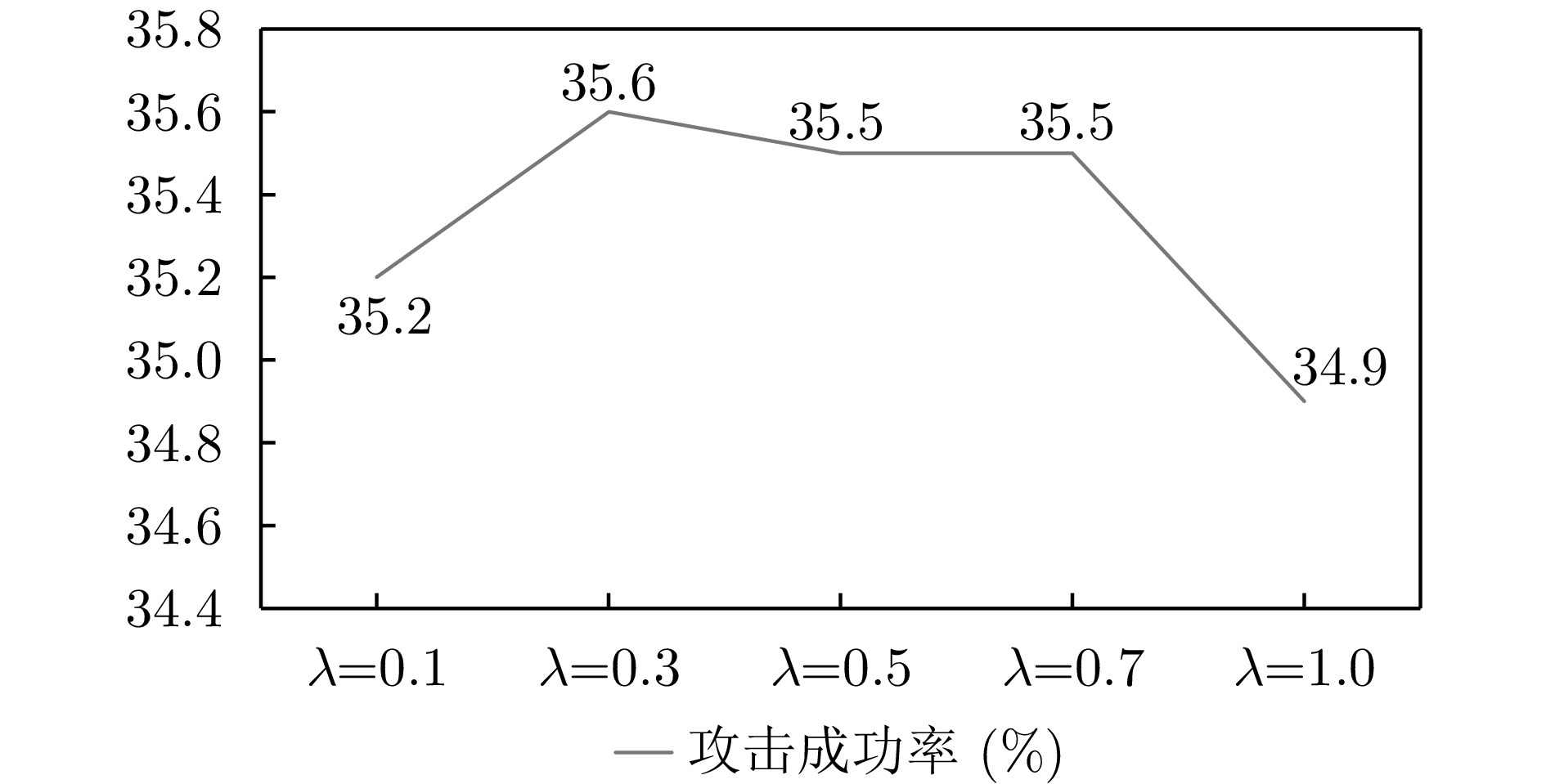

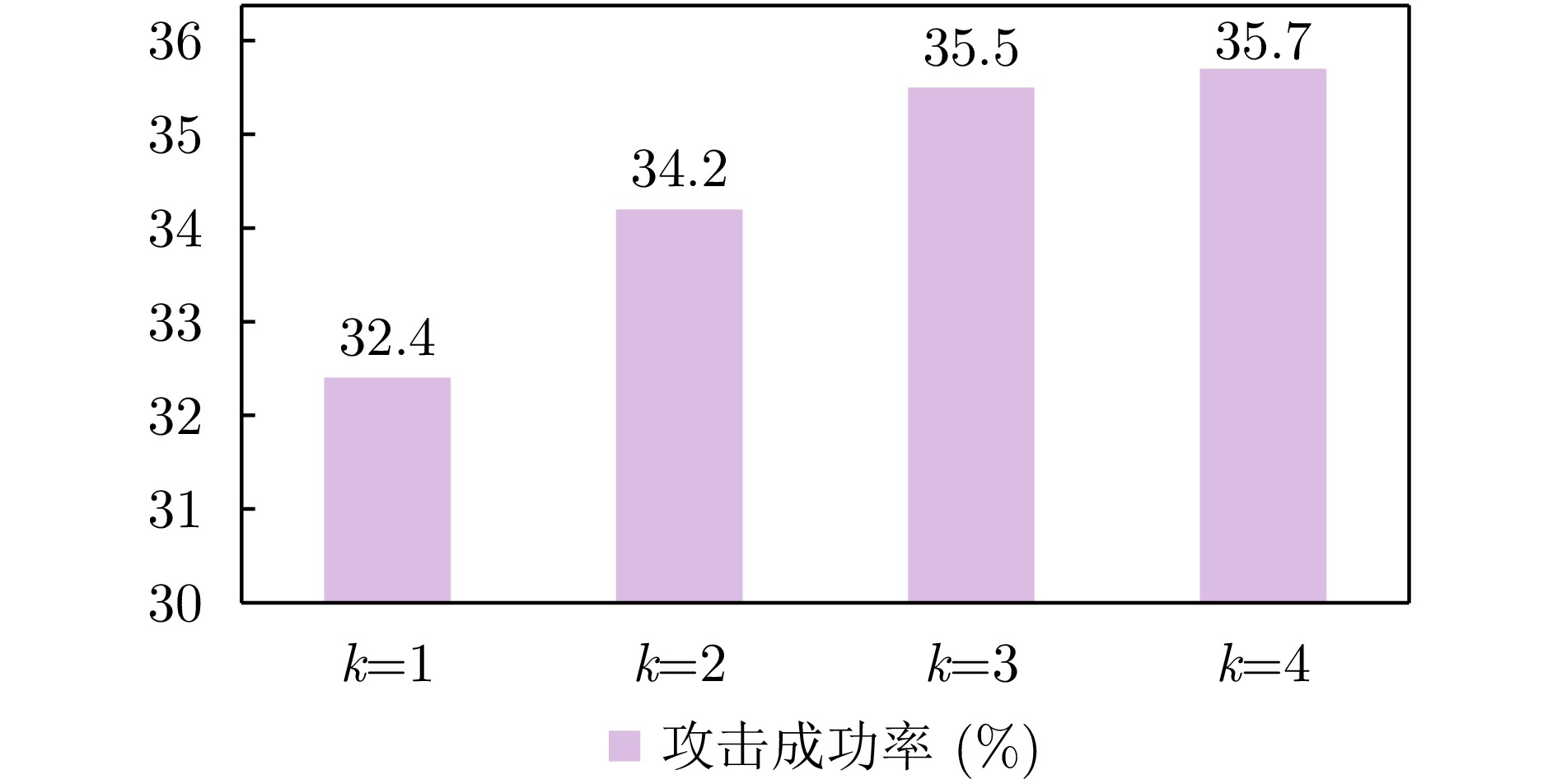

Input: clean sample $ {\boldsymbol{x}}_{\text{clean}} $, surrogate model $ f $, one-hot target label $ {\boldsymbol{y}}_{\text{target}} $, soft labels of the different classes $ {\tilde{\boldsymbol{y}}}_{\text{target}} $

Parameters: number of iterations $ I $, gradient update step size $ \alpha $, perturbation budget $ \epsilon $, number of crops $ k $, temperature $ T $, threshold-segmentation percentile $ p $, ratio parameter $ \beta $, and loss coefficient $ \lambda $

Output: adversarial example $ {\boldsymbol{x}}_{\text{adv}} $

Step 1: $ \boldsymbol{x}_{\text{adv}}^{0}=\boldsymbol{x},\;{\boldsymbol{g}}_{0}=0,\;{\delta }_{0}=0 $

Step 2: For $ i=0 $ to $ I $ do:

Step 3: If $ i=0 $, obtain $ X_{\text{local}}^{i} $ by random cropping; otherwise $ X_{\text{local}}^{i} \leftarrow $ crop by Eq. (32)

Step 4: Pad every local adversarial sample $ \boldsymbol{x}_{\text{local}}^{i,l} $ in $ X_{\text{local}}^{i} $ with the background value to the same size as $ \boldsymbol{x}_{\text{adv}}^{i} $

Step 5: $ {\tilde{\boldsymbol{y}}}^{\boldsymbol{x}_{\text{adv}}^{i}}_{\text{model}}\leftarrow f(\boldsymbol{x}_{\text{adv}}^{i}),\quad {\tilde{\boldsymbol{y}}}^{X_{\text{local}}^{i}}_{\text{model}}\leftarrow f(X_{\text{local}}^{i}) $, where $ {\tilde{\boldsymbol{y}}}^{X_{\text{local}}^{i}}_{\text{model}} $ denotes the set of predictions obtained by forward-propagating each sample of the local set $ X_{\text{local}}^{i} $ through the surrogate model

Step 6: Compute the loss: compute $ {L}_{\text{global}} $ from $ \boldsymbol{x}_{\text{adv}}^{i} $ by Eqs. (2)-(5); compute $ {L}_{\text{local},l} $ for every local sample $ \boldsymbol{x}_{\text{local}}^{i,l} $ in $ X_{\text{local}}^{i} $ by Eqs. (2)-(5), giving $ {L}_{\text{local}}=\displaystyle\sum \nolimits_{l=1}^{k}{L}_{\text{local},l} $ and $ {L}_{\text{total}}={L}_{\text{global}}+{L}_{\text{local}} $

Step 7: Compute $ \boldsymbol{g}_{\text{r}}^{\text{norm}} $ by Eq. (10)

Step 8: Gradient computation: $ \boldsymbol{g}_{\text{sum}}^{i+1}=\nabla {L}_{\text{total}}-\boldsymbol{g}_{\text{r}}^{\text{norm}} $

Step 9: MI (momentum) transform: $ \boldsymbol{g}_{\text{sum}}^{i+1}=\boldsymbol{g}_{\text{sum}}^{i}+\dfrac{\boldsymbol{g}_{\text{sum}}^{i+1}}{{\left\|\boldsymbol{g}_{\text{sum}}^{i+1}\right\|}_{2}} $

Step 10: If $ i \gt 0 $ and $ i\,\%\,20=0 $: compute $ {\boldsymbol{n}}_{\text{final}} $ by Eq. (15); $ \boldsymbol{g}_{\text{total}}^{i+1}=\boldsymbol{g}_{\text{sum}}^{i+1}+{\boldsymbol{n}}_{\text{final}} $

Step 11: $ \boldsymbol{x}_{\text{adv}}^{i+1}=\text{Clamp}_{\boldsymbol{x}}^{\epsilon }(\boldsymbol{x}_{\text{adv}}^{i}+\alpha \cdot \text{sign}(\boldsymbol{g}_{\text{total}}^{i+1})) $

Step 12: $ \boldsymbol{x}_{\text{adv}}^{i}=\boldsymbol{x}_{\text{adv}}^{i+1} $

End for

Return $ \boldsymbol{x}_{\text{adv}}^{I-1} $
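The core update of Algorithm 1 (global plus local loss gradients, momentum accumulation, sign step, and clamping to the perturbation budget) can be illustrated with a minimal NumPy sketch. Everything specific here is an assumption made for illustration: the surrogate is a toy linear-softmax classifier, the loss is the plain log-probability of the target class rather than the losses of Eqs. (2)-(5), crops are always random rather than chosen by Eq. (32), and the gradient correction of Eq. (10) and the noise term of Eq. (15) are omitted because those equations are not reproduced in this section. The names `target_logprob_grad` and `targeted_attack_sketch` are hypothetical.

```python
import numpy as np


def softmax(z):
    """Numerically stable softmax over a 1-D logit vector."""
    e = np.exp(z - z.max())
    return e / e.sum()


def target_logprob_grad(weights, x, target):
    """Gradient of log p(target | x) w.r.t. x for a toy linear-softmax surrogate."""
    p = softmax(weights @ x.ravel())
    onehot = np.zeros_like(p)
    onehot[target] = 1.0
    return (weights.T @ (onehot - p)).reshape(x.shape)


def targeted_attack_sketch(weights, x_clean, target, n_iter=20, alpha=0.01,
                           eps=0.1, k=2, crop=(4, 4), background=0.0, seed=0):
    """Momentum sign-gradient targeted attack with k padded random crops."""
    rng = np.random.default_rng(seed)
    height, width = x_clean.shape
    x_adv = x_clean.copy()
    g_sum = np.zeros_like(x_clean)
    for step in range(n_iter):
        # Global term: gradient on the full adversarial input.
        grad = target_logprob_grad(weights, x_adv, target)
        # Local terms: k random crops, each padded with the background value.
        for _ in range(k):
            r = rng.integers(0, height - crop[0] + 1)
            c = rng.integers(0, width - crop[1] + 1)
            mask = np.zeros_like(x_adv)
            mask[r:r + crop[0], c:c + crop[1]] = 1.0
            x_local = mask * x_adv + (1.0 - mask) * background
            # The padding is constant, so only pixels inside the crop carry gradient.
            grad += mask * target_logprob_grad(weights, x_local, target)
        # Momentum accumulation of the L2-normalised gradient (cf. step 9).
        g_sum = g_sum + grad / (np.linalg.norm(grad) + 1e-12)
        # Sign step, then projection onto the eps-ball around x_clean (cf. step 11).
        x_adv = np.clip(x_adv + alpha * np.sign(g_sum), x_clean - eps, x_clean + eps)
    return x_adv


# Toy usage: 6 classes and an 8x8 stand-in for a spectrogram (both arbitrary).
rng = np.random.default_rng(1)
W = 0.1 * rng.standard_normal((6, 64))
x = rng.random((8, 8))
x_adv = targeted_attack_sketch(W, x, target=3)
print(np.abs(x_adv - x).max())  # largest per-pixel perturbation
```

The sketch ascends the target-class log-probability, which matches the plus sign in step 11 only under the assumption that the total loss is defined so that larger values favour the target class. It also does not clip to a valid pixel range, which a real spectrogram pipeline would likely need.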

Table 1. Composition of the gait dataset

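| Target class | Training samples | Test samples |
| --- | --- | --- |
| Subject 1 | 730 | 146 |
| Subject 2 | 676 | 135 |
| Subject 3 | 718 | 143 |
| Subject 4 | 594 | 118 |
| Subject 5 | 585 | 116 |
| Subject 6 | 662 | 132 |
| Total | 3965 | 790 |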

Table 2. Composition of the identity dataset

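| Target class | Training samples | Test samples |
| --- | --- | --- |
| Subject 1 | 133 | 27 |
| Subject 2 | 120 | 24 |
| Subject 3 | 132 | 27 |
| Subject 4 | 103 | 21 |
| Subject 5 | 57 | 12 |
| Subject 6 | 149 | 30 |
| Total | 694 | 141 |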

Table 3. Recognition accuracy of the target model

| Model | Gait dataset ACC (%) | Identity dataset ACC (%) |
| --- | --- | --- |
| ResNet50 | 98.61 | 97.16 |
| ResNet18 | 99.24 | 99.29 |
| DenseNet121 | 99.24 | 98.58 |
| VGGNet16 | 97.72 | 98.58 |
| MSF-Net | 95.32 | 98.58 |
| Deform-DCGAN | 95.95 | 99.29 |
| MF-CNN | 97.97 | 93.62 |

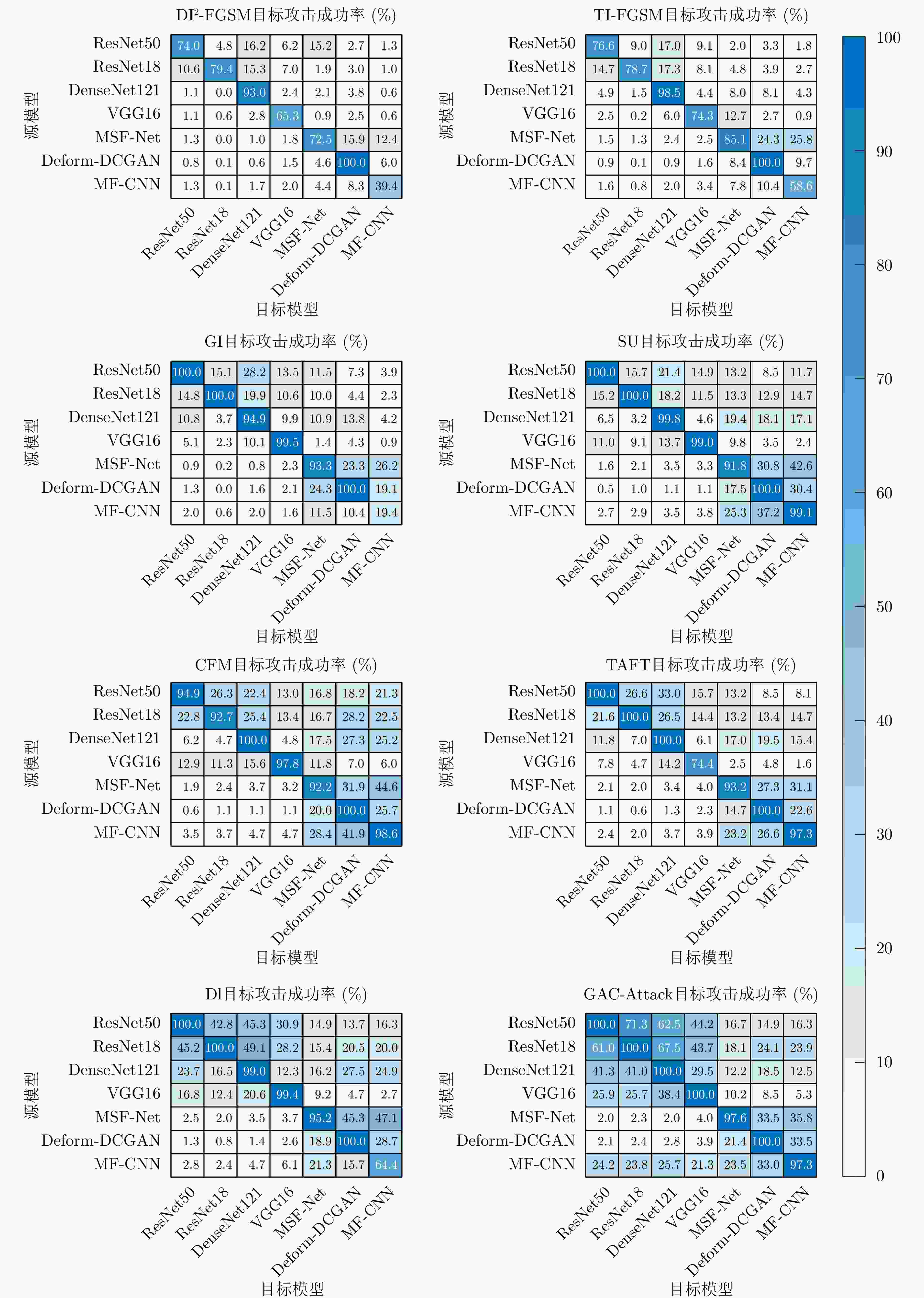

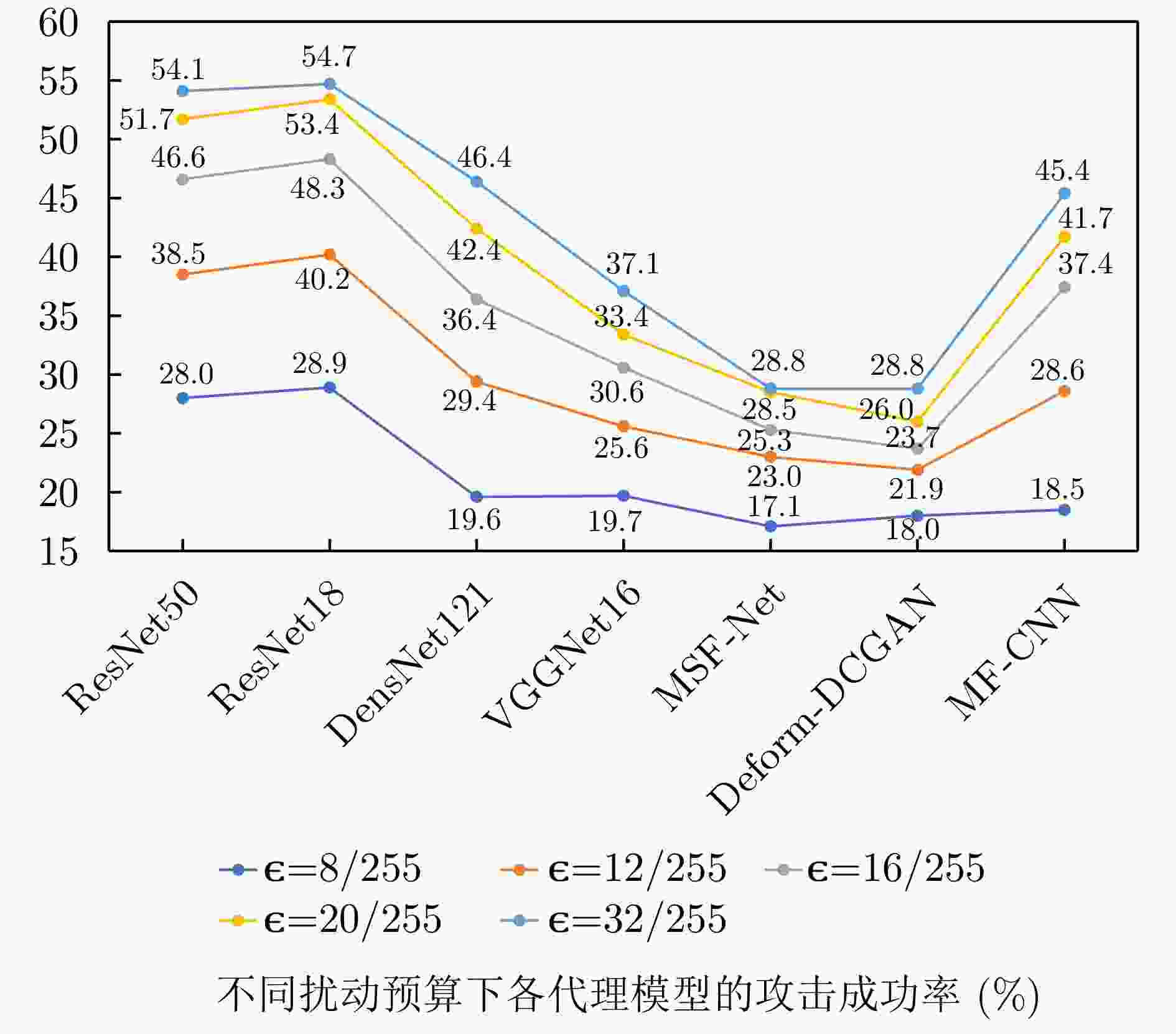

Table 4. Average attack success rates of different baseline algorithms across multiple surrogate models on the gait dataset (%)

| Surrogate model | CFM | Dl | TAFT | GI | SU | TI-FGSM | DI2-FGSM | GAC-Attack |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| ResNet50 | 30.4 | 37.7 | 29.3 | 25.7 | 26.5 | 17.2 | 16.9 | 46.6 |
| ResNet18 | 31.7 | 39.8 | 29.1 | 23.1 | 26.5 | 16.9 | 18.6 | 48.3 |
| DenseNet121 | 26.5 | 31.4 | 25.2 | 21.2 | 24.1 | 14.7 | 18.5 | 36.4 |
| VGGNet16 | 23.2 | 23.7 | 15.7 | 17.6 | 21.2 | 10.6 | 14.2 | 30.6 |
| MSF-Net | 25.7 | 28.5 | 23.3 | 21.0 | 25.1 | 15.0 | 20.4 | 25.3 |
| Deform-DCGAN | 21.4 | 21.9 | 20.4 | 21.2 | 21.7 | 16.2 | 17.4 | 23.7 |
| MF-CNN | 26.5 | 16.8 | 22.7 | 6.8 | 24.9 | 9.3 | 13.8 | 37.4 |
| Average | 26.5 | 28.5 | 23.7 | 19.5 | 24.3 | 14.3 | 17.1 | 35.5 |

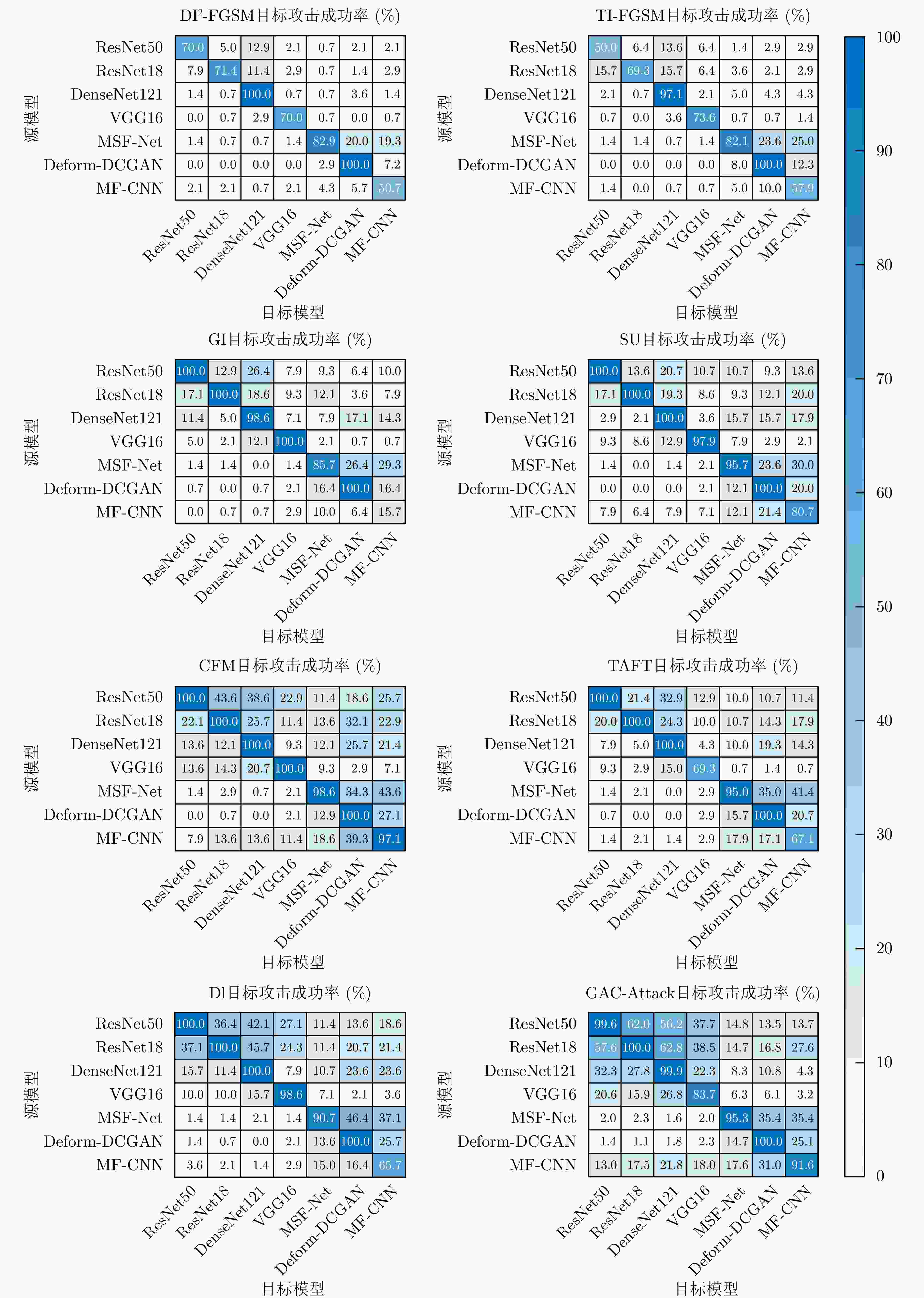

Table 5. Average attack success rates of different baseline algorithms across multiple surrogate models on the identity dataset (%)

| Surrogate model | CFM | Dl | TAFT | GI | SU | TI-FGSM | DI2-FGSM | GAC-Attack |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| ResNet50 | 37.2 | 35.6 | 28.5 | 24.7 | 25.5 | 11.9 | 13.6 | 43.5 |
| ResNet18 | 32.6 | 37.2 | 28.2 | 24.1 | 26.6 | 16.5 | 14.1 | 45.7 |
| DenseNet121 | 27.8 | 27.6 | 23.0 | 23.1 | 22.6 | 16.5 | 15.5 | 31.0 |
| VGGNet16 | 24.0 | 21.0 | 14.2 | 17.6 | 20.2 | 11.5 | 10.7 | 26.2 |
| MSF-Net | 26.2 | 25.8 | 25.4 | 20.8 | 22.0 | 19.4 | 18.1 | 24.0 |
| Deform-DCGAN | 20.4 | 20.5 | 20.0 | 19.5 | 19.2 | 15.1 | 15.7 | 21.2 |
| MF-CNN | 28.8 | 15.3 | 15.7 | 5.2 | 20.5 | 12.4 | 11.0 | 33.3 |
| Average | 28.1 | 26.1 | 22.1 | 19.3 | 22.4 | 14.8 | 14.1 | 32.1 |
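The margins quoted in the abstract can be recomputed from the Average rows of Tables 4 and 5. The short sketch below does only that arithmetic; the helper name `margin_over_runner_up` is hypothetical, and the numbers are copied from the two tables.

```python
# Average rows of Tables 4 and 5 (targeted attack success rate, %).
averages = {
    "gait": {"CFM": 26.5, "Dl": 28.5, "TAFT": 23.7, "GI": 19.5, "SU": 24.3,
             "TI-FGSM": 14.3, "DI2-FGSM": 17.1, "GAC-Attack": 35.5},
    "identity": {"CFM": 28.1, "Dl": 26.1, "TAFT": 22.1, "GI": 19.3, "SU": 22.4,
                 "TI-FGSM": 14.8, "DI2-FGSM": 14.1, "GAC-Attack": 32.1},
}


def margin_over_runner_up(scores, ours="GAC-Attack"):
    """Return the best competing method and the gap to it in percentage points."""
    runner_up = max((m for m in scores if m != ours), key=scores.get)
    return runner_up, round(scores[ours] - scores[runner_up], 1)


for dataset, scores in averages.items():
    print(dataset, margin_over_runner_up(scores))
# gait ('Dl', 7.0)
# identity ('CFM', 4.0)
```

The strongest competing baseline differs between the two datasets (Dl on the gait dataset, CFM on the identity dataset), and both gaps are absolute differences in success rate.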

Table 6. Average attack success rates of different ablation variants

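Component columns, in order: logarithmic weighting, soft-label loss, gradient correction, noise exploration, candidate region selection, key region screening, cropping parameter determination.

| Ablation variant | Enabled components (√) | Average attack success rate (%) |
| --- | --- | --- |
| Baseline | | 20.5 |
| Var(1) | √ √ √ | 25.4 |
| Var(2) | √ √ √ √ | 28.6 |
| Var(3) | √ √ √ √ √ √ | 31.7 |
| Var(4) | √ √ √ √ √ | 31.5 |
| Var(5) | √ √ √ √ √ √ | 33.5 |
| Var(6) | √ √ √ √ √ √ | 34.5 |
| Var(7) | √ √ √ √ | 30.9 |
| Var(8) | √ √ √ √ √ √ | 33.9 |
| Var(9) | √ √ √ √ √ | 34.0 |
| GAC-Attack | √ √ √ √ √ √ √ | 35.5 |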

Table 7. Average targeted attack success rate (%) under different weighting schemes

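| Weighting scheme | ResNet50 | ResNet18 | DenseNet121 | VGGNet16 | MSF-Net | Deform-DCGAN | MF-CNN | Average |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| ln(·) | 46.6 | 48.3 | 36.4 | 30.6 | 25.3 | 23.7 | 37.4 | 35.5 |
| Square root | 43.4 | 45.9 | 32.6 | 26.9 | 23.3 | 21.2 | 35.6 | 32.7 |
| Reciprocal | 45.5 | 47.6 | 32.0 | 25.6 | 24.1 | 20.2 | 38.1 | 33.3 |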

Table 8. Targeted attack success rates (%) of different surrogate models under different SNR conditions

| SNR | ResNet50 | ResNet18 | DenseNet121 | VGGNet16 | MSF-Net | Deform-DCGAN | MF-CNN | Average |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Clean | 46.6 | 48.3 | 36.4 | 30.6 | 25.3 | 23.7 | 37.4 | 35.5 |
| 30 dB | 47.4 | 49.6 | 37.2 | 31.2 | 24.5 | 22.7 | 36.5 | 35.6 |
| 20 dB | 47.9 | 49.6 | 37.2 | 31.6 | 24.9 | 22.8 | 36.5 | 35.8 |
| 10 dB | 43.9 | 44.3 | 31.1 | 31.2 | 19.6 | 24.2 | 33.3 | 32.5 |
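For readers who want to reproduce SNR conditions like those in Table 8, the following is a minimal sketch of adding white Gaussian noise at a prescribed SNR. This section does not state at which processing stage the noise was injected (raw echo, spectrogram, or adversarial example), so the function below is only a generic illustration. The name `add_awgn` and the choice to measure signal power as the mean squared magnitude are assumptions.

```python
import numpy as np


def add_awgn(signal, snr_db, rng=None):
    """Add white Gaussian noise so that signal power / noise power = snr_db (in dB)."""
    rng = np.random.default_rng() if rng is None else rng
    signal = np.asarray(signal)
    p_signal = np.mean(np.abs(signal) ** 2)
    p_noise = p_signal / (10.0 ** (snr_db / 10.0))
    if np.iscomplexobj(signal):
        # Split the noise power evenly between real and imaginary parts.
        noise = np.sqrt(p_noise / 2.0) * (
            rng.standard_normal(signal.shape) + 1j * rng.standard_normal(signal.shape)
        )
    else:
        noise = np.sqrt(p_noise) * rng.standard_normal(signal.shape)
    return signal + noise


# Toy usage: a complex tone standing in for a radar echo, corrupted at 10 dB SNR.
n = np.arange(100_000)
echo = np.exp(2j * np.pi * 0.01 * n)
noisy = add_awgn(echo, snr_db=10.0, rng=np.random.default_rng(0))
measured = 10 * np.log10(np.mean(np.abs(echo) ** 2) / np.mean(np.abs(noisy - echo) ** 2))
print(round(measured, 1))  # close to the requested 10 dB
```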

Table 9. Comparison of per-sample generation time (ms) of different attack algorithms across surrogate models

| Algorithm | ResNet18 | DenseNet121 | MSF-Net | MF-CNN |
| --- | --- | --- | --- | --- |
| Dl | 361.9 | 1106.1 | 196.8 | 222.3 |
| CFM | 326.8 | 1194.3 | 123.4 | 149.4 |
| GI | 419.4 | 1579.1 | 558.0 | 296.2 |
| SU | 408.5 | 1249.6 | 513.7 | 287.1 |
| TAFT | 633.9 | 2055.6 | 358.4 | 371.5 |
| TI-FGSM | 348.3 | 1099.4 | 139.8 | 166.4 |
| DI2-FGSM | 359.6 | 1191.8 | 213.4 | 209.7 |
| GAC-Attack | 1249.7 | 4943.4 | 779.7 | 776.9 |

Table 10. Comparison of per-sample generation time (ms) of GAC-Attack partial ablation variants across surrogate models

| Algorithm | ResNet18 | DenseNet121 | MSF-Net | MF-CNN |
| --- | --- | --- | --- | --- |
| Var(7) | 1275.8 | 3997.5 | 619.3 | 684.5 |
| Var(1) | 774.0 | 2739.6 | 427.3 | 423.9 |
| Var(5) | 994.6 | 3512.9 | 516.6 | 574.0 |
| Var(4) | 1106.8 | 3780.0 | 594.0 | 553.6 |
| Var(6) | 1231.2 | 4059.3 | 761.8 | 740.7 |
| GAC-Attack | 1249.7 | 4943.4 | 779.7 | 776.9 |

Table 11. Target model recognition accuracy under data partitioning based on acquisition order (%)

| Model | Gait dataset ACC (%) |
| --- | --- |
| ResNet50 | 83.58 |
| ResNet18 | 83.24 |
| DenseNet121 | 82.34 |
| VGGNet16 | 72.44 |
| MSF-Net | 78.18 |
| Deform-DCGAN | 75.93 |
| MF-CNN | 77.50 |

Table 12. Targeted attack success rates (%) of different attack algorithms across surrogate models under data partitioning based on acquisition order

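| Algorithm | ResNet50 | ResNet18 | DenseNet121 | VGGNet16 | MSF-Net | Deform-DCGAN | MF-CNN | Average |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GAC-Attack | 60.2 | 60.1 | 49.3 | 41.5 | 27.8 | 41.4 | 47.0 | 46.8 |
| Dl | 45.7 | 48.1 | 39.1 | 33.4 | 29.9 | 34.8 | 26.0 | 36.7 |
| CFM | 37.0 | 34.4 | 29.7 | 29.3 | 27.0 | 26.8 | 32.7 | 31.0 |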

