Research Progress and Prospects of Synthetic Aperture Radar Image Colorization Algorithms


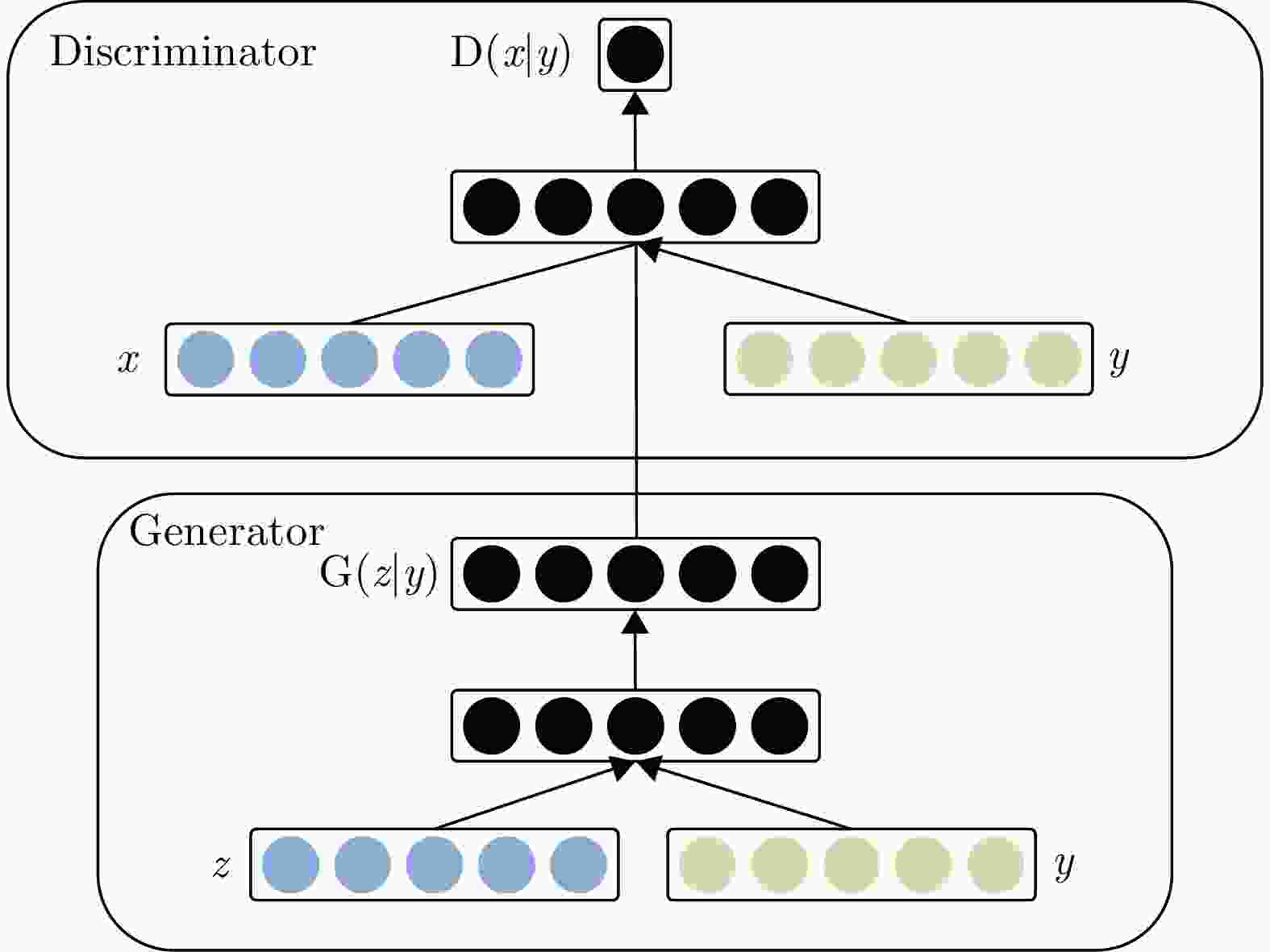

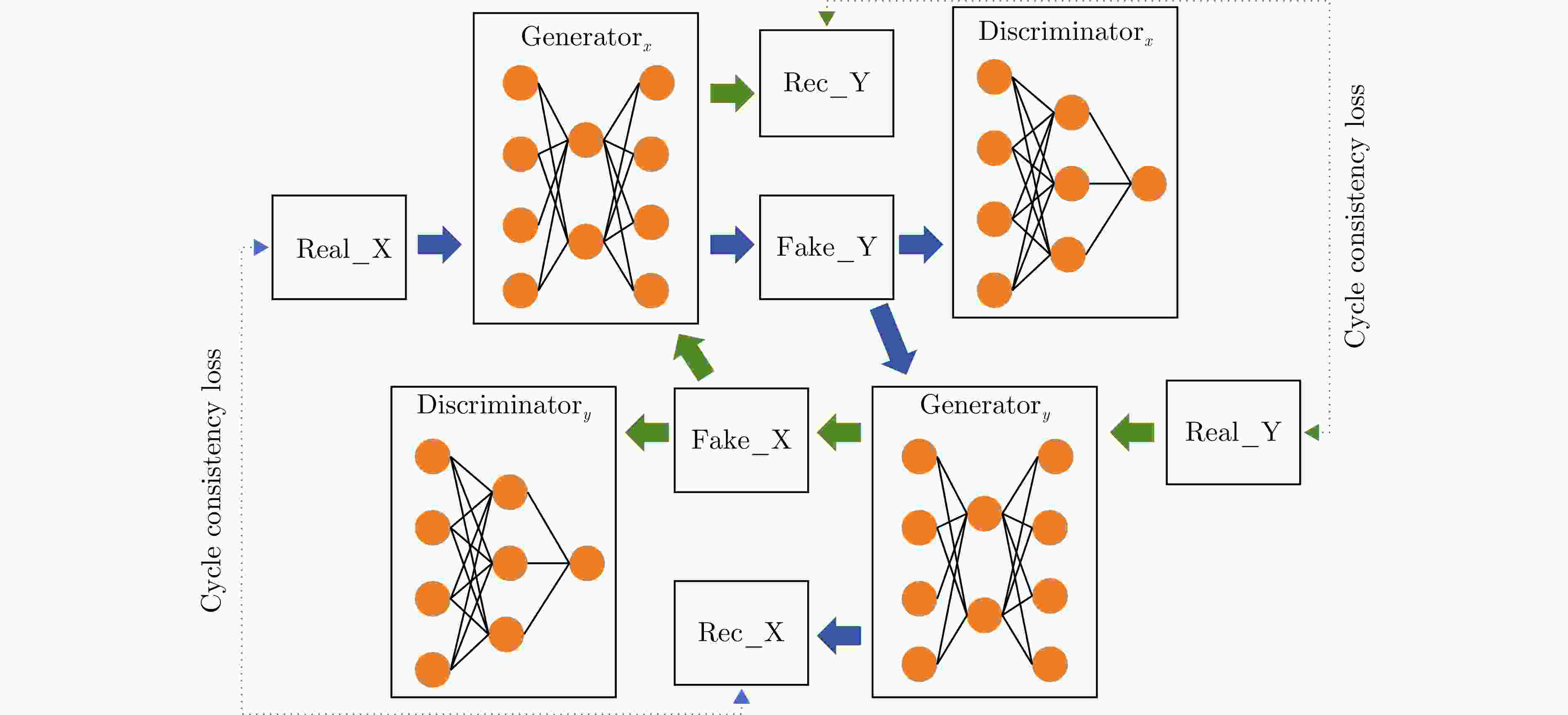

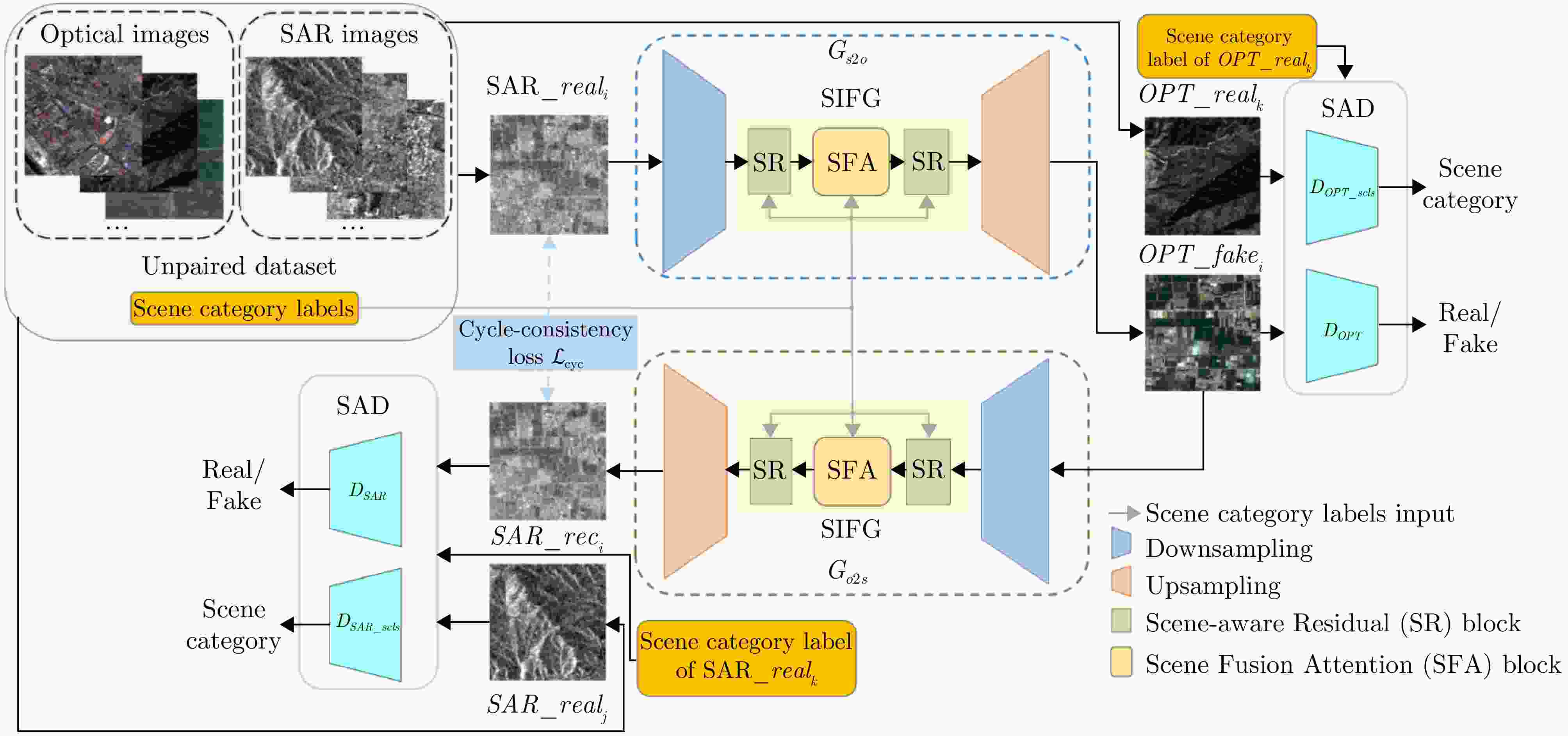

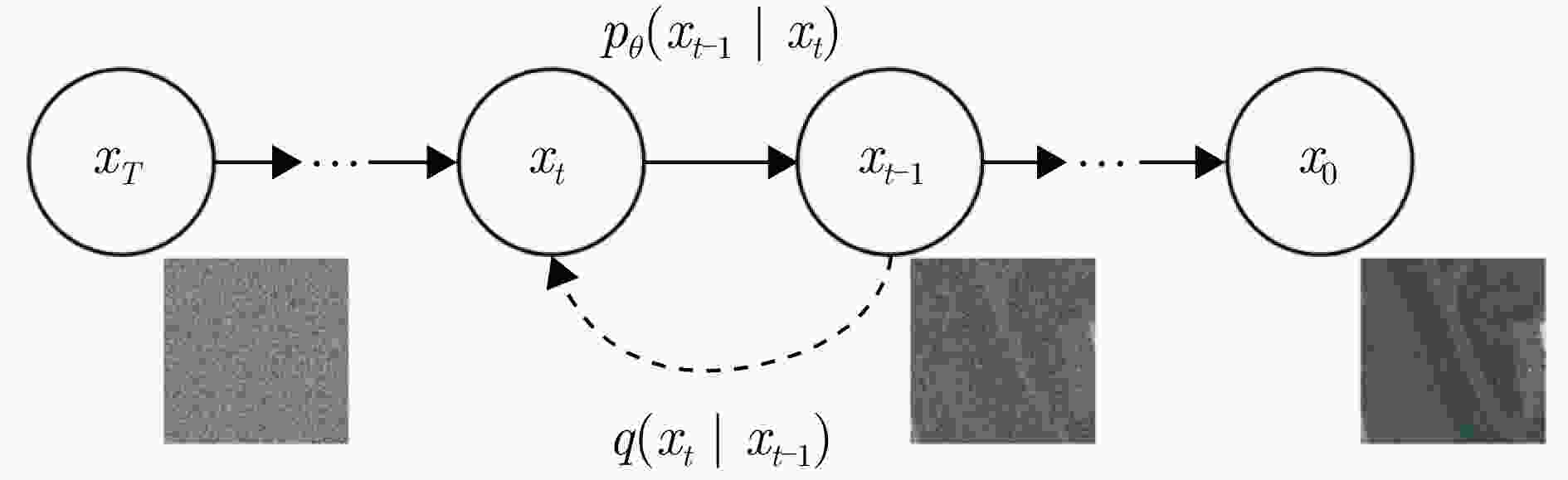

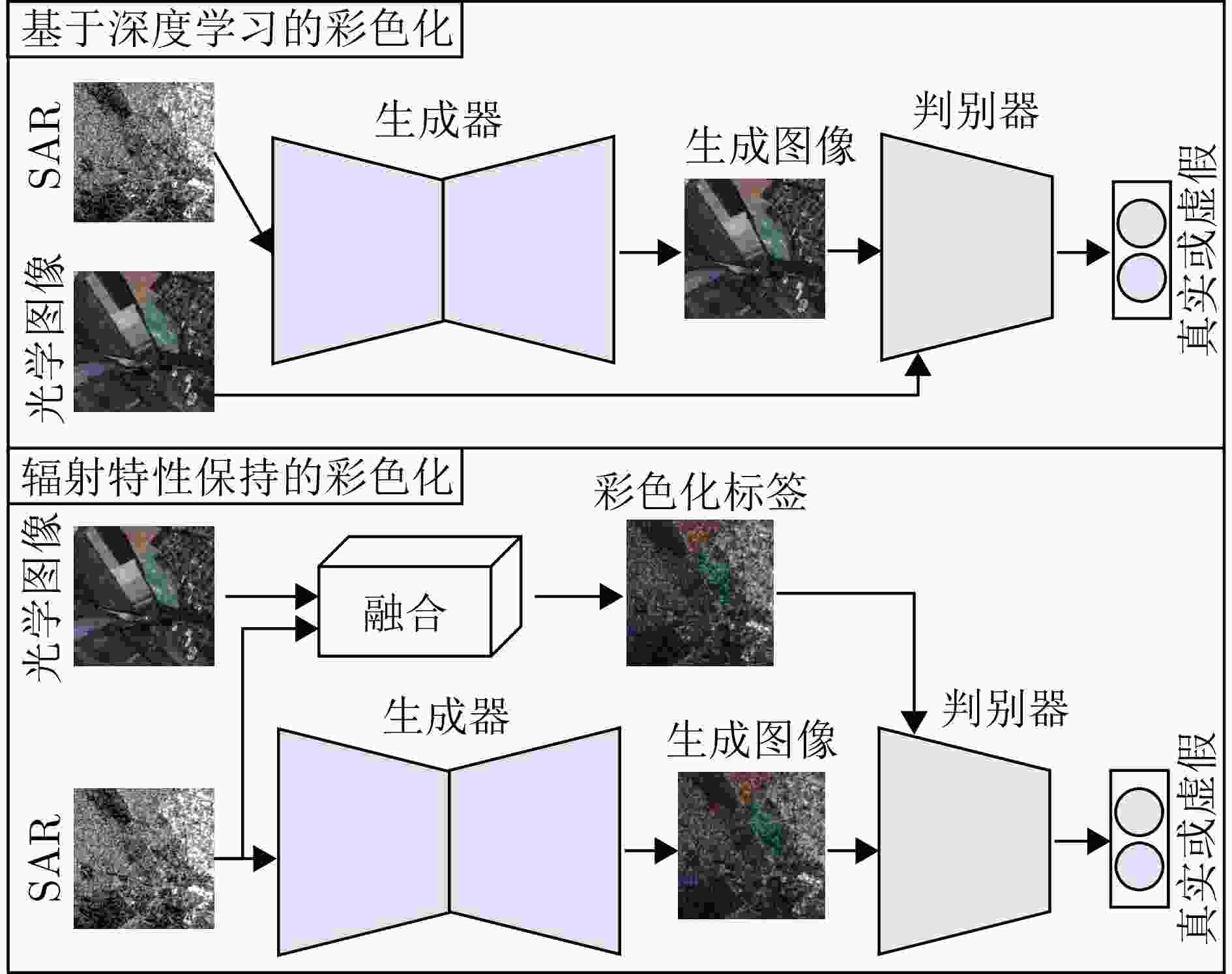

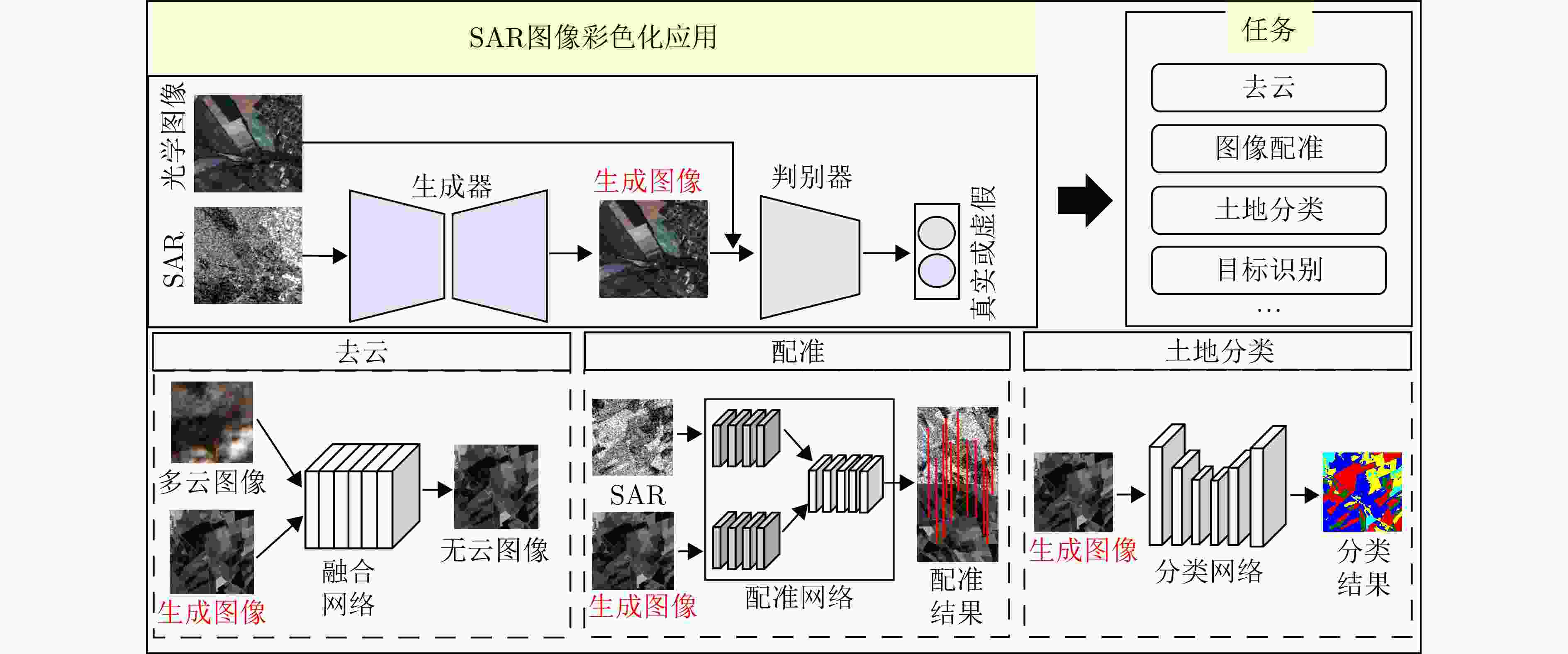

Abstract: Synthetic Aperture Radar (SAR) is a remote sensing technology that uses the synthetic aperture principle to achieve high-resolution microwave imaging, and SAR image colorization is a fundamental and crucial task in remote sensing. Unlike optical imaging, SAR is unaffected by clouds and fog, which enables all-weather observation of the Earth. However, owing to their imaging principle, SAR images are grayscale: they lack spectral information and have markedly reduced visual clarity. Numerous studies have therefore sought to improve the interpretability of SAR images by adding color information. This paper reviews existing SAR image colorization techniques and groups them into three categories: traditional SAR image colorization techniques, deep-learning-based SAR-to-Optical image colorization techniques, and SAR image colorization techniques based on radiometric property preservation. Finally, it summarizes their application scenarios and future development directions.


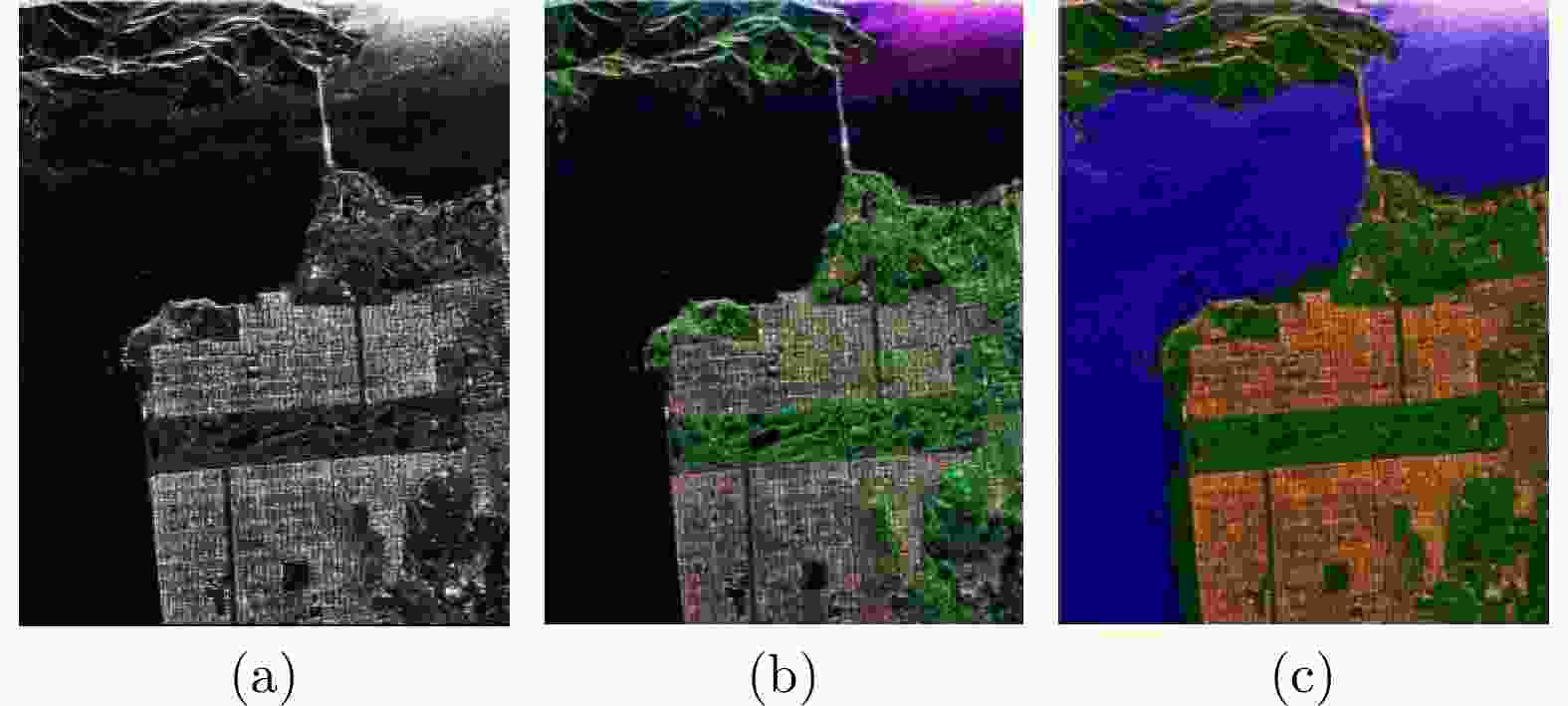

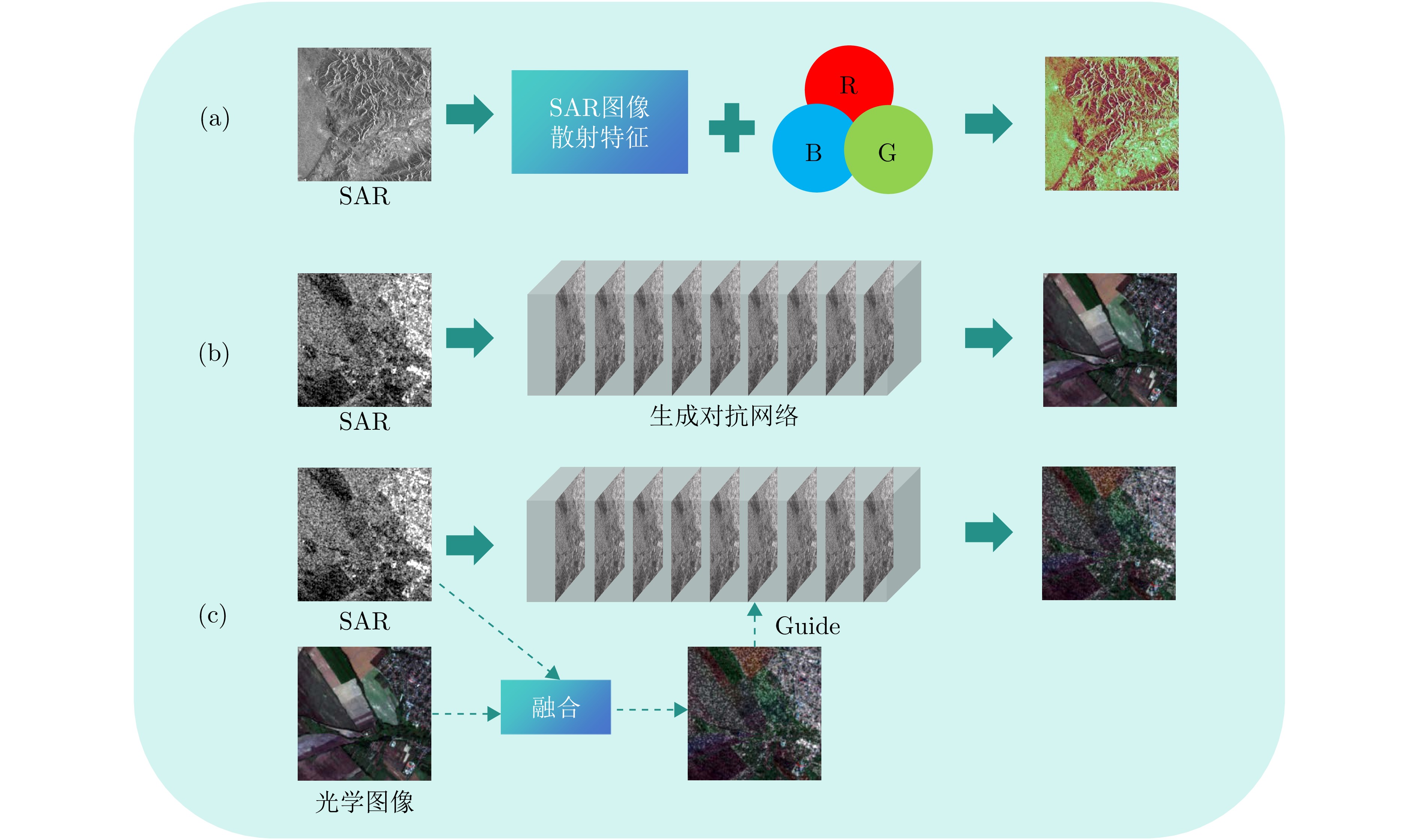

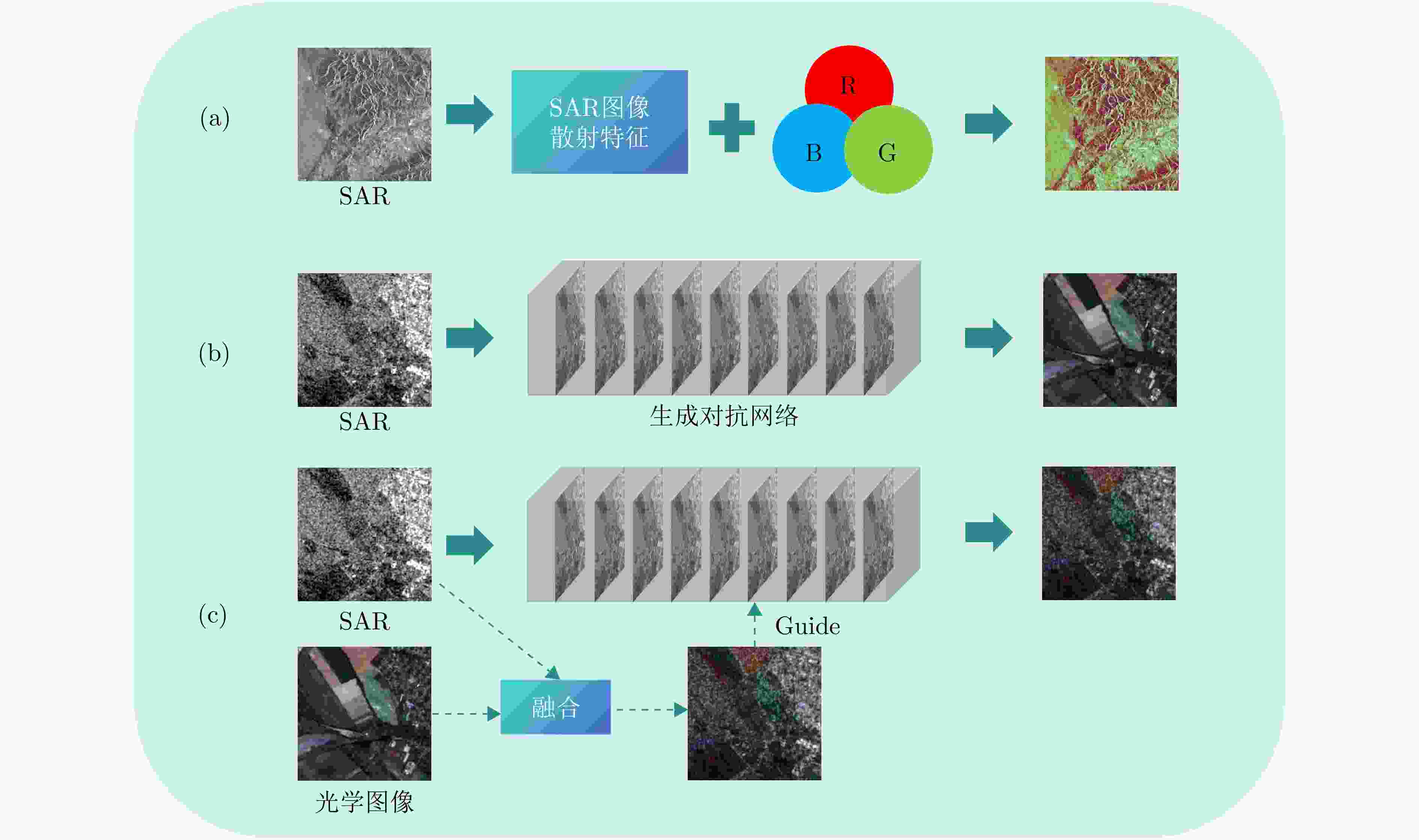

Figure 1. Main frameworks of the three types of SAR image colorization methods: (a) traditional SAR image colorization [10]; (b) deep-learning-based SAR-to-Optical image colorization; (c) SAR image colorization based on radiometric property preservation
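To make the "traditional" branch in Figure 1(a) more concrete, the following is a minimal sketch of generic pseudo-color coding: amplitudes are converted to decibels, clipped to a display range, and mapped through a piecewise-linear color ramp. It is not the perceptually uniform coding of [10]; the ramp control points, the dB range, and all function names are assumptions chosen only for illustration.

```python
import math

# Hypothetical colour ramp: (position in [0, 1], (R, G, B)) control points.
RAMP = [(0.0, (0, 0, 128)), (0.5, (0, 200, 0)), (1.0, (255, 255, 0))]


def to_decibels(amplitude, floor=1e-6):
    """Convert a linear amplitude to dB, guarding against log(0)."""
    return 20.0 * math.log10(max(amplitude, floor))


def normalize(value, low, high):
    """Linearly rescale value from [low, high] to [0, 1] and clip."""
    t = (value - low) / (high - low)
    return min(1.0, max(0.0, t))


def ramp_color(t, ramp=RAMP):
    """Interpolate an RGB triple for t in [0, 1] along the colour ramp."""
    for (t0, c0), (t1, c1) in zip(ramp, ramp[1:]):
        if t <= t1:
            w = (t - t0) / (t1 - t0)
            return tuple(round(a + w * (b - a)) for a, b in zip(c0, c1))
    return ramp[-1][1]


def pseudo_color(image, low_db=-25.0, high_db=0.0):
    """Map a 2-D list of amplitudes to a 2-D list of RGB triples."""
    return [
        [ramp_color(normalize(to_decibels(a), low_db, high_db)) for a in row]
        for row in image
    ]
```

Because the mapping is a fixed rule on intensity alone, it needs no training, but the resulting colors carry no land-cover semantics.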

Table 1. Summary of key SAR colorization techniques based on cGAN [31–53]

| Key innovation | Methods |
| --- | --- |
| Loss function | Modified cGAN[31], Modified Pix2Pix[32], cGAN based[33], SOPix2Pix[34] |
| Feature enhancement & Loss function | EAcGAN[35] |
| Feature enhancement | Atrous cGAN[36], CoIT cGAN[37] |
| Feature enhancement & Multi-scale discriminator | ICGAN[38], CFRWD-GAN[39], Improved cGAN[40], Swin cGAN[41], LICGAN[42], CFIGAN[43], ADD-Unet[44], HFGAN[45], INR-ECGAN[46], EDCGAN[47] |
| Vision transformer | HVT-cGAN[48], Hybrid cGAN[49], ViT cGAN[50] |
| Prior information | RIcGAN[51], LULC Pix2pix[52], GFTT[53] |

Table 2. Summary of key SAR colorization techniques based on CycleGAN[54–68]

| Key innovation | Methods |
| --- | --- |
| Loss function | S-CycleGAN[54], Improved CycleGAN[55], CycleGAN based[56] |
| Feature enhancement | GVAN[57], MSFGAN[58] |
| Feature enhancement & Loss function | CRAN[59], DCLGAN[60] |
| Feature enhancement & Modified discriminator | WFLM-GAN[61], FG-GAN[62], MS-GAN[63], S2MS-GAN[64] |
| Physical driven | S2O-TDN[65], S2O-NPDE[66], EPCGAN[67] |
| Data driven | Seg-CycleGAN[68] |

Table 5. Comprehensive performance comparison of colorization models

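| Method category | Core driving mechanism | Generation quality | Training difficulty | Physical fidelity | Inference speed |
| --- | --- | --- | --- | --- | --- |
| Traditional physical methods | Empirical rules and physical mapping | Poor semantics | Low (no training required) | High | Fast |
| cGAN-based | Paired-data-driven | Good spatial-structure alignment | Medium (heavily dependent on strictly paired data) | Low | Fast |
| CycleGAN-based | Unpaired-data-driven | Prone to spectral and structural distortion | High (risk of mode collapse) | Low | Fast |
| Diffusion-model-based | Progressive evolution of probability distributions | Rich and realistic high-frequency textures | High (computationally expensive) | Medium | Slow |
| Radiometric-property-preserving | Jointly driven by physical constraints and data | Balances color semantics and physical characteristics | High (requires designing a physical-property fusion method) | High | Medium |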
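The paired versus unpaired distinction in the comparison above can be read off the standard training objectives. As a sketch in generic notation (an assumption, not the notation of any method in Tables 1 and 2), let $x$ be a SAR image, $y$ an optical image, $G$ the SAR-to-optical generator, $F$ the optical-to-SAR generator, and $D$ a discriminator. A conditional GAN [19] with an added pixel-wise term needs the co-registered pair $(x, y)$:

$$
\mathcal{L}_{\text{cGAN}}(G, D) = \mathbb{E}_{x,y}\big[\log D(x, y)\big] + \mathbb{E}_{x}\big[\log\big(1 - D(x, G(x))\big)\big],
\qquad
\mathcal{L}_{L1}(G) = \mathbb{E}_{x,y}\big[\lVert y - G(x) \rVert_1\big]
$$

whereas the cycle-consistency term of CycleGAN [20] only compares each image with its own round-trip reconstruction, so no pairing is required:

$$
\mathcal{L}_{\text{cyc}}(G, F) = \mathbb{E}_{x}\big[\lVert F(G(x)) - x \rVert_1\big] + \mathbb{E}_{y}\big[\lVert G(F(y)) - y \rVert_1\big]
$$

The individual methods listed in the tables typically modify or extend these terms, so the expressions should be taken as the common baseline only.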

Table 6. Quantitative performance improvement of different downstream tasks via colorization

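Table 7. Commonly used public datasets for image colorization

| Type | Dataset | Sensors (SAR/optical) | Polarization | Resolution (m) | Quantity | Scenes |
| --- | --- | --- | --- | --- | --- | --- |
| Low resolution | SEN1-2[123] | Sentinel-1/Sentinel-2 | Single (VV) | 10 | About 280,000 pairs | Global coverage across four seasons |
| Low resolution | BigEarthNet-MM[124] | Sentinel-1/Sentinel-2 | Dual (VV, VH) | 10 | About 590,000 | Landscapes of 10 European countries in different seasons |
| Low resolution | WHU-OPT-SAR[125] | Sentinel-1/Sentinel-2 | Dual (VV, VH) | 10 | 32 large scenes | 32 Chinese cities |
| Low resolution | SEN12MS-CR[126] | Sentinel-1/Sentinel-2 | Dual (VV, VH) | 10 | About 180,000 pairs | Global coverage across four seasons |
| High resolution | QXS-SAROPT[127] | GF-3/Google Earth | Single (VV/HH) | 1 | About 20,000 pairs | Three port cities: San Diego, Shanghai, and Qingdao |
| High resolution | SAR2Opt[128] | TerraSAR-X/Google Earth | Single (VV/HH) | 1 | About 2,000 pairs | 10 cities across Asia, North America, Europe, and other regions |
| High resolution | MSAW[129] | Capella Space/WorldView-2 | Quad (VV, VH, HV, HH) | 0.5 | 120 km² | Port of Rotterdam, Netherlands |
| High resolution | SARptical[130] | TerraSAR-X/UltraCAM | Single (VV/HH) | 1 | About 10,000 pairs | Mainly urban Berlin |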

Table 8. Evaluation metrics for mainstream colorization methods

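| Method | Journal | SEN1-2 PSNR (dB) | SEN1-2 SSIM | SEN1-2 FID | Innovation |
| --- | --- | --- | --- | --- | --- |
| HVT-cGAN[48] | TGRS 2025 | 15.97 | 0.2830 | 99.98 | The generator uses a convolutional stem structure; parallel CNN and ViT branches extract information and map information, respectively |
| Hybrid cGAN[49] | TGRS 2022 | 21.48 | 0.5106 | 68.32 | The generator contains CNN and Transformer branches and uses improved residual blocks for extraction, fusing local and global features |
| GFTT[53] | J-STARS 2025 | 12.61 | 0.1214 | 95.79 | Proposes a new tokenization method, GIT, targeting the imaging-style characteristics of ground materials in optical images |
| WFLM-GAN[61] | TGRS 2022 | 26.19 | 0.8699 | 50.49 | The generator first learns a mapping from SAR images to wavelet features, then refines the content through grayscale image reconstruction |
| MS-GAN[63] | J-STARS 2023 | 13.09 | 0.2211 | 128.70 | Designs MRG and MFD modules to characterize complex scenes in SAR images, giving strong scene-memory capability |
| Parallel-GAN[73] | TGRS 2022 | 18.29 | 0.3838 | 87.60 | Adopts a parallel generative adversarial architecture comprising a SAR-to-optical image translation subnetwork and an optical image reconstruction subnetwork |
| SFDiff[86] | TGRS 2024 | 16.14 | 0.4000 | - | Uses SCPE to derive prior information from SAR images and an SFCL module to control feature-level conditions during denoising |
| CM-Diffusion[92] | J-STARS 2024 | 15.86 | 0.3510 | - | Designs a Brownian-bridge diffusion structure with color attention that directly learns the color correlation and translation between the two image domains through a bidirectional diffusion process and a color attention mechanism |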
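For readers unfamiliar with the PSNR and SSIM columns reported above, the sketch below shows how the two scores can be computed for a single-channel image pair. It is a dependency-free illustration and an assumption on our part, not the evaluation code behind the reported numbers: images are plain nested lists, and the SSIM variant uses whole-image statistics rather than the usual sliding Gaussian window, so its values will differ from windowed implementations.

```python
import math


def _flatten(image):
    """Flatten a 2-D list of pixel values into a 1-D list."""
    return [p for row in image for p in row]


def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    ref, gen = _flatten(reference), _flatten(generated)
    if len(ref) != len(gen):
        raise ValueError("images must have the same number of pixels")
    mse = sum((r - g) ** 2 for r, g in zip(ref, gen)) / len(ref)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def global_ssim(reference, generated, max_value=255.0):
    """SSIM from whole-image means, variances and covariance (no windowing)."""
    ref, gen = _flatten(reference), _flatten(generated)
    n = len(ref)
    mu_r, mu_g = sum(ref) / n, sum(gen) / n
    var_r = sum((r - mu_r) ** 2 for r in ref) / n
    var_g = sum((g - mu_g) ** 2 for g in gen) / n
    cov = sum((r - mu_r) * (g - mu_g) for r, g in zip(ref, gen)) / n
    c1 = (0.01 * max_value) ** 2  # stabilising constants from the SSIM definition
    c2 = (0.03 * max_value) ** 2
    return ((2 * mu_r * mu_g + c1) * (2 * cov + c2)) / (
        (mu_r ** 2 + mu_g ** 2 + c1) * (var_r + var_g + c2)
    )
```

For example, with `ref = [[0, 255], [255, 0]]` and `gen = [[0, 255], [255, 10]]`, the mean squared error is 25, so `psnr(ref, gen)` equals `10 * log10(255**2 / 25)`.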

Table 9. Evaluation metrics for the reproduction of some methods

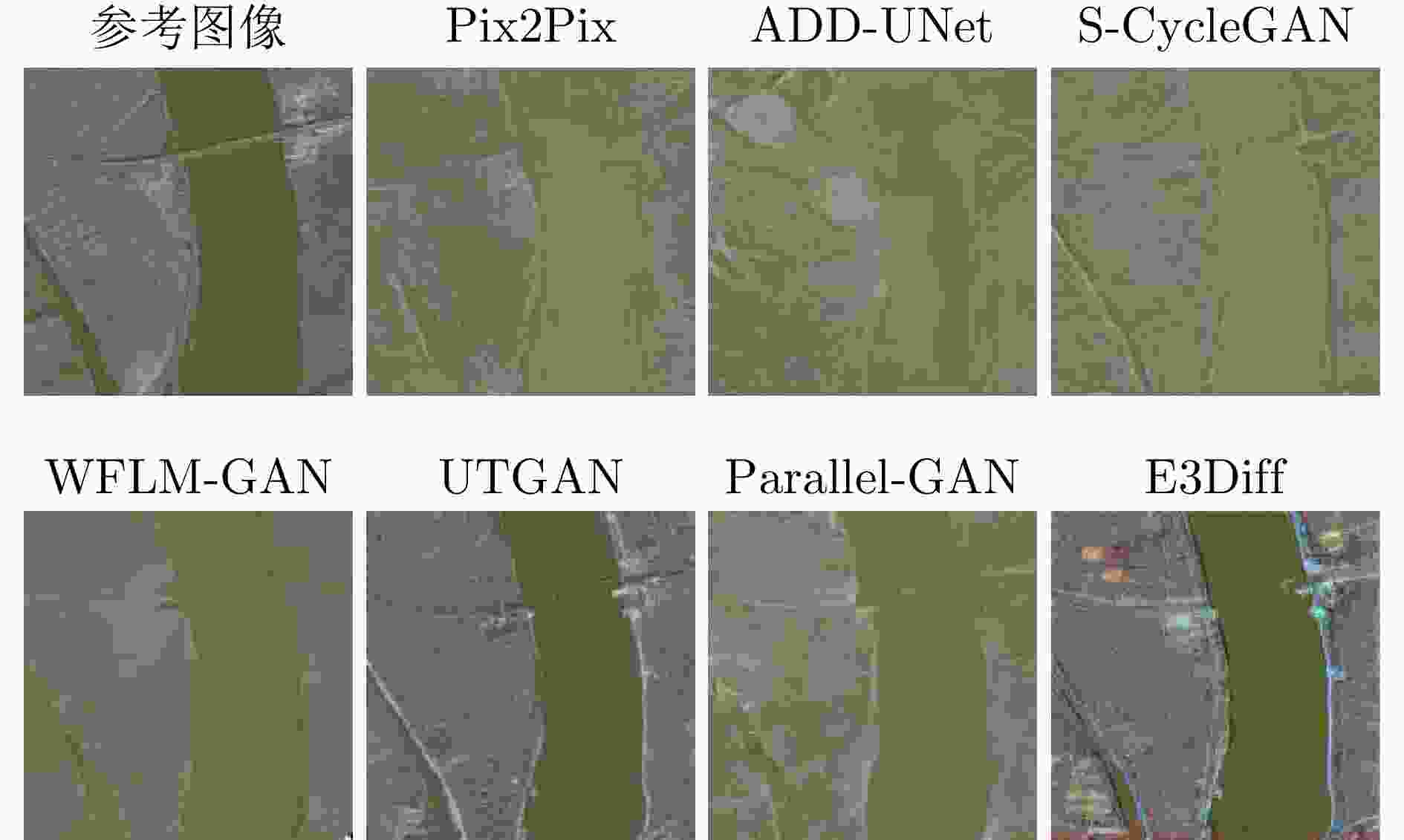

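| Method | Journal | WHU-OPT-SAR PSNR (dB) | WHU-OPT-SAR SSIM | WHU-OPT-SAR FID | Innovation |
| --- | --- | --- | --- | --- | --- |
| ADD-UNet[44] | RS 2023 | 27.6824 | 0.5384 | 288.6572 | Introduces a dual-decoder mechanism to aggregate multi-scale features and produce sharper edges |
| S-CycleGAN[54] | IEEE Access 2019 | 27.4453 | 0.4672 | 201.2482 | Integrates a paired L1 loss into CycleGAN to balance structural consistency and pixel accuracy |
| WFLM-GAN[61] | TGRS 2022 | **28.3352** | **0.6058** | 225.3457 | Performs the translation in the wavelet domain to separate high-frequency noise from low-frequency structure |
| UTGAN[70] | JAIHC 2023 | 27.8617 | 0.4251 | 182.3694 | Combines U-Net (for structure) with T-Net (for texture compensation) to enhance detail |
| Parallel-GAN[73] | TGRS 2022 | 28.1284 | 0.5835 | 164.2157 | Enforces implicit hierarchical feature constraints through an auxiliary "companion network" |
| E3Diff[91] | GRSL 2024 | 28.2396 | 0.5421 | **152.3472** | An efficient model that uses distillation for end-to-end diffusion, suited to real-time inference based on SAR spatial priors |
| cGAN-based[131] | IGARSS 2018 | 27.1573 | 0.5192 | 301.5517 | An early application of cGAN with a coarse-to-fine generator structure |

Note: Bold values indicate the best result.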
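Unlike PSNR and SSIM, the FID column is a distribution-level score, so lower values are better. As a reminder of the standard definition (the symbols below are our notation, not the source's), let $(\mu_r, \Sigma_r)$ and $(\mu_g, \Sigma_g)$ be the mean and covariance of Inception-network features extracted from the real optical images and from the generated images, respectively:

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\left( \Sigma_r \Sigma_g \right)^{1/2} \right)
$$

Because the statistics are estimated over a whole test set, FID values are generally comparable only when the same dataset split and feature extractor are used.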

[1] WU Jin. On the development of synthetic aperture ladar imaging[J]. Journal of Radars, 2012, 1(4): 353–360. doi: 10.3724/SP.J.1300.2012.20076.

[2] XU Feng, WANG Haipeng, and JIN Yaqiu. Deep learning as applied in SAR target recognition and terrain classification[J]. Journal of Radars, 2017, 6(2): 136–148. doi: 10.12000/JR16130.

[3] ZHU Xiaoxiang, TUIA D, MOU Lichao, et al. Deep learning in remote sensing: A comprehensive review and list of resources[J]. IEEE Geoscience and Remote Sensing Magazine, 2017, 5(4): 8–36. doi: 10.1109/MGRS.2017.2762307.

[4] ZHANG Yuanzhi, ZHANG Hongsheng, and LIN Hui. Improving the impervious surface estimation with combined use of optical and SAR remote sensing images[J]. Remote Sensing of Environment, 2014, 141: 155–167. doi: 10.1016/j.rse.2013.10.028.

[5] KALECINSKI N I, SKAKUN S, TORBICK N, et al. Crop yield estimation at different growing stages using a synergy of SAR and optical remote sensing data[J]. Science of Remote Sensing, 2024, 10: 100153. doi: 10.1016/j.srs.2024.100153.

[6] YUN Ye, LÜ Xiaolei, FU Xikai, et al. Application of spaceborne interferometric synthetic aperture radar to geohazard monitoring[J]. Journal of Radars, 2020, 9(1): 73–85. doi: 10.12000/JR20007.

[7] LIU Chenfang, SUN Yulin, XU Yanjie, et al. A review of optical and SAR image deep feature fusion in semantic segmentation[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2024, 17: 12910–12930. doi: 10.1109/JSTARS.2024.3424831.

[8] DU Lan, WANG Zhaocheng, WANG Yan, et al. Survey of research progress on target detection and discrimination of single-channel SAR images for complex scenes[J]. Journal of Radars, 2020, 9(1): 34–54. doi: 10.12000/JR19104.

[9] ZHANG Fan, MENG Fanle, MA Fei, et al. Multi-temporal polarimetric synthetic aperture radar salt field regional classification based on dominant scattering temporal entropy[J]. Journal of Radars, 2026, 15(2): 523–542. doi: 10.12000/JR25087.

[10] ZHOU Xiaodong, ZHANG Chunhua, and LI Song. A perceptive uniform pseudo-color coding method of SAR images[C]. 2006 CIE International Conference on Radar, Shanghai, China, 2006: 1–4. doi: 10.1109/ICR.2006.343253.

[11] FENG Zicheng, LIU Xiaolin, and PEI Bingzhi. Pseudo-color coding method for high-dynamic single-polarization SAR images[C]. 9th International Conference on Graphic and Image Processing (ICGIP 2017), Qingdao, China, 2018, 10615: 1222–1228. doi: 10.1117/12.2302684.

[12] UHLMANN S, KIRANYAZ S, and GABBOUJ M. Polarimetric SAR classification using visual color features extracted over pseudo color images[C]. 2013 IEEE International Geoscience and Remote Sensing Symposium-IGARSS, Melbourne, Australia, 2013: 1999–2002. doi: 10.1109/IGARSS.2013.6723201.

[13] UHLMANN S and KIRANYAZ S. Classification of dual- and single polarized SAR images by incorporating visual features[J]. ISPRS Journal of Photogrammetry and Remote Sensing, 2014, 90: 10–22. doi: 10.1016/j.isprsjprs.2014.01.005.

[14] LI Zhijiang, LIU Jianping, and HUANG Jing. Dynamic range compression and pseudo-color presentation based on Retinex for SAR images[C]. 2008 International Conference on Computer Science and Software Engineering, Wuhan, China, 2008: 257–260. doi: 10.1109/CSSE.2008.1459.

[15] UHLMANN S and KIRANYAZ S. Integrating color features in polarimetric SAR image classification[J]. IEEE Transactions on Geoscience and Remote Sensing, 2014, 52(4): 2197–2216. doi: 10.1109/TGRS.2013.2258675.

[16] DENG Qiming, CHEN Yilun, ZHANG Weijie, et al. Colorization for polarimetric SAR image based on scattering mechanisms[C]. 2008 Congress on Image and Signal Processing, Sanya, China, 2008: 697–701. doi: 10.1109/CISP.2008.366.

[17] LI Zewen, LIU Fan, YANG Wenjie, et al. A survey of convolutional neural networks: Analysis, applications, and prospects[J]. IEEE Transactions on Neural Networks and Learning Systems, 2022, 33(12): 6999–7019. doi: 10.1109/TNNLS.2021.3084827.

[18] GOODFELLOW I, POUGET-ABADIE J, MIRZA M, et al. Generative adversarial networks[J]. Communications of the ACM, 2020, 63(11): 139–144. doi: 10.1145/3422622.

[19] MIRZA M and OSINDERO S. Conditional generative adversarial nets[EB/OL]. https://arxiv.org/abs/1411.1784, 2014.

[20] ZHU Junyan, PARK T, ISOLA P, et al. Unpaired image-to-image translation using cycle-consistent adversarial networks[C]. IEEE International Conference on Computer Vision, Venice, Italy, 2017: 2242–2251. doi: 10.1109/ICCV.2017.244.

[21] HO J, JAIN A, and ABBEEL P. Denoising diffusion probabilistic models[C]. 34th International Conference on Neural Information Processing Systems, Vancouver, Canada, 2020: 574.

[22] EVANS D L, FARR T G, VAN ZYL J J, et al. Radar polarimetry: Analysis tools and applications[J]. IEEE Transactions on Geoscience and Remote Sensing, 1988, 26(6): 774–789. doi: 10.1109/36.7709.

[23] JORDAN R L, HUNEYCUTT B L, and WERNER M. The SIR-C/X-SAR synthetic aperture radar system[J]. Proceedings of the IEEE, 1991, 79(6): 827–838. doi: 10.1109/5.90161.

[24] VAN ZYL J J. Unsupervised classification of scattering behavior using radar polarimetry data[J]. IEEE Transactions on Geoscience and Remote Sensing, 1989, 27(1): 36–45. doi: 10.1109/36.20273.

[25] CLOUDE S R and POTTIER E. An entropy based classification scheme for land applications of polarimetric SAR[J]. IEEE Transactions on Geoscience and Remote Sensing, 1997, 35(1): 68–78. doi: 10.1109/36.551935.

[26] POTTIER E, LEE J S, and FERRO-FAMIL L. Advanced concepts in polarimetry – Part 1 (Polarimetric target description, speckle filtering and decomposition theorems)[R]. RTO-EN-SET-081, 2005.

[27] SWAIN M J and BALLARD D H. Color indexing[J]. International Journal of Computer Vision, 1991, 7(1): 11–32. doi: 10.1007/BF00130487.

[28] MANJUNATH B S, OHM J R, VASUDEVAN V V, et al. Color and texture descriptors[J]. IEEE Transactions on Circuits and Systems for Video Technology, 2001, 11(6): 703–715. doi: 10.1109/76.927424.

[29] LIU Hongying, WANG Shuang, HOU Biao, et al. Unsupervised classification of polarimetric SAR images integrating color features[C]. 2014 IEEE Geoscience and Remote Sensing Symposium, Quebec City, Canada, 2014: 2762–2765. doi: 10.1109/IGARSS.2014.6947048.

[30] LIU P, WANG L, RANJAN R, et al. A survey on active deep learning: From model driven to data driven[J]. ACM Computing Surveys (CSUR), 2022, 54(10s): 1–34. doi: 10.1145/3510414.

[31] LI Yu, FU Randi, MENG Xiangchao, et al. A SAR-to-Optical image translation method based on conditional generation adversarial network (cGAN)[J]. IEEE Access, 2020, 8: 60338–60343. doi: 10.1109/ACCESS.2020.2977103.

[32] ZUO Zongcheng and LI Yuanxiang. A SAR-to-Optical image translation method based on PIX2PIX[C]. 2021 IEEE International Geoscience and Remote Sensing Symposium IGARSS, Brussels, Belgium, 2021: 3026–3029. doi: 10.1109/IGARSS47720.2021.9555111.

[33] HU Pengcheng, WANG Yong, LIU Yifan, et al. Improved cGAN for SAR-to-Optical image translation[C]. 2024 43rd Chinese Control Conference (CCC), Kunming, China, 2024: 7675–7680. doi: 10.23919/CCC63176.2024.10661422.

[34] CHENG Fujian, KANG Yashu, CHEN Chunlei, et al. A strategy optimized Pix2pix approach for SAR-to-Optical image translation task[EB/OL]. https://arxiv.org/abs/2206.13042, 2022.

[35] YU Tianzhu, ZHANG Jiexin, and ZHOU Jianjiang. Conditional GAN with effective attention for SAR-to-Optical image translation[C]. 2021 3rd International Conference on Advances in Computer Technology, Information Science and Communication (CTISC), Shanghai, China, 2021: 7–11. doi: 10.1109/CTISC52352.2021.00009.

[36] TURNES J N, CASTRO J D B, TORRES D L, et al. Atrous cGAN for SAR to optical image translation[J]. IEEE Geoscience and Remote Sensing Letters, 2022, 19: 4003905. doi: 10.1109/IGARSS.2018.8518719.

[37] LUO Jinjin, YANG Xuezhi, LIANG Hongbo, et al. Contextual inference feature extraction approach based on generative adversarial network for SAR-to-Optical image translation[C]. IGARSS 2024–2024 IEEE International Geoscience and Remote Sensing Symposium, Athens, Greece, 2024: 7334–7337. doi: 10.1109/IGARSS53475.2024.10642046.

[38] YANG Xi, ZHAO Jingyi, WEI Ziyu, et al. SAR-to-Optical image translation based on improved CGAN[J]. Pattern Recognition, 2022, 121: 108208. doi: 10.1016/j.patcog.2021.108208.

[39] WEI Juan, ZOU Huanxin, SUN Li, et al. CFRWD-GAN for SAR-to-Optical image translation[J]. Remote Sensing, 2023, 15(10): 2547. doi: 10.3390/rs15102547.

[40] ZHAN Tao, BIAN Jiarong, YANG Jing, et al. Improved conditional generative adversarial networks for SAR-to-Optical image translation[C]. 6th Chinese Conference on Pattern Recognition and Computer Vision (PRCV), Xiamen, China, 2023: 279–291. doi: 10.1007/978-981-99-8462-6_23.

[41] KONG Yingying, LIU Siyuan, and PENG Xiangyang. Multi-scale translation method from SAR to optical remote sensing images based on conditional generative adversarial network[J]. International Journal of Remote Sensing, 2022, 43(8): 2837–2860. doi: 10.1080/01431161.2022.2072179.

[42] GUO Zhe, GUO Haojie, LIU Xuewen, et al. SAR2color: Learning imaging characteristics of SAR images for SAR-to-Optical transformation[J]. Remote Sensing, 2022, 14(15): 3740. doi: 10.3390/rs14153740.

[43] WEI Juan, ZOU Huanxin, SUN Li, et al. Generative adversarial network for SAR-to-Optical image translation with feature cross-fusion inference[C]. IGARSS 2022–2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 2022: 6025–6028. doi: 10.1109/IGARSS46834.2022.9884166.

[44] LUO Qingli, LI Hong, CHEN Zhiyuan, et al. Add-UNet: An adjacent dual-decoder UNET for SAR-to-Optical translation[J]. Remote Sensing, 2023, 15(12): 3125. doi: 10.3390/rs15123125.

[45] YU Ning, MA Ailong, ZHONG Yanfei, et al. HFGAN: A heterogeneous fusion generative adversarial network for SAR-to-Optical image translation[C]. IGARSS 2022–2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 2022: 2864–2867. doi: 10.1109/IGARSS46834.2022.9883519.

[46] FENG Chenguo, LIU Yang, WANG Nan, et al. INR-ECGAN: An enhanced conditional GAN with Implicit neural representation for SAR-to-Optical image translation[C]. 2024 China Automation Congress (CAC), Qingdao, China, 2024: 4358–4363. doi: 10.1109/CAC63892.2024.10864577.

[47] CHOUHAN A, JINDAL N, SUR A, et al. EDCGAN: Encoder decoder based conditional GAN for SAR to optical image translation[C]. The 13th Indian Conference on Computer Vision, Graphics and Image Processing, Gandhinagar, India, 2022: 38. doi: 10.1145/3571600.3571639.

[48] ZHAO Wenbo, JIANG Nana, LIAO Xiaoxin, et al. HVT-cGAN: Hybrid vision transformer cGAN for SAR-to-Optical image translation[J]. IEEE Transactions on Geoscience and Remote Sensing, 2025, 63: 5202017. doi: 10.1109/TGRS.2024.3523040.

[49] WANG Zhaobin, MA Yikun, and ZHANG Yaonan. Hybrid cGAN: Coupling global and local features for SAR-to-Optical image translation[J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 5236016. doi: 10.1109/TGRS.2022.3212208.

[50] PARK S, LEE H, and LEE S. SAR-to-Optical image translation using vision transformer-based CGAN[J]. IEEE Sensors Journal, 2025, 25(10): 18503–18514. doi: 10.1109/JSEN.2025.3555933.

[51] DOI K, SAKURADA K, ONISHI M, et al. GAN-based SAR-to-Optical image translation with region information[C]. IGARSS 2020–2020 IEEE International Geoscience and Remote Sensing Symposium, Waikoloa, USA, 2020: 2069–2072. doi: 10.1109/IGARSS39084.2020.9323085.

[52] FU Xuanchao, KOUYAMA T, SEKI S, et al. Advanced SAR-to-Optical image translation techniques using Jaxa’s high-resolution land-use and land-cover map[C]. IGARSS 2024–2024 IEEE International Geoscience and Remote Sensing Symposium, Athens, Greece, 2024: 7367–7370. doi: 10.1109/IGARSS53475.2024.10641911.

[53] LIANG Hongbo, YANG Xuezhi, YANG Xiangyu, et al. GFTT: Geographical feature tokenization transformer for SAR-to-Optical image translation[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2025, 18: 2975–2989. doi: 10.1109/JSTARS.2024.3523274.

[54] WANG Lei, XU Xin, YU Yue, et al. SAR-to-Optical image translation using supervised cycle-consistent adversarial networks[J]. IEEE Access, 2019, 7: 129136–129149. doi: 10.1109/ACCESS.2019.2939649.

[55] HWANG J, YU Chushi, and SHIN Y. SAR-to-Optical image translation using SSIM and perceptual loss based cycle-consistent GAN[C]. 2020 International Conference on Information and Communication Technology Convergence (ICTC), Jeju, Korea (South), 2020: 191–194. doi: 10.1109/ICTC49870.2020.9289381.

[56] SIDDIQUI A A, K S P, and AMBASTHA S. Converting SAR images to optical images using CycleGAN[C]. 2024 2nd International Conference on Emerging Trends in Information Technology and Engineering (ICETITE), Vellore, India, 2024: 1–5. doi: 10.1109/ic-ETITE58242.2024.10493784.

[57] SHI Hao, ZHANG Bocheng, WANG Yupei, et al. SAR-to-Optical image translating through generate-validate adversarial networks[J]. IEEE Geoscience and Remote Sensing Letters, 2022, 19: 4506905. doi: 10.1109/LGRS.2022.3168391.

[58] CHEN Yongkang, ZHU Zuguo, HUANG Yan, et al. MSF: A multi-scale fusion generative adversarial network for SAR-to-Optical image translation[C]. IGARSS 2024–2024 IEEE International Geoscience and Remote Sensing Symposium, Athens, Greece, 2024: 9058–9061. doi: 10.1109/IGARSS53475.2024.10640486.

[59] FU Shilei, XU Feng, and JIN Yaqiu. Reciprocal translation between SAR and optical remote sensing images with cascaded-residual adversarial networks[J]. Science China Information Sciences, 2021, 64(2): 122301. doi: 10.1007/s11432-020-3077-5.

[60] HWANG J and SHIN Y. SAR-to-Optical image translation using SSIM loss based unpaired GAN[C]. 2022 13th International Conference on Information and Communication Technology Convergence (ICTC), Jeju Island, Korea, Republic of, 2022: 917–920. doi: 10.1109/ICTC55196.2022.9952649.

[61] LI Huihui, GU Cang, WU Dongqing, et al. Multiscale generative adversarial network based on wavelet feature learning for SAR-to-Optical image translation[J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 5236115. doi: 10.1109/TGRS.2022.3211415.

[62] YANG Xing, WANG Zihan, ZHAO Jingyi, et al. FG-GAN: A fine-grained generative adversarial network for unsupervised SAR-to-Optical image translation[J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 5621211. doi: 10.1109/TGRS.2022.3165371.

[63] GUO Zhe, ZHANG Zhibo, CAI Qinglin, et al. MS-GAN: Learn to memorize scene for unpaired SAR-to-Optical image translation[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2024, 17: 11467–11484. doi: 10.1109/JSTARS.2024.3411691.

[64] LIU Yang, HAN Qingcen, YANG Hong, et al. High-resolution SAR-to-multispectral image translation based on S2MS-GAN[J]. Remote Sensing, 2024, 16(21): 4045. doi: 10.3390/rs16214045.

[65] ZHANG Mingjin, ZHANG Peng, ZHANG Yuhan, et al. SAR-to-Optical image translation via an interpretable network[J]. Remote Sensing, 2024, 16(2): 242. doi: 10.3390/rs16020242.

[66] ZHANG Mingjin, HE Chengyu, ZHANG Jing, et al. SAR-to-Optical image translation via neural partial differential equations[C]. The 31st International Joint Conference on Artificial Intelligence, Vienna, Austria, 2022: 1644–1650.

[67] GUO Jie, HE Chengyu, ZHANG Mingjin, et al. Edge-preserving convolutional generative adversarial networks for SAR-to-Optical image translation[J]. Remote Sensing, 2021, 13(18): 3575. doi: 10.3390/rs13183575.

[68] ZHANG Hannuo, LI Huihui, LIN Jiarui, et al. Seg-CycleGAN: SAR-to-Optical image translation guided by a downstream task[J]. IEEE Geoscience and Remote Sensing Letters, 2025, 22: 4005205. doi: 10.1109/LGRS.2025.3538868.

[69] ZHANG Jiexin, ZHOU Jianjiang, and LU Xiwen. Feature-guided SAR-to-Optical image translation[J]. IEEE Access, 2020, 8: 70925–70937. doi: 10.1109/ACCESS.2020.2987105.

[70] LUO Yi and PI Dechang. SAR-to-Optical image translation for quality enhancement[J]. Journal of Ambient Intelligence and Humanized Computing, 2023, 14(8): 9985–10000. doi: 10.1007/s12652-021-03665-0.

[71] BRALET A, ATTO A M, CHANUSSOT J, et al. Deep learning of radiometrical and geometrical SAR distorsions for image modality translations[C]. 2022 IEEE International Conference on Image Processing (ICIP), Bordeaux, France, 2022: 1766–1770. doi: 10.1109/ICIP46576.2022.9897713.

[72] YAN Weidong, YAN Pei, and CAO Li. Domain transfer net based on U-Net and transformer for synthetic aperture radar-to-optical image translation[J]. Journal of Applied Remote Sensing, 2024, 18(4): 046509. doi: 10.1117/1.JRS.18.046509.

[73] WANG Haixia, ZHANG Zhigang, HU Zhanyi, et al. SAR-to-Optical image translation with hierarchical latent features[J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 5233812. doi: 10.1109/TGRS.2022.3200996.

[74] PAN Yue, KHAN I A, and MENG Han. SAR-to-Optical image translation using multi-stream deep ResCNN of information reconstruction[J]. Expert Systems with Applications, 2023, 224: 120040. doi: 10.1016/j.eswa.2023.120040.

[75] HE Jingfei, MAO Yongfei, ZHAO Liangbo, et al. A dual network approach with enhanced feature guidance for SAR-to-Optical image translation[C]. 2024 IEEE International Conference on Signal, Information and Data Processing (ICSIDP), Zhuhai, China, 2024: 1–6. doi: 10.1109/ICSIDP62679.2024.10868756.

[76] ZHOU Hao, XIAO Xiao, and LU Yilong. Multi-modality translation from SAR image to optical image for remote sensing applications[C]. 2021 7th Asia-Pacific Conference on Synthetic Aperture Radar (APSAR), Bali, Indonesia, 2021: 1–4. doi: 10.1109/APSAR52370.2021.9688420.

[77] DU Wenliang, ZHOU Yong, ZHU Hancheng, et al. A semi-supervised image-to-image translation framework for SAR-optical image matching[J]. IEEE Geoscience and Remote Sensing Letters, 2022, 19: 4516305. doi: 10.1109/LGRS.2022.3223353.

[78] WANG Jinyu, YANG Haitao, LIU Zhengjun, et al. A semi-supervised method using cycle consistency and multi-perspective dilated for SAR-to-Optical translation[J]. iScience, 2025, 28(5): 112401. doi: 10.1016/j.isci.2025.112401.

[79] GUO Zhe, LUO Rui, CAI Qinglin, et al. Scene-embedded generative adversarial networks for semi-supervised SAR-to-Optical image translation[J]. IEEE Geoscience and Remote Sensing Letters, 2024, 21: 4018005. doi: 10.1109/LGRS.2024.3471553.

[80] ZHAO Jiaqi, NI Wenxin, ZHOU Yong, et al. SAR-to-Optical image translation by a variational generative adversarial network[J]. Remote Sensing Letters, 2022, 13(7): 672–682. doi: 10.1080/2150704X.2022.2068986.

[81] BRALET A, NGO T N, TROUVÉ E, et al. DEM-assisted neural network for SAR-to-Optical image translation[C]. IGARSS 2024–2024 IEEE International Geoscience and Remote Sensing Symposium, Athens, Greece, 2024: 7649–7652. doi: 10.1109/IGARSS53475.2024.10641788.

[82] BAI Xinyu, PU Xinyang, and XU Feng. Conditional diffusion for SAR to optical image translation[J]. IEEE Geoscience and Remote Sensing Letters, 2024, 21: 4000605. doi: 10.1109/LGRS.2023.3337143.

[83] SHI Hao, CUI Zihan, CHEN Liang, et al. A brain-inspired approach for SAR-to-Optical image translation based on diffusion models[J]. Frontiers in Neuroscience, 2024, 18: 1352841. doi: 10.3389/fnins.2024.1352841.

[84] BAI Xinyu and XU Feng. SAR to optical image translation with color supervised diffusion model[C]. IGARSS 2024–2024 IEEE International Geoscience and Remote Sensing Symposium, Athens, Greece, 2024: 963–966. doi: 10.1109/IGARSS53475.2024.10640647.

[85] QIN Jiang, ZOU Bin, ZHANG Lamei, et al. SAR-to-Optical image translation using conditional denoising diffusion probabilistic models[C]. 2024 International Radar Conference (RADAR), Rennes, France, 2024: 1–5. doi: 10.1109/RADAR58436.2024.10993599.

[86] QIN Jiang, WANG Kai, ZOU Bin, et al. Conditional diffusion model with spatial-frequency refinement for SAR-to-Optical image translation[J]. IEEE Transactions on Geoscience and Remote Sensing, 2024, 62: 5226914. doi: 10.1109/TGRS.2024.3491826.

[87] YOU Ruixi, JIA Hecheng, and XU Feng. Unpaired object-level SAR-to-Optical image translation for aircraft with keypoints-guided diffusion models[EB/OL]. https://arxiv.org/abs/2503.19798, 2025.

[88] AYDIN K, HANNA J, and BORTH D. SAR-to-RGB translation with latent diffusion for earth observation[EB/OL]. https://arxiv.org/abs/2504.11154, 2025.

[89] GOU Shuiping, WANG Xin, WANG Xinlin, et al. Interpretable matching of optical-SAR image via dynamically conditioned diffusion models[C]. The 32nd ACM International Conference on Multimedia, Melbourne, Australia, 2024: 4358–4367. doi: 10.1145/3664647.3681700.

[90] BAI Xinyu and XU Feng. Accelerating diffusion for SAR-to-Optical image translation via adversarial consistency distillation[EB/OL]. https://arxiv.org/abs/2407.06095, 2024.

[91] QIN Jiang, ZOU Bin, LI Haolin, et al. Efficient end-to-end diffusion model for one-step SAR-to-Optical translation[J]. IEEE Geoscience and Remote Sensing Letters, 2025, 22: 4000405. doi: 10.1109/LGRS.2024.3506566.

[92] GUO Zhe, LIU Jiayi, CAI Qinglin, et al. Learning SAR-to-Optical image translation via diffusion models with color memory[J]. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 2024, 17: 14454–14470. doi: 10.1109/JSTARS.2024.3439516.

[93] KIM S H and CHUNG D. Conditional brownian bridge diffusion model for VHR SAR to optical image translation[J]. IEEE Geoscience and Remote Sensing Letters, 2025, 22: 4008305. doi: 10.1109/LGRS.2025.3562401.

[94] WANG Xuesong and CHEN Siwei. Polarimetric synthetic aperture radar interpretation and recognition: Advances and perspectives[J]. Journal of Radars, 2020, 9(2): 259–276. doi: 10.12000/JR19109.

[95] SCHMITT M, HUGHES L H, KÖRNER M, et al. Colorizing sentinel-1 SAR images using a variational autoencoder conditioned on sentinel-2 imagery[C]. The International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Riva del Garda, Italy, 2018: 1045–1051. doi: 10.5194/isprs-archives-XLII-2-1045-2018.

[96] SHEN Kangqing, VIVONE G, YANG Xiaoyuan, et al. A benchmarking protocol for SAR colorization: From regression to deep learning approaches[J]. Neural Networks, 2024, 169: 698–712. doi: 10.1016/j.neunet.2023.10.058.

[97] SHEN Kangqing, VIVONE G, LOLLI S, et al. IcGAN4CoLSAR: A novel multispectral conditional generative adversarial network approach for SAR image colorization[J]. IEEE Geoscience and Remote Sensing Letters, 2025, 22: 4004605. doi: 10.1109/LGRS.2025.3534795.

[98] GAO Jianhao, YUAN Qiangqiang, LI Jie, et al. Cloud removal with fusion of high resolution optical and SAR images using generative adversarial networks[J]. Remote Sensing, 2020, 12(1): 191. doi: 10.3390/rs12010191.

[99] XIANG Xuanyu, TAN Yihua, and YAN Longfei. Cloud-guided fusion with SAR-to-Optical translation for thick cloud removal[J]. IEEE Transactions on Geoscience and Remote Sensing, 2024, 62: 5633715. doi: 10.1109/TGRS.2024.3431556.

[100] WANG Peng, CHEN Yongkang, HUANG Bo, et al. MT_GAN: A SAR-to-Optical image translation method for cloud removal[J]. ISPRS Journal of Photogrammetry and Remote Sensing, 2025, 225: 180–195. doi: 10.1016/j.isprsjprs.2025.04.011.

[101] CHRISTOVAM L E, SHIMABUKURO M H, GALO M L B T, et al. Evaluation of SAR to optical image translation using conditional generative adversarial network for cloud removal in a crop dataset[C]. The International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Nice, France, 2021: 823–828. doi: 10.5194/isprs-archives-XLIII-B3-2021-823-2021.

[102] BERMUDEZ J D, HAPP P N, OLIVEIRA D A B, et al. SAR to optical image synthesis for cloud removal with generative adversarial networks[C]. ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Karlsruhe, Germany, 2018: 5–11. doi: 10.5194/isprs-annals-IV-1-5-2018.

[103] LIU Rui, MENG Siqi, PENG Yini, et al. TransFusion-CR: Two-phase SAR-to-Optical translation and deep feature fusion for cloud removal[J]. IEEE Transactions on Geoscience and Remote Sensing, 2024, 62: 5635911. doi: 10.1109/TGRS.2024.3439854.

[104] DARBAGHSHAHI F N, MOHAMMADI M R, and SORYANI M. Cloud removal in remote sensing images using generative adversarial networks and SAR-to-Optical image translation[J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 4105309. doi: 10.1109/TGRS.2021.3131035.

[105] HAN Zhen, LV Ning, SU Tao, et al. A structure consistency generative adversarial network for SAR-optical image registration[C]. 2024 IEEE International Conference on Smart Internet of Things (SmartIoT), Shenzhen, China, 2024: 197–203. doi: 10.1109/SmartIoT62235.2024.00037.

[106] NIE Han, FU Zhitao, TANG Bohui, et al. A dual-generator translation network fusing texture and structure features for SAR and optical image matching[J]. Remote Sensing, 2022, 14(12): 2946. doi: 10.3390/rs14122946.

[107] TORIYA H, DEWAN A, and KITAHARA I. SAR2OPT: Image alignment between multi-modal images using generative adversarial networks[C]. IGARSS 2019–2019 IEEE International Geoscience and Remote Sensing Symposium, Yokohama, Japan, 2019: 923–926. doi: 10.1109/IGARSS.2019.8898605.

[108] JAMES L, NIDAMANURI R R, KRISHNAN S M, et al. A novel approach for SAR to optical image registration using deep learning[C]. 2023 International Conference on Machine Intelligence for GeoAnalytics and Remote Sensing (MIGARS), Hyderabad, India, 2023: 1–4. doi: 10.1109/MIGARS57353.2023.10064578.

[109] HUANG Xuejun, WEN Liwu, and DING Jinshan. SAR and optical image registration method based on improved CycleGAN[C]. 2019 6th Asia-Pacific Conference on Synthetic Aperture Radar (APSAR), Xiamen, China, 2019: 1–6. doi: 10.1109/APSAR46974.2019.9048448.

[110] HONG Yumeng, PAN Jun, XU Jiangong, et al. SMAF-net: Semantics-guided modality transfer and hierarchical feature fusion for optical-SAR image registration[J]. International Journal of Applied Earth Observation and Geoinformation, 2025, 143: 104827. doi: 10.1016/j.jag.2025.104827.

[111] SONOBE R, TANI H, ZADI M, et al. Improving crop classification accuracy using Sentinel-1 C-SAR data and GAN-generated optical images[J]. Journal of Applied Remote Sensing, 2025, 19(2): 024505. doi: 10.1117/1.JRS.19.024505.

[112] ZHANG Jiexin, ZHOU Jianjiang, LI Minglei, et al. Quality assessment of SAR-to-Optical image translation[J]. Remote Sensing, 2020, 12(21): 3472. doi: 10.3390/rs12213472.

[113] KWAK G H and PARK N W. Assessing the potential of multi-temporal conditional generative adversarial networks in SAR-to-Optical image translation for early-stage crop monitoring[J]. Remote Sensing, 2024, 16(7): 1199. doi: 10.3390/rs16071199.

[114] HU Xikun, ZHANG Puzhao, and BAN Yifang. Gan-based SAR to optical image translation in fire-disturbed regions[C]. IGARSS 2022–2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 2022: 1456–1459. doi: 10.1109/IGARSS46834.2022.9884234.

[115] HU Xikun, ZHANG Puzhao, BAN Yifang, et al. GAN-based SAR and optical image translation for wildfire impact assessment using multi-source remote sensing data[J]. Remote Sensing of Environment, 2023, 289: 113522. doi: 10.1016/j.rse.2023.113522.

[116] LEY A, DHONDT O, VALADE S, et al. Exploiting GAN-based SAR to optical image transcoding for improved classification via deep learning[C]. 12th European Conference on Synthetic Aperture Radar, Aachen, Germany, 2018: 1–6.

[117] AMITRANO D. Multitemporal SAR-to-Optical image translation using Pix2Pix with application to vegetation monitoring[J]. IEEE Access, 2024, 12: 124402–124413. doi: 10.1109/ACCESS.2024.3454513.

[118] ZHANG Qian, LIU Xiangnan, LIU Meiling, et al. Comparative analysis of edge information and polarization on SAR-to-Optical translation based on conditional generative adversarial networks[J]. Remote Sensing, 2021, 13(1): 128. doi: 10.3390/rs13010128.

[119] BRUNE E and BAN Yifang. SAR-to-Optical translation using conditional diffusion models for wildfire-burned area segmentation[C]. IGARSS 2024–2024 IEEE International Geoscience and Remote Sensing Symposium, Athens, Greece, 2024: 7011–7015. doi: 10.1109/IGARSS53475.2024.10641072.

[120] LEE I H and PARK C G. SAR-to-virtual optical image translation for improving SAR automatic target recognition[J]. IEEE Geoscience and Remote Sensing Letters, 2023, 20: 4010105. doi: 10.1109/LGRS.2023.3312140.

[121] SUN Yuchuang, YAN Kaijia, and LI Wangzhe. CycleGAN-based SAR-optical image fusion for target recognition[J]. Remote Sensing, 2023, 15(23): 5569. doi: 10.3390/rs15235569.

[122] SUN Yuchuang, JIANG Wen, YANG Jiyao, et al. SAR target recognition using cGAN-based SAR-to-Optical image translation[J]. Remote Sensing, 2022, 14(8): 1793. doi: 10.3390/rs14081793.

[123] SCHMITT M, HUGHES L H, and ZHU X X. The SEN1-2 dataset for deep learning in SAR-optical data fusion[C]. ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Karlsruhe, Germany, 2018: 141–146. doi: 10.5194/isprs-annals-IV-1-141-2018.

[124] SUMBUL G, DE WALL A, KREUZIGER T, et al. BigEarthNet-MM: A large-scale, multimodal, multilabel benchmark archive for remote sensing image classification and retrieval [software and data sets][J]. IEEE Geoscience and Remote Sensing Magazine, 2021, 9(3): 174–180. doi: 10.1109/MGRS.2021.3089174.

[125] LI Xue, ZHANG Guo, CUI Hao, et al. MCANet: A joint semantic segmentation framework of optical and SAR images for land use classification[J]. International Journal of Applied Earth Observation and Geoinformation, 2022, 106: 102638. doi: 10.1016/j.jag.2021.102638.

[126] SCHMITT M, HUGHES L H, QIU Chunping, et al. SEN12MS--A curated dataset of georeferenced multi-spectral sentinel-1/2 imagery for deep learning and data fusion[EB/OL]. https://arxiv.org/abs/1906.07789, 2019.

[127] HUANG Meiyu, XU Yao, QIAN Lixin, et al. The QXS-SAROPT dataset for deep learning in SAR-optical data fusion[EB/OL]. https://arxiv.org/abs/2103.08259, 2021.

[128] ZHAO Yitao, CELIK T, LIU Nanqing, et al. A comparative analysis of GAN-based methods for SAR-to-Optical image translation[J]. IEEE Geoscience and Remote Sensing Letters, 2022, 19: 3512605. doi: 10.1109/LGRS.2022.3177001.

[129] SHERMEYER J, HOGAN D, BROWN J, et al. SpaceNet 6: Multi-sensor all weather mapping dataset[C]. IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Seattle, USA, 2020: 768–777. doi: 10.1109/CVPRW50498.2020.00106.

[130] WANG Yuanyuan and ZHU Xiaoxiang. The SARptical dataset for joint analysis of SAR and optical image in dense urban area[C]. IGARSS 2018–2018 IEEE International Geoscience and Remote Sensing Symposium, Valencia, Spain, 2018: 6840–6843. doi: 10.1109/IGARSS.2018.8518298.

[131] ENOMOTO K, SAKURADA K, WANG Weiming, et al. Image translation between SAR and optical imagery with generative adversarial nets[C]. IGARSS 2018–2018 IEEE International Geoscience and Remote Sensing Symposium, Valencia, Spain, 2018: 1752–1755.

[132] KIRILLOV A, MINTUN E, RAVI N, et al. Segment anything[C].
IEEE/CVF International Conference on Computer Vision, Paris, France, 2023: 3992–4003. doi: 10.1109/ICCV51070.2023.00371. [133] RADFORD A, KIM J W, HALLACY C, et al. Learning transferable visual models from natural language supervision[C]. 38th International Conference on Machine Learning, PmLR, Virtual Event, 2021: 8748–8763. -
