Journal of Radars, 2026, Online First

Citation: ZHONG Xiaoling, ZHOU Junlin, JIA Yong, et al. Multipath contrastive learning for non-line-of-sight human activity recognition using an ultrawideband radar[J]. Journal of Radars, in press. doi: 10.12000/JR25241

Multipath Contrastive Learning for Non-line-of-sight Human Activity Recognition Using an Ultrawideband Radar

DOI: 10.12000/JR25241 CSTR: 32380.14.JR25241


Abstract

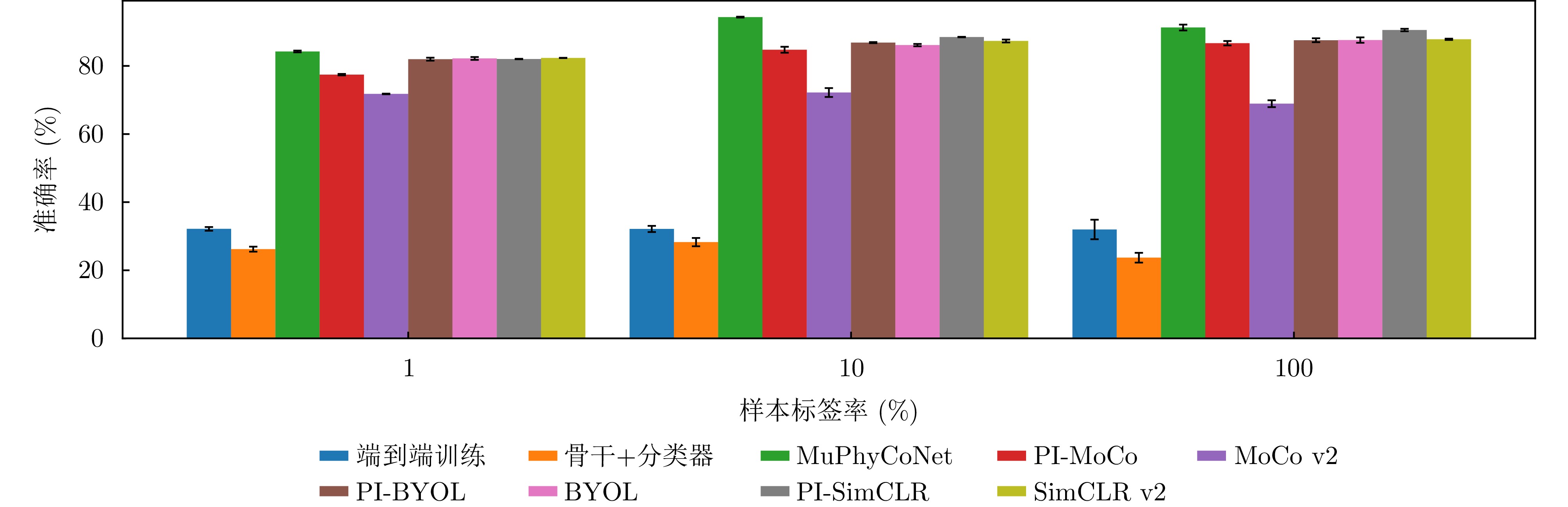

Non-Line-of-Sight (NLOS) human activity recognition using multipath-assisted radar has significant potential for applications in urban warfare, autonomous driving, and emergency rescue. Existing studies typically rely on supervised deep learning frameworks, which require large labeled datasets and exhibit limited robustness to noise. To address these limitations, this study treats different propagation paths as multiview observational channels. Through path separation and Time-Frequency (T-F) analysis, we construct equivalent multiview T-F spectrograms of human activities. Furthermore, we propose a multipath physics-embedded contrastive network (MuPhyCoNet). In this framework, multiview spectrograms from different propagation paths serve as inherent positive pairs for contrastive learning, enabling the model to extract discriminative features without extensive manual labeling. Moreover, we introduce two categories of physical constraints, observational and predictive, together with a physical consistency loss. The observational constraints compute physical divergence directly from the raw spectrograms, while the predictive constraints align the physical parameters regressed by the projection head with their observed counterparts to verify the learned physical characteristics. The integration of both constraints enhances the model’s robustness to noise and modeling errors while preserving high discriminative capability. We evaluate the proposed method on a self-collected NLOS human activity dataset (comprising 6 action classes and 19,500 spectrograms) acquired using an ultrawideband stepped-frequency continuous wave radar, following a “self-supervised pretraining + downstream classifier” strategy. Experimental results demonstrate that MuPhyCoNet achieves a classification accuracy of 94.32% with only 10% of the data labeled, outperforming MoCo v2 (72.19%) by 22.13 percentage points while exhibiting superior noise robustness.
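The idea of using spectrograms from different propagation paths as positive pairs can be illustrated with a generic InfoNCE-style contrastive loss. The following is only a minimal sketch under stated assumptions, not the MuPhyCoNet implementation: the function names `l2_normalize` and `multipath_info_nce`, the temperature value, the embedding size, and the random toy embeddings are all hypothetical, and the sketch uses plain in-batch negatives with no momentum encoder, queue, or physical consistency loss.

```python
import numpy as np


def l2_normalize(x, eps=1e-12):
    """Scale each row to unit length so dot products become cosine similarities."""
    norms = np.linalg.norm(x, axis=1, keepdims=True)
    return x / np.maximum(norms, eps)


def multipath_info_nce(z_a, z_b, temperature=0.1):
    """InfoNCE loss with in-batch negatives (illustrative, hypothetical helper).

    Row i of z_a and row i of z_b are assumed to be embeddings of the same
    activity sample observed through two different propagation paths, so they
    form the positive pair. Every other row of z_b acts as a negative.
    """
    z_a = l2_normalize(z_a)
    z_b = l2_normalize(z_b)
    logits = z_a @ z_b.T / temperature              # shape (N, N)
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Positives sit on the diagonal of the similarity matrix.
    return float(-np.mean(np.diag(log_prob)))


# Toy data standing in for encoder outputs (assumption: 8 samples, 16-D embeddings).
rng = np.random.default_rng(0)
shared = rng.normal(size=(8, 16))                   # content common to both paths
z_path_a = shared + 0.05 * rng.normal(size=shared.shape)
z_path_b = shared + 0.05 * rng.normal(size=shared.shape)

# Correctly paired views versus deliberately mismatched pairs.
aligned = multipath_info_nce(z_path_a, z_path_b)
shuffled = multipath_info_nce(z_path_a, np.roll(z_path_b, 1, axis=0))
print(f"aligned loss: {aligned:.4f}, shuffled loss: {shuffled:.4f}")
```

In this toy setting the loss is lower when the two views of each sample are correctly paired than when the pairing is shifted, which is the signal a contrastive encoder would be trained on without activity labels.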




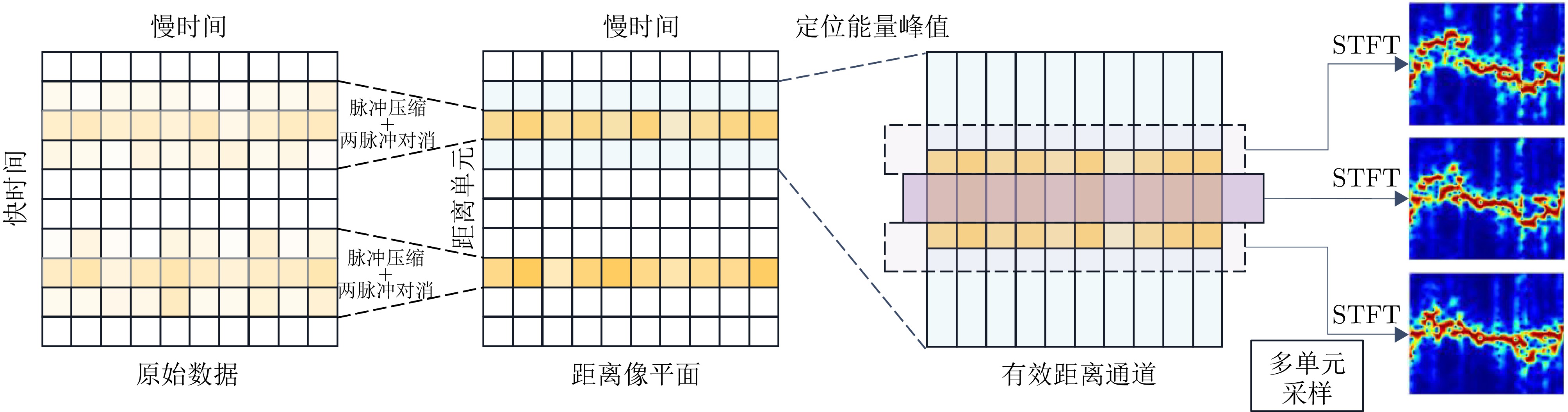

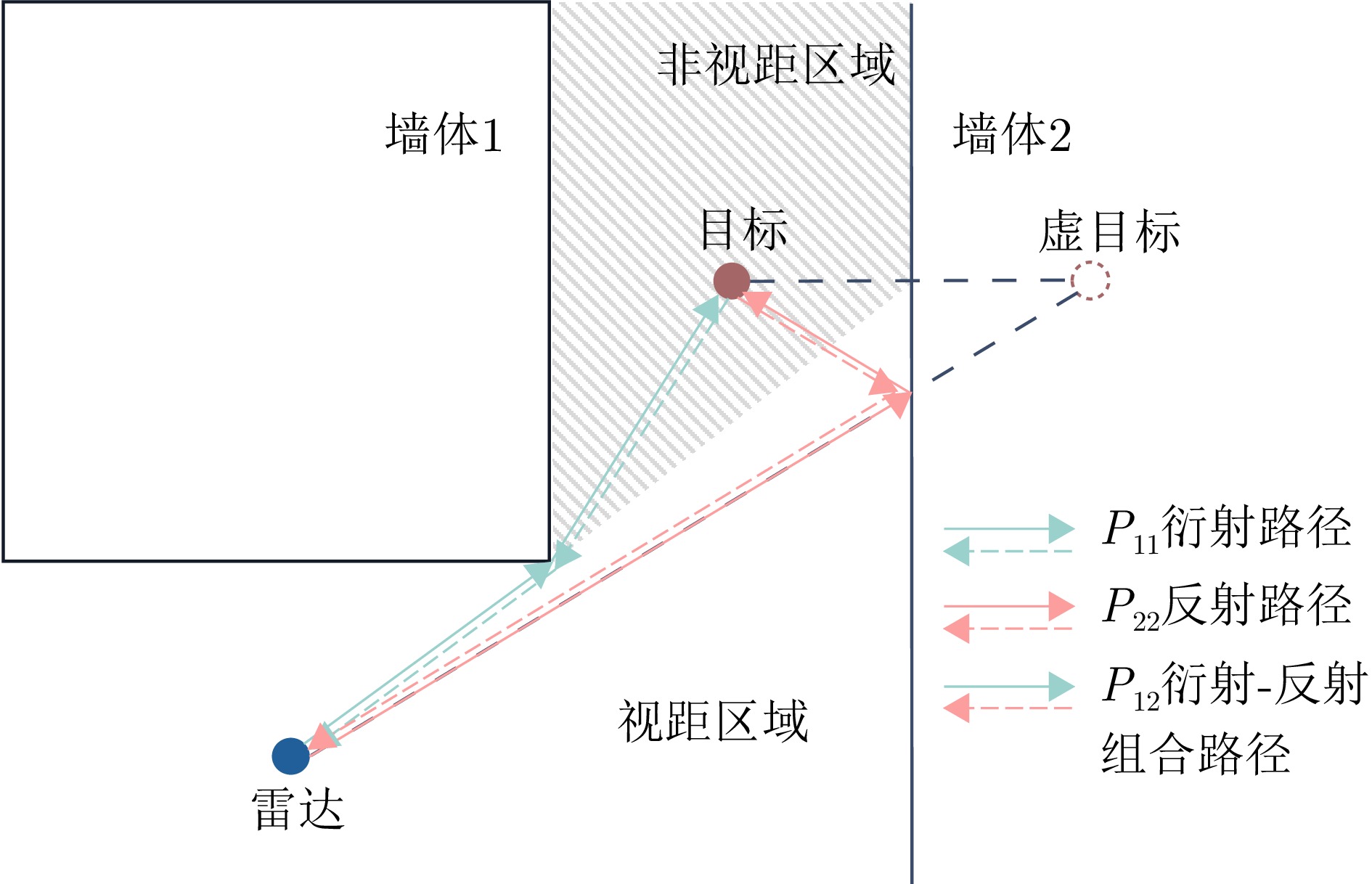

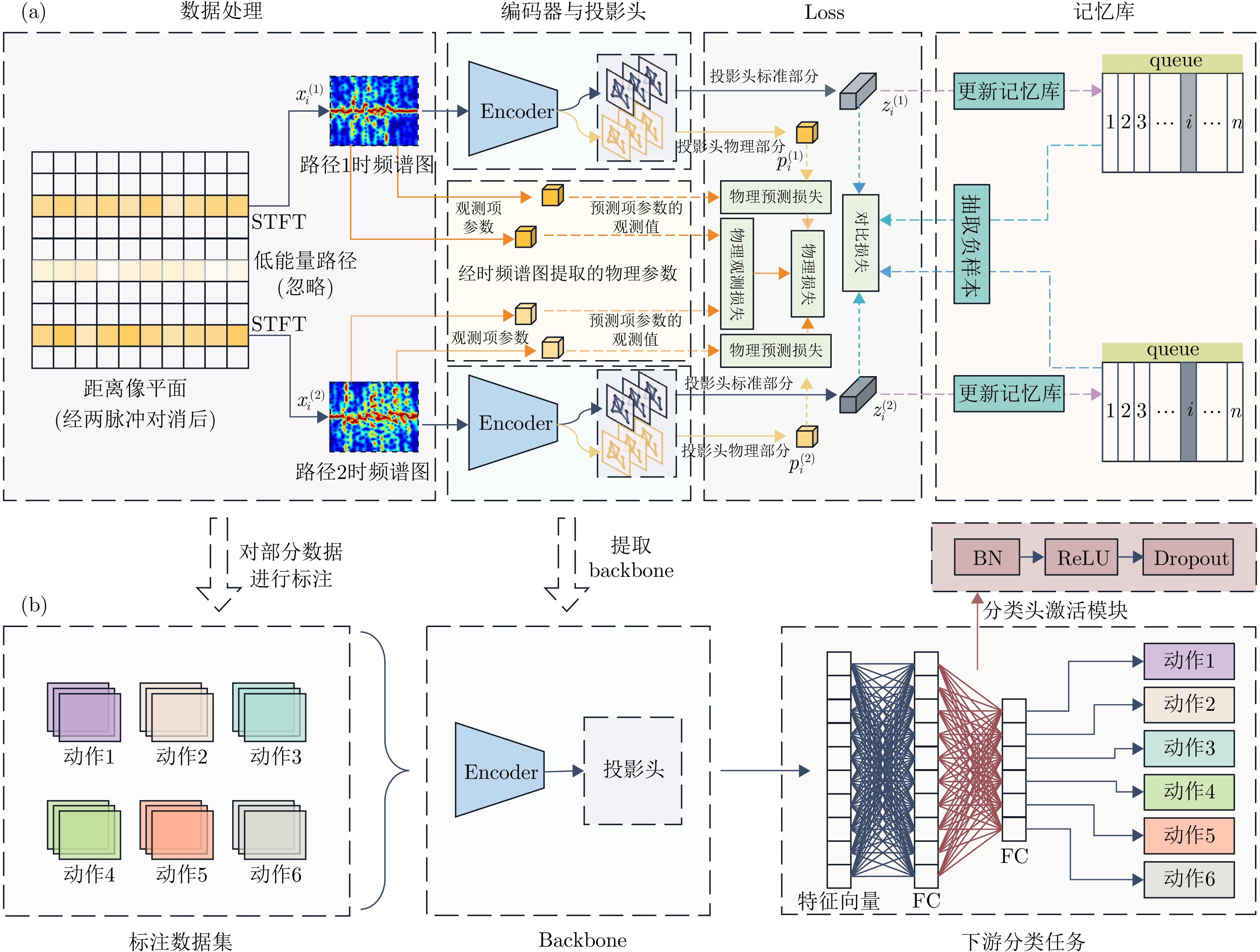

- Figure 1. Schematic of the multipath-based contrastive learning framework for radar activity recognition

- Figure 2. Data processing flowchart

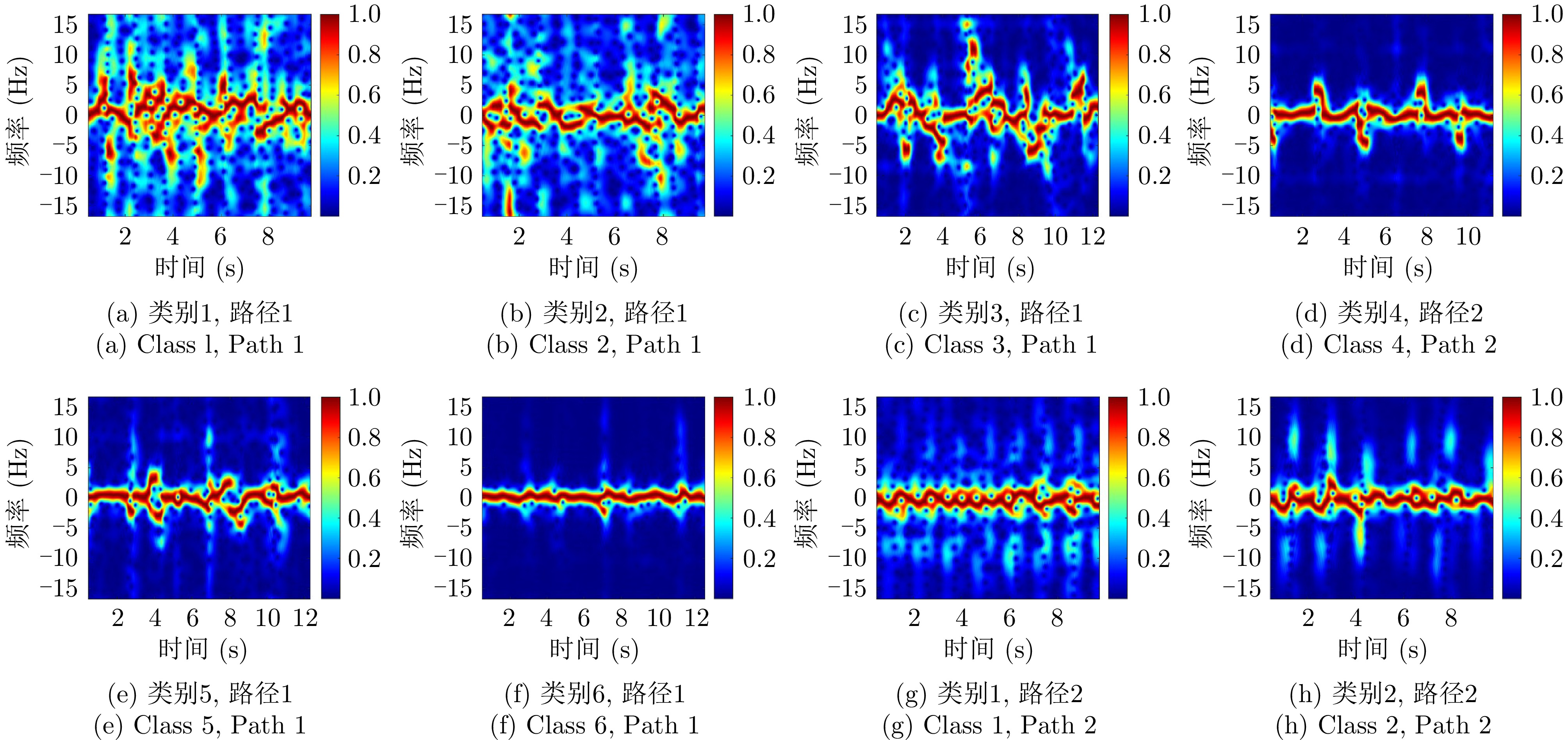

- Figure 3. Example of time-frequency spectrograms for different actions and different paths

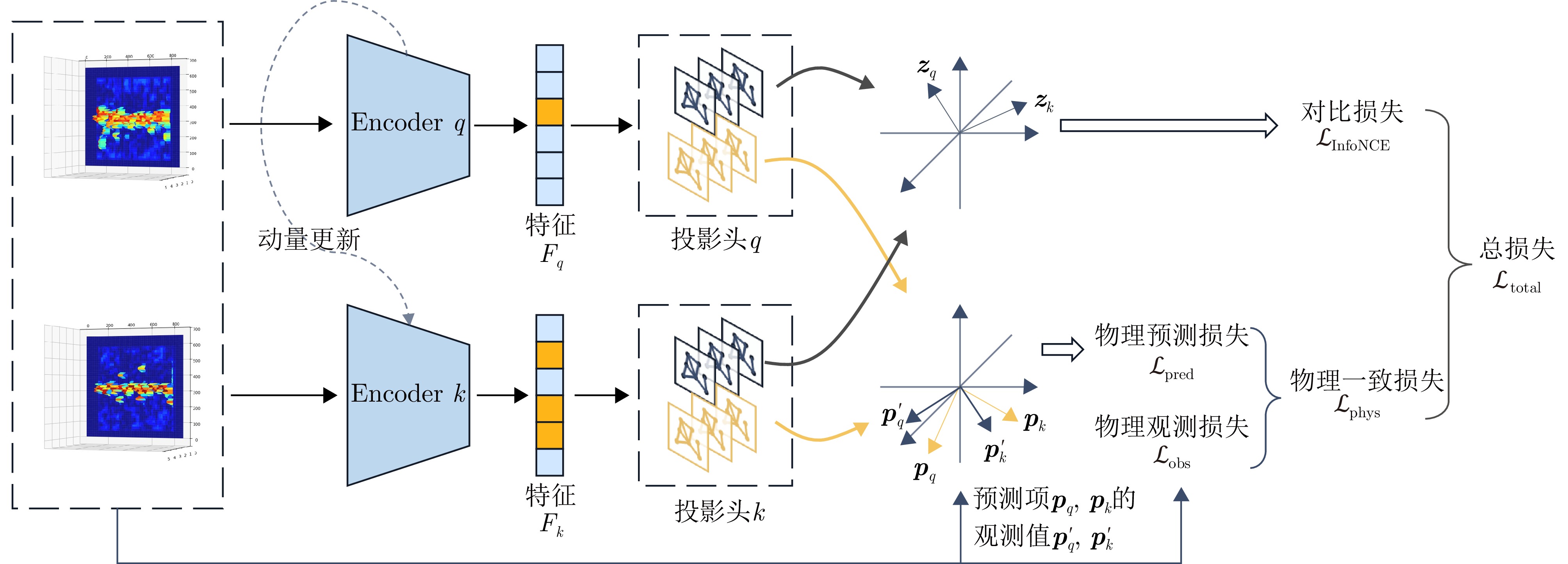

- Figure 4. Physics-information-injected contrastive learning network MuPhyCoNet

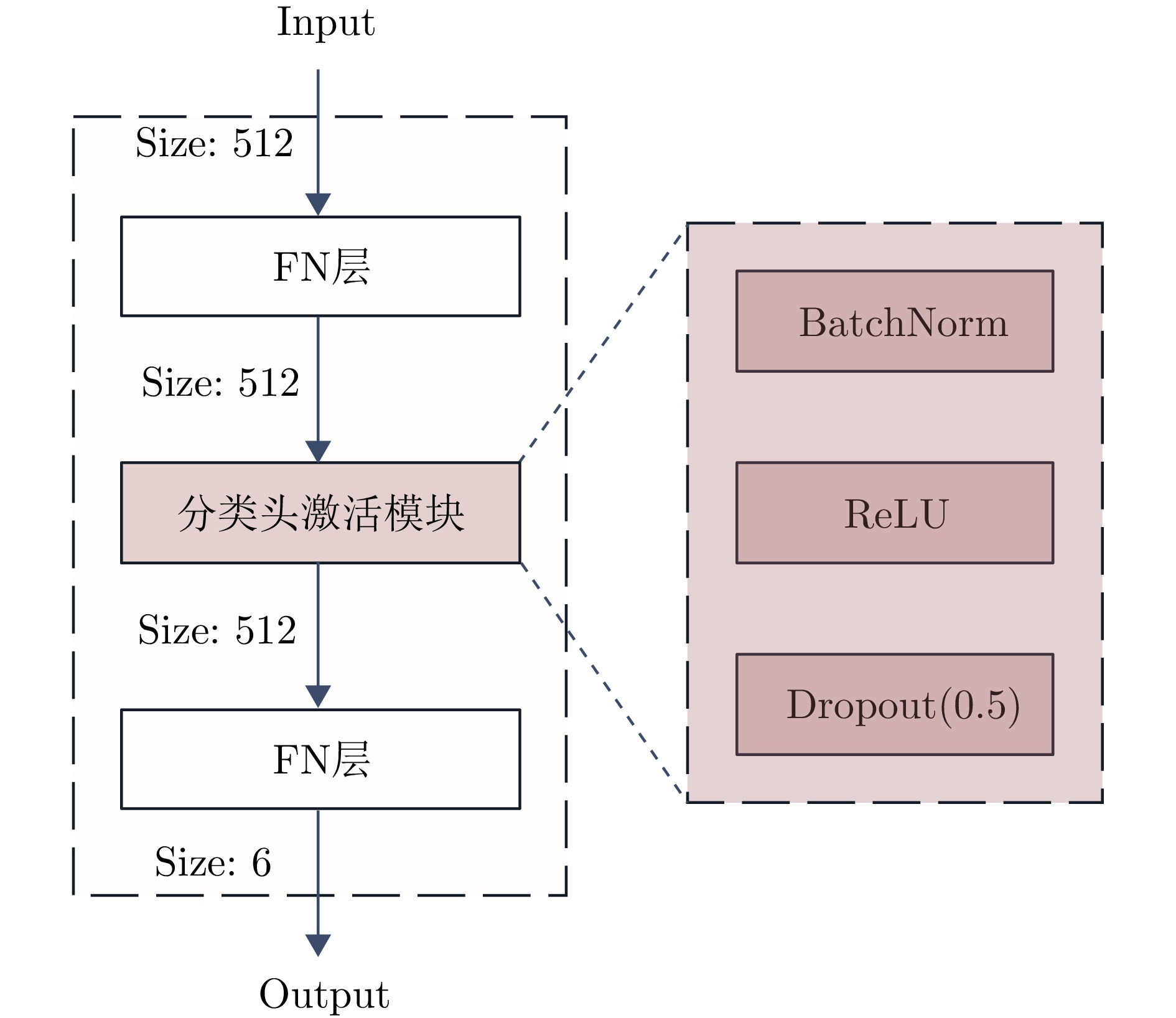

- Figure 5. Schematic of the downstream classifier structure

- Figure 6. Schematic of SFCW radar echo paths

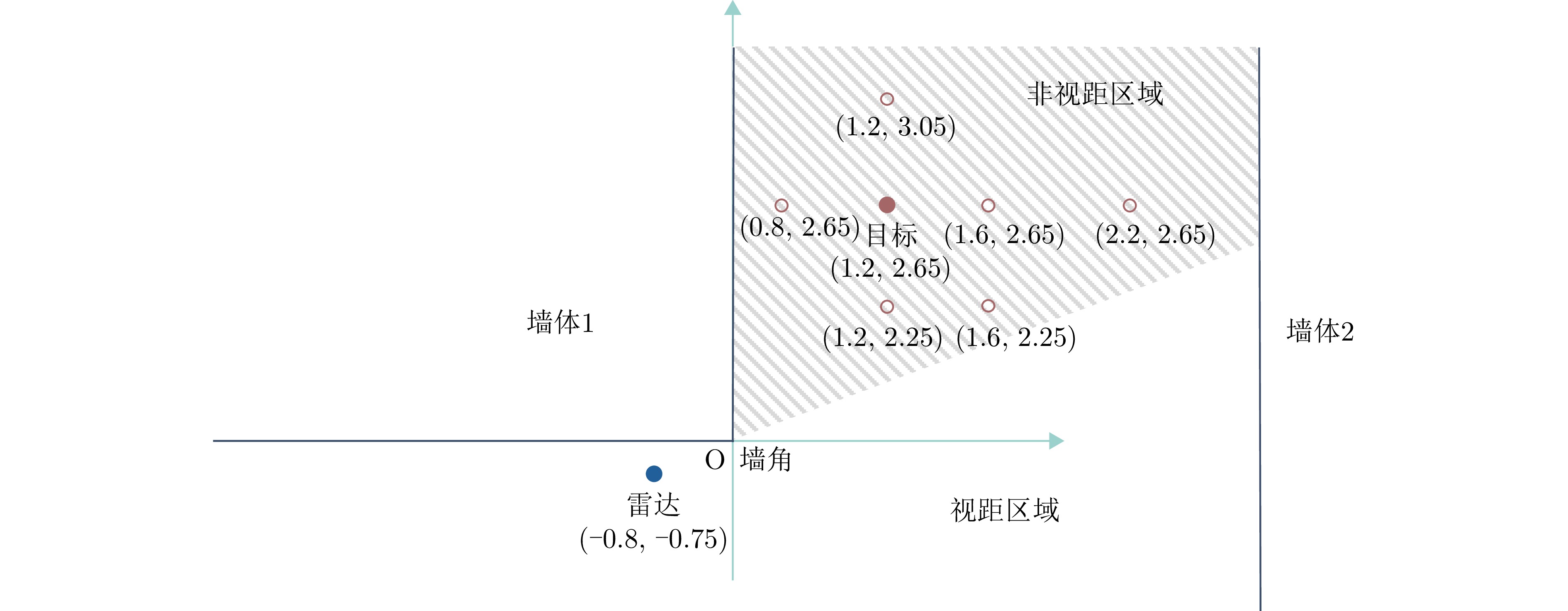

- Figure 7. Radar and target positions in the experimental scenario

- Figure 8. Classification accuracy under different label rates (grouped bar chart)

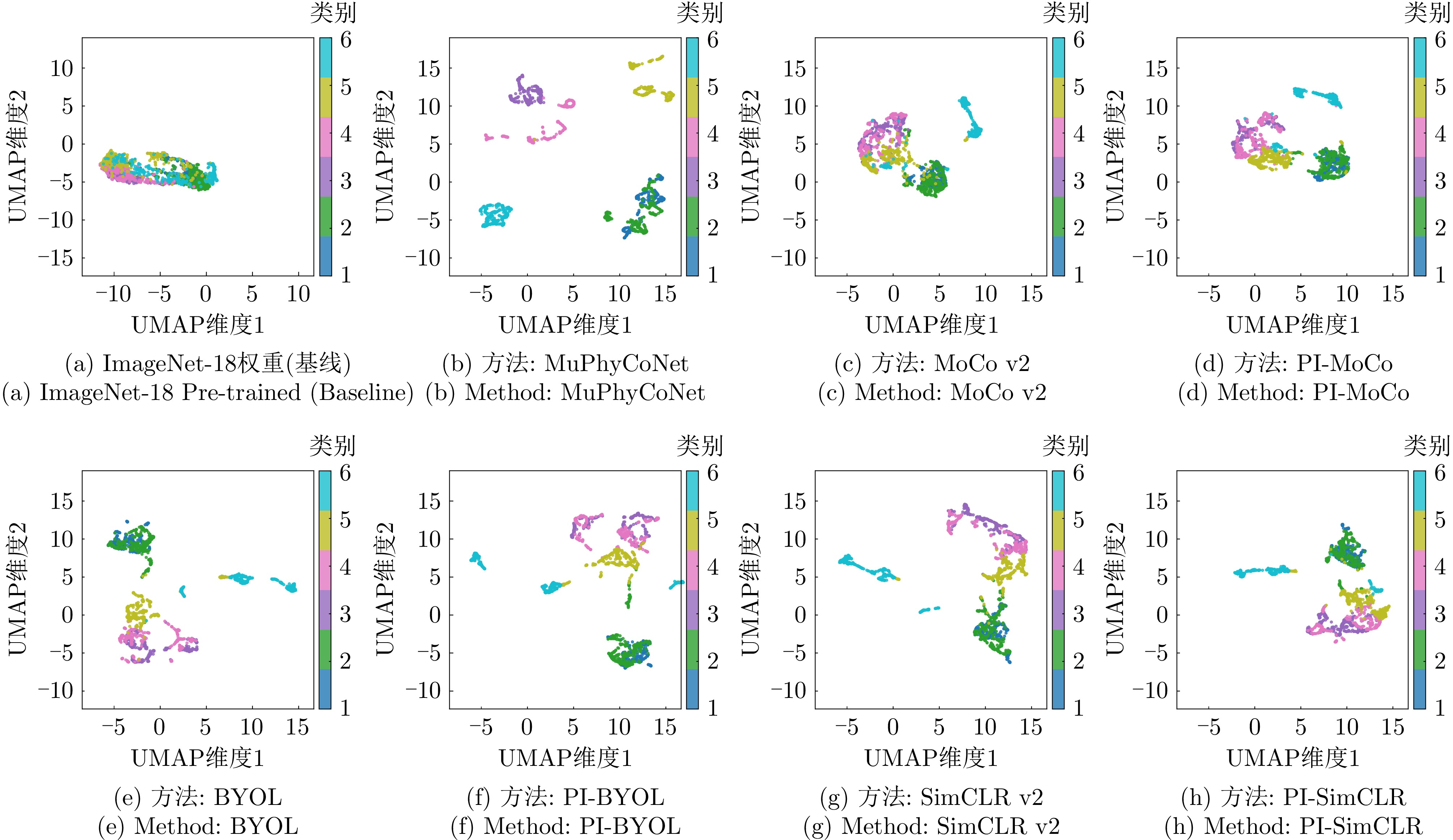

- Figure 9. Feature space visualization of different contrastive learning methods (PCA and UMAP dimensionality reduction)

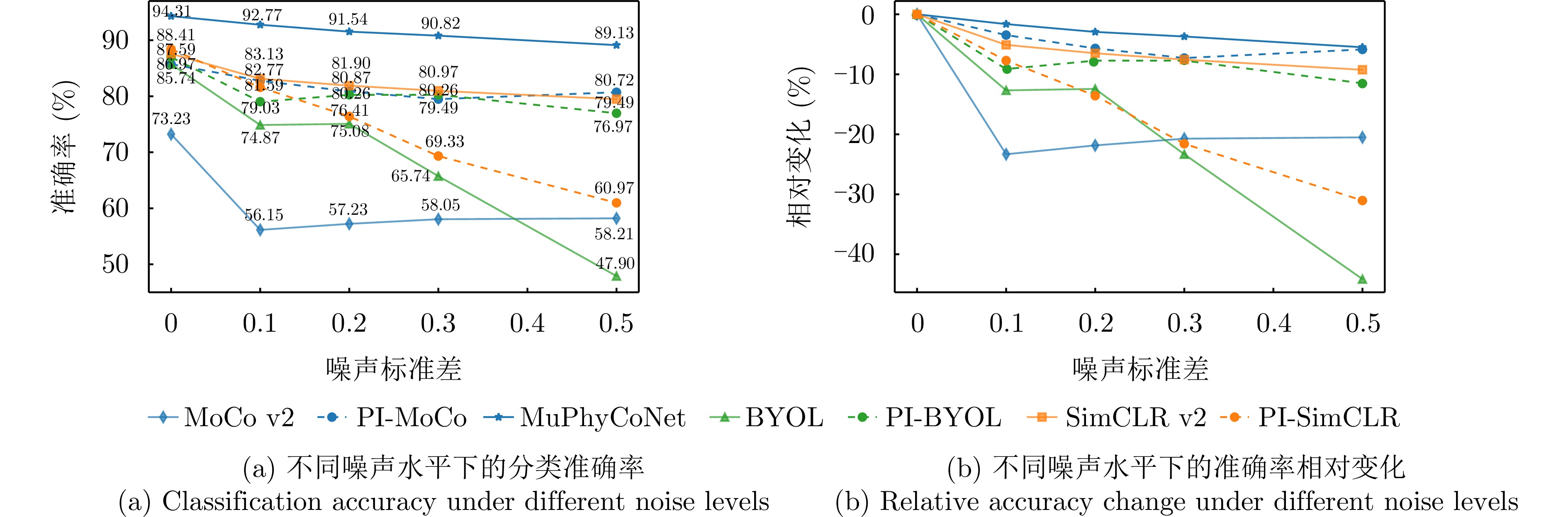

- Figure 10. Noise robustness analysis

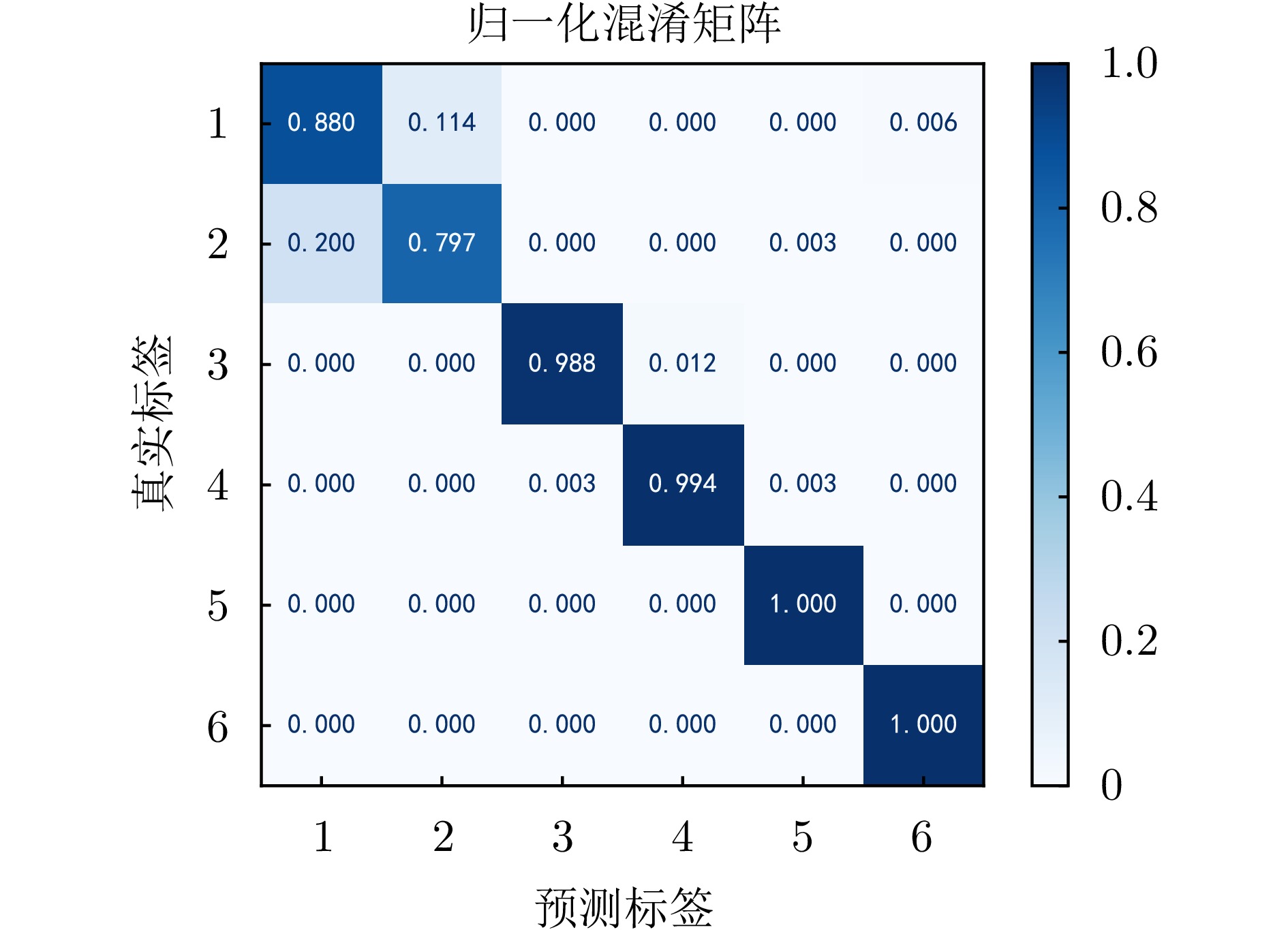

- Figure 11. Normalized confusion matrix
